Apple and Google Face Pressure to Block Nudes Without Age Verification

Summary

– The British government is reportedly preparing to ask Apple and Google to use algorithms to block the taking and sharing of nude photos on devices unless the user is verified as an adult.

– The proposal also seeks to prevent nude images from being displayed on screens unless adult verification, such as biometric checks, is completed.

– This approach shifts responsibility for age verification to app store platforms, requiring a one-time check with Apple or Google instead of with individual app developers.

– Apple currently offers some protections in Messages by blurring explicit images sent to children and notifying parents if they are viewed.

– The proposal is controversial due to significant privacy concerns, but it aims to address the serious problem of online child grooming and exploitation.

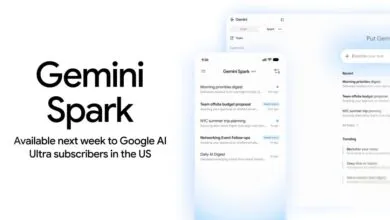

The British government is reportedly preparing to ask tech giants Apple and Google to implement measures that would prevent users from taking or viewing nude photographs on their devices unless they have been verified as adults. This proposal aims to protect children from exposure to explicit content and the risks associated with sharing such material. According to a report, officials want operating systems like iOS and Android to integrate nudity-detection algorithms that would block the capture, sharing, and display of images containing genitalia unless adult verification is confirmed. While framed as a request rather than a legal mandate for now, this move signals increasing governmental pressure on platform holders to assume greater responsibility for age-gating content.

This development aligns with a broader trend where app stores are being pushed to handle age verification, shifting the burden away from individual app developers. In the United States, similar discussions have led to the proposed App Store Accountability Act, which would require companies like Apple and Google to verify a user’s age once, then apply appropriate restrictions across all apps with age requirements. Apple has historically resisted such mandates, but proponents argue that a centralized system would enhance both security and user convenience. The UK initiative, however, goes significantly further by targeting the core functions of the device’s camera and display systems.

Currently, Apple offers some protective features within its ecosystem, particularly in the Messages app for families using iCloud Family Sharing. If a child receives a sexually explicit image, it is automatically blurred and accompanied by a warning. The child can choose to view it, but doing so triggers an additional alert explaining the content’s sensitive nature and notifies the parent designated as the group administrator. This existing framework shows a commitment to child safety, but the new government proposal would expand such protections to the entire device interface and all applications, not just messaging.

From a privacy perspective, this proposal is highly contentious. The notion of operating systems actively scanning photos and on-screen content, even if the scanning runs locally on the device, raises serious concerns about surveillance and the erosion of personal privacy. Critics argue that such invasive technology sets a dangerous precedent, potentially opening the door to broader monitoring. Implementing reliable adult verification, likely through biometric checks or official ID, also introduces risks related to data security and the creation of sensitive identity databases.

Nevertheless, the push for these measures stems from a genuine and growing crisis. Law enforcement agencies globally are reporting an increase in online grooming and exploitation, where predators impersonate peers to coerce teenagers into sharing explicit images. These situations often escalate into blackmail, with victims pressured to provide increasingly compromising material. The psychological toll is severe, and tragically, several teenage suicides have been linked to this cycle of abuse. This stark reality underscores the urgent need for effective solutions to protect young people in digital spaces.

While the specific proposal to block all nudity without verification may face substantial practical and ethical hurdles, it has succeeded in prompting a necessary public discussion. The challenge lies in balancing the imperative to safeguard children with the fundamental rights to privacy and freedom. Finding a middle ground that employs realistic, targeted interventions, rather than blanket surveillance, will be crucial. The coming days may see further details emerge as the UK government formalizes its request, placing Apple and Google at the center of a complex debate about safety, responsibility, and digital rights.

(Source: 9to5Mac)