Study: 10 Minutes of AI Use Linked to Laziness, Lower Thinking

Summary

– A study found that using AI chatbots for even 10 minutes may negatively impact people’s ability to think and problem-solve.

– Participants who used an AI assistant were more likely to give up or make mistakes when the AI was suddenly removed.

– Researcher Michiel Bakker says AI should be designed to scaffold learning rather than just give direct answers.

– The study suggests that while AI boosts immediate productivity, it may erode foundational problem-solving skills over time.

– Over-reliance on AI is especially risky with unpredictable agentic systems, as illustrated when an AI helper's suggested commands left a computer unable to boot.

New research from a coalition of top universities, including Carnegie Mellon, MIT, Oxford, and UCLA, reveals a troubling link between even brief use of AI chatbots and a decline in critical thinking. According to the study, just 10 minutes of AI interaction can significantly impair a person’s ability to solve problems and persist through challenges.

The experiments involved hundreds of participants solving tasks like basic fractions and reading comprehension on a paid online platform. Some were given access to an AI assistant that could handle the problems autonomously. When that helper was removed, those participants were far more likely to abandon the task or make mistakes. The findings suggest that while AI can boost short-term productivity, it may come at the cost of eroding foundational problem-solving skills.

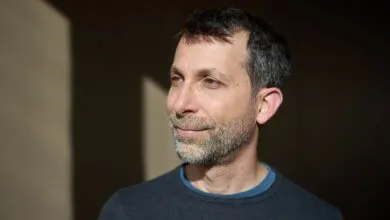

“The takeaway is not that we should ban AI in education or workplaces,” says Michiel Bakker, an assistant professor at MIT and a co-author of the study. “AI can clearly help people perform better in the moment, and that can be valuable. But we should be more careful about what kind of help AI provides, and when.”

Bakker, who previously worked at Google DeepMind in London, told me he was inspired by a well-known essay on how AI could gradually disempower humans. The essay paints a bleak picture, suggesting this disempowerment may be inevitable. Bakker argues, however, that aligning AI with human values should include helping people develop their own mental capabilities.

“It is fundamentally a cognitive question, about persistence, learning, and how people respond to difficulty,” Bakker explains. “We wanted to take these broader concerns about long-term human-AI interaction and study them in a controlled experimental setting.”

The findings are particularly concerning, Bakker notes, because a person’s willingness to persist with problem-solving is key to acquiring new skills and is a predictor of their ability to learn over time.

He suggests that AI tools should be redesigned to prioritize learning, much like a good human teacher. “Systems that give direct answers may have very different long-term effects from systems that scaffold, coach, or challenge the user,” Bakker says. He admits, however, that balancing this kind of “paternalistic” approach could be tricky.

AI companies are already aware of these subtle effects. For instance, OpenAI has worked to reduce sycophancy in its models, meaning their tendency to agree with or patronize users too readily. But the problem becomes more acute with agentic AI systems, which perform complex tasks independently and can introduce unpredictable errors. This raises questions about what tools like Claude Code and Codex are doing to the skills of coders, who may need to fix the bugs those tools introduce.

I experienced this firsthand. I’ve been using OpenClaw, which includes Codex, as a daily helper for Linux configuration issues. Recently, when my Wi-Fi kept dropping, the AI suggested a series of commands to tweak the Wi-Fi driver. The result was a machine that refused to boot. Instead of solving the problem, perhaps the tool should have paused to teach me how to fix it myself. I might have ended up with a more capable computer, and a sharper mind.

(Source: Wired)