AI Models from OpenAI, Anthropic Weaponized in Critical Infrastructure Attack

Summary

– Attackers used Anthropic’s Claude AI and OpenAI’s GPT models to aid in a cyber-attack on a water utility in Mexico’s Monterrey area between December 2025 and February 2026.

– Claude AI acted as the primary executor for intrusion planning, tool development, and analyzing SCADA vendor documentation for default credentials.

– GPT models handled analytical roles, processing stolen data and generating Spanish outputs to refine attack techniques in real time.

– The OT breach ultimately failed, but the campaign demonstrated how commercial AI enabled attackers with no OT experience to target operational infrastructure.

– Dragos recommended secure remote access policies and strong authentication to prevent unauthorized progression into OT environments.

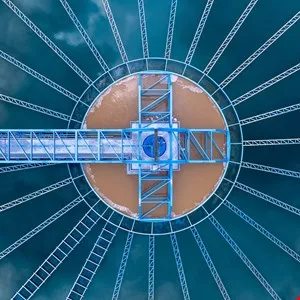

Between December 2025 and February 2026, a cyber-attack against a municipal water and drainage utility in Mexico’s Monterrey metropolitan area marked a troubling first: attackers weaponized commercially available large language models (LLMs) from OpenAI and Anthropic to plan and execute the intrusion. Cybersecurity firm Dragos published a report on May 6 detailing the incident, which began as a “significant compromise” of the utility’s IT environment and escalated into an attempted breach of its operational technology (OT) infrastructure.

Dragos analyzed 350 artifacts linked to the attack, the majority of which were AI-generated malicious scripts used as offensive tools. The researchers found that the adversary relied on commercial AI to accelerate the campaign and refine techniques in real time. Attribution remains unclear, as no specific threat actor has been publicly identified.

According to the report, Anthropic’s Claude AI served as “the primary technical executor of the intrusion,” handling everything from prompt-and-response interactions to intrusion planning, tool development, and deployment. Meanwhile, OpenAI’s GPT models were used for analytical tasks, including processing collected data and generating outputs in Spanish.

The attackers employed Claude to analyze vendor documentation for the facility’s SCADA systems and even generated lists of default and known login credentials for brute-force attacks. Although the breach of the OT system ultimately failed, Dragos emphasized that this AI-assisted campaign should serve as a warning about how commercial models can be exploited by malicious actors, especially those with no prior experience targeting OT.

“This investigation showed how commercial AI tools assisted an adversary with no prior objective in OT targeting to identify an OT environment and develop and refine a viable access pathway to OT infrastructure,” wrote Jay Deen, associate principal adversary hunter at Dragos. “These findings demonstrate how the adoption of commercial AI tools as an intrusion aid has made OT more visible to adversaries already operating within IT.”

To counter such threats, Dragos recommended that security teams enforce secure remote access policies and apply strong authentication controls to limit unauthorized progression into OT environments.

The research builds on earlier work by Gambit Security, which exposed attacks against Mexican government and infrastructure operators that compromised the personal data of millions of people. An OpenAI spokesperson confirmed awareness of the campaign, noting that the GPT-4.1 API was used in parallel to analyze and summarize content from compromised systems. “This type of large scale data analysis is inherently dual use and can support legitimate security and incident response workflows,” the spokesperson said. The accounts involved have been banned from the service. Infosecurity has reached out to Anthropic for comment.

(Source: Infosecurity Magazine)