Meta signs deal for millions of Amazon AI chips

Summary

– Meta signed a deal to use millions of AWS Graviton CPUs to power its AI needs, marking a major win for Amazon’s homegrown chips.

– Graviton is an ARM-based CPU, not a GPU; Amazon says its latest version is built for AI agent workloads like real-time reasoning and multi-step coordination, while GPUs remain dominant for training models.

– The deal shifts a portion of Meta’s spending back to AWS, after Meta signed a $10 billion deal with Google Cloud in August.

– AWS announced the deal just as the Google Cloud Next conference ended, appearing to taunt its rival.

– The deal showcases AWS’s CPUs as a competitor to Nvidia’s Vera CPU, with Amazon selling access only through its cloud service.

Amazon has secured a significant win over its cloud rivals, landing a major deal with Meta that will see the social media giant deploy millions of AWS Graviton chips to fuel its expanding artificial intelligence operations. The announcement, made by Amazon on Friday, underscores the growing importance of custom silicon in the cloud computing wars.

AWS Graviton is an ARM-based CPU, not a GPU. While graphics processing units remain the dominant hardware for training large language models, the rise of AI agents is shifting some demand toward CPUs. These agents generate compute-heavy tasks such as real-time reasoning, code generation, and complex multi-step coordination. Amazon says its latest Graviton iteration was purpose-built to handle these AI inference workloads.

This partnership effectively redirects a portion of Meta’s massive spending back to Amazon Web Services, away from competitors like Google Cloud. Last August, Meta signed a six-year agreement with Google Cloud worth more than $10 billion, even though Meta had historically been primarily an AWS customer that also used Microsoft Azure.

The timing of Amazon’s announcement is telling. It arrived just as the Google Cloud Next conference wrapped up, a move that feels like a deliberate jab at its primary cloud competitor. Google, which also develops its own custom AI chips, unveiled new versions of its hardware during that very event.

Amazon does produce its own AI accelerator, Trainium, which handles both training and inference. However, Anthropic recently claimed a vast portion of those chips. Earlier this month, the Claude maker committed to spending $100 billion over ten years on AWS, with a heavy focus on Trainium. In exchange, Amazon agreed to invest an additional $5 billion in Anthropic, bringing its total investment to $13 billion.

The Meta deal gives Amazon a marquee AI customer as a proof point for its homegrown CPUs. These chips compete directly with Nvidia’s new Vera CPU, which is also ARM-based and designed for agentic AI workloads. The key difference is that Nvidia sells its hardware to enterprises and cloud providers, including AWS, while Amazon offers access to its chips only through its cloud service.

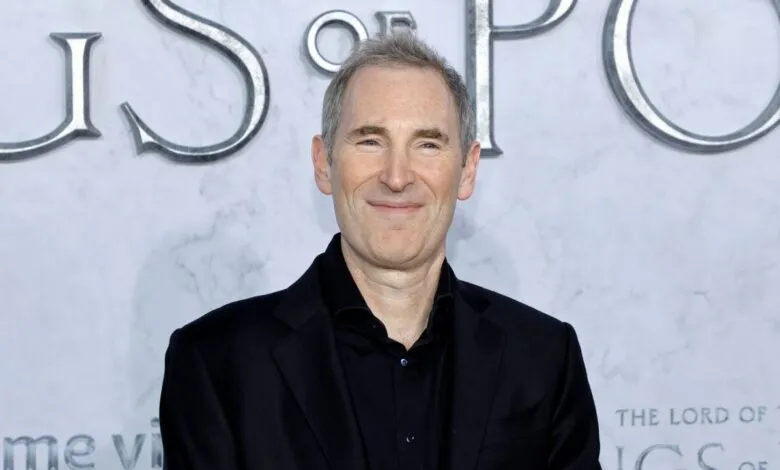

Earlier this month, Amazon CEO Andy Jassy took direct aim at Nvidia and Intel in his annual shareholder letter. He argued that enterprises demand better price-performance for AI, and he intends to win deals on that basis. This places immense pressure on Amazon’s internal chip design team to deliver. TechCrunch recently toured that team’s lab in an exclusive visit.

(Source: TechCrunch)