Google Cloud unveils new AI chips to rival Nvidia

Summary

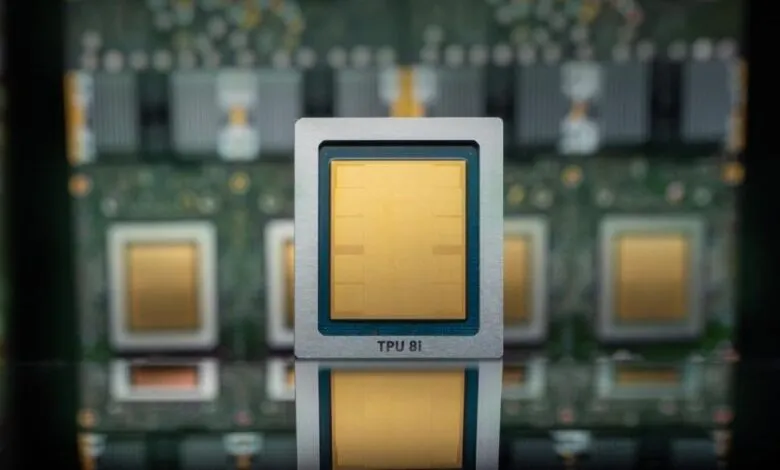

– Google Cloud announced that its eighth-generation TPUs are split into two specialized chips: the TPU 8t for model training and the TPU 8i for inference, which is the ongoing use of models after training.

– The new TPUs offer significant performance improvements, including up to 3x faster training, 80% better performance per dollar, and the ability to link over 1 million chips in a single cluster for greater efficiency.

– Google positions these custom chips as a supplement to, not a replacement for, Nvidia GPUs in its cloud, and it will also offer Nvidia’s upcoming Vera Rubin chip later this year.

– Cloud providers such as Google, Amazon, and Microsoft are building their own AI chips, which could eventually reduce their reliance on Nvidia, but Nvidia, valued at nearly $5 trillion, remains dominant for now.

– Google is collaborating with Nvidia to enhance networking efficiency in its cloud, specifically by working to improve the open-source Falcon networking software.

Google Cloud has introduced its eighth-generation custom AI processors, splitting the architecture into two distinct chips. The new TPU 8t is specifically engineered for AI model training, while the TPU 8i targets the inference phase, which is the process of running models after they have been deployed. According to the company, this new generation delivers substantial performance gains, including AI training speeds up to three times faster than its predecessors and an 80% improvement in performance per dollar. A key advancement is the ability to link over one million of these processors into a single, massive cluster, promising customers significantly greater computational power with reduced energy consumption and lower costs.

This move represents a strategic expansion of Google’s in-house silicon capabilities, but it does not signal a wholesale shift away from industry leader Nvidia. Like other major cloud providers such as Amazon and Microsoft, Google is using its custom chips to supplement, not replace, the Nvidia-based systems available on its platform. In a clear demonstration of this hybrid approach, Google has committed to offering Nvidia’s forthcoming Vera Rubin chip in its cloud later this year. The long-term vision for hyperscalers involves building a robust ecosystem where enterprise AI workloads increasingly migrate to their clouds and are optimized for their proprietary hardware, potentially reducing reliance on external suppliers over time.

However, betting against Nvidia’s dominance remains a risky proposition. The chipmaker’s market valuation now approaches $5 trillion, a testament to its entrenched position. As industry analyst Patrick Moorhead noted, predictions from as far back as 2016 that Google’s TPUs would challenge Nvidia have not materialized as expected. In fact, Google’s own growth as an AI cloud provider could drive more business to Nvidia, as a rising tide of AI adoption lifts demand for all capable hardware. The relationship is collaborative as well as competitive; Google has announced a partnership with Nvidia to enhance the efficiency of Nvidia systems within its cloud infrastructure. This joint engineering effort focuses on improving Falcon, a software-based networking technology Google open-sourced in 2023 through the Open Compute Project.

(Source: TechCrunch)