Build a Robot to Truly Understand Yourself

Summary

– The human self is fundamentally dual in nature, being both the perceiver (“I”) and the content of perception (“me”), as described by William James.

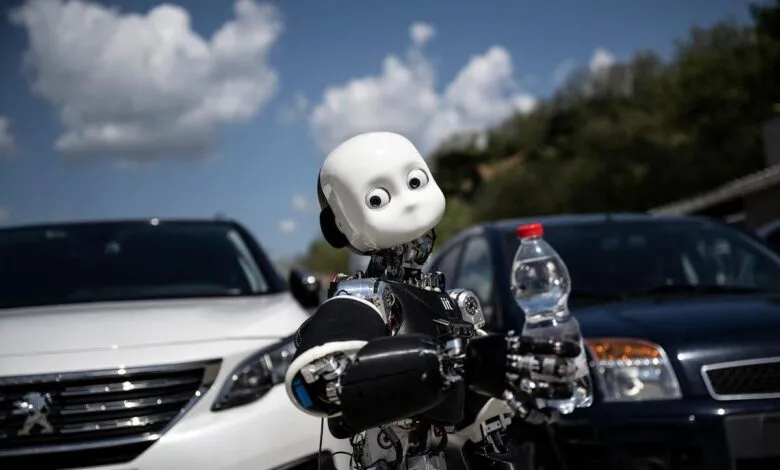

– A foundational aspect of the self is embodiment, suggesting a disembodied AI could not have a human-like self, but a physical robot potentially could.

– Neuroscientific and developmental evidence shows the self is not a single inner entity but a virtual model constructed from integrated brain networks, starting with a minimal self based on body ownership and agency.

– Researchers are using a synthetic approach, building robots with bodies and sensors, to understand and test theories of how a minimal self (distinguishing self from other) is constructed.

– While robots can model aspects of the self like body ownership and agency, the question of whether they could have subjective experience remains deeply challenging and unresolved.

The quest to understand the self, that fundamental sense of “I” and “me,” has captivated philosophers and scientists for centuries. The duality William James described, the self as both the perceiver and the perceived, remains a central mystery. Today, a novel approach to it is emerging: building synthetic selves in robots. This methodology deconstructs the complex human experience of selfhood by attempting to reconstruct its core components in an artificial system. Rather than relying solely on observation, we can test theories by engineering them.

A foundational aspect of our selfhood is embodiment. We experience the world from within a physical body, which creates a basic boundary between self and other. This suggests that a disembodied artificial intelligence, such as today’s large language models, could not possess a sense of self akin to our own. A synthetic system that approaches human-like self-awareness likely needs a physical form: a robot that can sense and act directly upon the world. This embodied perspective is crucial for developing what philosophers call the “minimal self,” which involves the basic senses of body ownership and agency.

Neuroscience reveals that the human self is not a single, localized entity in the brain but a constellation of interacting networks. Damage to specific regions can disrupt various aspects of self-experience. For instance, disorders of body ownership are linked to the right temporoparietal junction, while altered agency, as seen in schizophrenia, involves networks that predict the sensory outcomes of one’s actions. The insular cortex, vital for processing internal bodily signals, is key to emotional connection to the self. By deconstructing the self into these psychological and neural phenomena, we can aim to reconstruct them synthetically.

The journey of self begins in infancy. Newborns possess a rudimentary self/other distinction, understanding what is and is not part of their own body. They quickly learn about their own agency, the link between their actions and outcomes. However, a more mature sense of self as persisting through time and understanding others as separate selves develops gradually over early childhood. This progression hints at a layered architecture, where simpler, evolutionarily older brain systems scaffold the development of more complex, culturally influenced self-concepts.

Robotics provides a unique testbed for these ideas. A robot can begin to learn about its own embodiment through processes like “motor babbling,” generating random movements to discover its morphology. By correlating internal motor signals with sensory feedback from cameras or touch sensors, a robot can start to segment its perceptual world into “self” and “other.” Experiments have shown robots adapting their internal body models in ways analogous to the human rubber hand illusion, where synchronous visual and tactile stimuli can induce a feeling of ownership over an artificial limb.
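The correlation step described above can be illustrated with a toy simulation. This is a minimal sketch, not the method of any particular study: the channel names, gains, noise levels, and the 0.5 threshold are all assumptions chosen for illustration. A simulated robot issues random motor commands ("babbling") and labels each sensory channel as "self" if its signal correlates strongly with those commands.

```python
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / (var_x * var_y) ** 0.5


def babble_and_segment(steps=500, threshold=0.5, seed=0):
    """Issue random motor commands and label sensory channels self/other.

    All channels are hypothetical: two are driven by the motor command
    (the robot's own limb) and two vary independently of it.
    """
    rng = random.Random(seed)
    motor = []
    channels = {
        "own_hand": [],
        "own_elbow": [],
        "passing_object": [],
        "background": [],
    }
    for _ in range(steps):
        command = rng.uniform(-1.0, 1.0)  # motor babbling: a random command
        motor.append(command)
        # Channels that move with the robot's own body (assumed gains).
        channels["own_hand"].append(0.9 * command + rng.gauss(0, 0.1))
        channels["own_elbow"].append(-0.6 * command + rng.gauss(0, 0.1))
        # Channels driven by the outside world, unrelated to the command.
        channels["passing_object"].append(rng.uniform(-1.0, 1.0))
        channels["background"].append(rng.gauss(0, 0.1))

    labels = {}
    for name, signal in channels.items():
        r = pearson(motor, signal)
        labels[name] = "self" if abs(r) > threshold else "other"
    return labels


if __name__ == "__main__":
    for channel, label in babble_and_segment().items():
        print(channel, "->", label)
```

A real robot would work with high-dimensional camera and touch data and would have to cope with delays and nonlinear kinematics, so a single linear correlation per channel is only a stand-in for the idea that what moves when I move is probably me.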

The sense of agency is another pillar of the minimal self. Theories like the comparator model propose that the brain predicts the sensory consequences of self-generated actions and compares those predictions with what it actually senses. Roboticists have implemented predictive learning algorithms that allow a humanoid to distinguish its own mirror reflection from an identical robot, because the reflection’s movements can be predicted from the humanoid’s own motor commands while the other robot’s cannot. This work demonstrates how building synthetic systems can test and refine theoretical models of selfhood.
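The comparator idea can be sketched in a few lines. The following is an illustrative toy under stated assumptions, not a reconstruction of the robotics experiments mentioned above: the one-dimensional "movement" signal, the `TRUE_GAIN` constant, the learning rate, and the error threshold are all hypothetical. The agent first learns a simple forward model that maps motor commands to expected visual motion, then attributes an observed stream to itself only if the prediction error stays low.

```python
import random

TRUE_GAIN = 0.8  # hypothetical link between a motor command and seen motion


def learn_forward_model(rng, steps=300, learning_rate=0.1):
    """Learn a one-parameter forward model with an online delta rule."""
    gain = 0.0
    for _ in range(steps):
        command = rng.uniform(-1.0, 1.0)
        observed = TRUE_GAIN * command + rng.gauss(0, 0.05)
        error = observed - gain * command  # comparator: actual vs predicted
        gain += learning_rate * error * command
    return gain


def mean_prediction_error(gain, commands, observations):
    """Average absolute mismatch between predicted and observed motion."""
    total = sum(abs(obs - gain * cmd) for cmd, obs in zip(commands, observations))
    return total / len(commands)


def classify_streams(seed=1, steps=200, threshold=0.2):
    """Label two visual streams as 'self' or 'other' by prediction error."""
    rng = random.Random(seed)
    gain = learn_forward_model(rng)
    commands = [rng.uniform(-1.0, 1.0) for _ in range(steps)]
    # The mirror image follows the agent's own commands.
    mirror = [TRUE_GAIN * c + rng.gauss(0, 0.05) for c in commands]
    # An identical robot moves the same way but on its own commands.
    other_robot = [
        TRUE_GAIN * rng.uniform(-1.0, 1.0) + rng.gauss(0, 0.05)
        for _ in range(steps)
    ]
    result = {}
    for name, stream in (("mirror", mirror), ("other_robot", other_robot)):
        err = mean_prediction_error(gain, commands, stream)
        result[name] = "self" if err < threshold else "other"
    return result


if __name__ == "__main__":
    print(classify_streams())
```

Both streams look identical frame by frame. Only the match between predicted and observed movement separates them, which is the point of the comparator account.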

Moving beyond the minimal self means building its more sophisticated layers. The adult experience of self includes persistence through time, which relies heavily on episodic memory and the capacity for mental time travel. Research is exploring how AI generative models could allow robots to actively reconstruct past events and simulate future scenarios, connecting these to a core self-model. Another critical facet is understanding other selves. Robots are being developed with capacities for imitation and joint attention, using models of their own morphology to map onto and predict the actions of human partners, exploring the roots of social understanding.

A significant challenge is the role of language and culture in shaping our self-concept. As children acquire language, they absorb cultural narratives about personhood and begin to construct their own autobiographical stories. Computational work shows that robots can learn language by grounding words in sensory experience, and the narratives they generate to describe events can, in turn, shape their internal representations, mirroring how human self-narratives are formed.

A persistent objection is whether any of this engineering gets at the subjective “what it is like” quality of human experience. Some argue that biological foundations, from metabolism to the struggle for survival, are prerequisites for true subjectivity. An alternative view, the sensorimotor contingency theory, suggests that experience arises from the lawful patterns created when an embodied agent interacts with the world. By this measure, a suitably equipped robot engaging with its environment could have a form of experience, while a disembodied AI could only role-play selfhood using linguistic patterns.

Ultimately, the synthetic approach bridges psychology, neuroscience, and computation to frame the self as a virtual model. It starts with the sense of a boundary and agency, leading to a self/other distinction. Layers are added gradually: an awareness of persistence through time, the capacity to reflect, and the narrative identity shaped by culture and language. While large language models fluently use self-referential language, they lack the grounded, embodied engagement that anchors the human self. Building robots forces us to make theories concrete, offering a profound path to truly understanding ourselves.

(Source: Aeon)