GitHub Copilot goes usage-based June 1, a predictable shift

Summary

– GitHub will shift all Copilot plans to usage-based billing starting June 1, 2026, replacing the current premium request unit system with monthly AI Credits based on token consumption.

– Base subscription prices for Copilot Pro ($10/month) and Pro+ ($39/month) remain unchanged, but subscribers now receive monthly AI Credits equal to their subscription dollar value.

– Under the new AI Credits approach, when users run out of credits, they cannot use the service, unlike the previous system where they could downshift to a less capable model.

– GitHub states the change is necessary because Copilot has evolved into an agentic platform with higher compute and inference demands, making the current premium request model unsustainable.

– Users and observers expect significant price increases, with predictions of costs jumping 2 to 3 times by year’s end. Other AI companies, including OpenAI and Anthropic, have already raised their rates.

GitHub is making a major shift in how it charges for its flagship Copilot service, and the move has been long anticipated. Starting June 1, 2026, all Copilot plans will transition to a usage-based billing model, replacing the current premium request unit (PRU) system. Under the new approach, users will draw down a monthly allotment of GitHub AI Credits according to how many tokens they use, with input, output, and cached tokens each charged at published API rates. In plain terms, GitHub is adopting a token-based pricing model.
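To make the token arithmetic concrete, here is a minimal Python sketch of how a single session might draw down credits. The per-million-token rates are hypothetical placeholders, not GitHub's published rates, and the `Rates` class and `session_cost` function are illustrative names. The sketch also assumes that one dollar of AI Credits covers one dollar of token usage.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """Hypothetical USD prices per one million tokens (NOT GitHub's real rates)."""
    input_per_million: float
    output_per_million: float
    cached_per_million: float


def session_cost(input_tokens: int, output_tokens: int,
                 cached_tokens: int, rates: Rates) -> float:
    """Return the dollar value of credits one session would consume."""
    total = (
        input_tokens * rates.input_per_million
        + output_tokens * rates.output_per_million
        + cached_tokens * rates.cached_per_million
    )
    return total / 1_000_000


# Assumed rates, chosen only to keep the arithmetic easy to follow.
EXAMPLE_RATES = Rates(input_per_million=2.0,
                      output_per_million=10.0,
                      cached_per_million=0.2)

# A quick chat question: a few thousand tokens.
quick_chat = session_cost(2_000, 500, 0, EXAMPLE_RATES)

# A long agentic run: millions of tokens, many of them cached context.
agent_run = session_cost(3_000_000, 200_000, 10_000_000, EXAMPLE_RATES)

print(f"quick chat: ${quick_chat:.3f}")
print(f"agent run:  ${agent_run:.2f}")
```

Under these assumed rates the quick question costs under a cent, while the long agentic run costs about $10, which would use up a Copilot Pro subscriber's entire monthly allotment in one session.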

This change didn’t come out of nowhere. Just a week ago, GitHub blocked new Copilot subscriptions and started restricting model access for individual plans, even dropping Opus models entirely. Price hikes were clearly on the horizon. According to GitHub, the service has evolved far beyond its original role as a smart programming editor. It has become “an agentic platform capable of running long, multi-step coding sessions, using the latest models, and iterating across entire repositories.” The company noted that “agentic usage is becoming the default, and it brings significantly higher compute and inference demands.”

GitHub argues that its current PRU model is no longer sustainable. Under that system, “a quick chat question and a multi-hour autonomous coding session can cost the user the same amount,” with GitHub absorbing the escalating inference costs. The new usage-based model is designed to ensure long-term service reliability.

On the bright side, base subscription prices remain unchanged for now. Copilot Pro stays at $10 per month, and Pro+ at $39 per month. However, these subscriptions will now include monthly AI Credits matching their dollar value: Pro subscribers get $10 in credits, while Pro+ users get $39. It’s unclear why GitHub felt the need to spell this out so explicitly. Code completions and Next Edit suggestions will remain free and won’t consume AI Credits. Users on annual plans will stick with PRU-based pricing until expiration, at which point they can transition to Copilot Free with upgrade options or convert early to monthly plans with prorated credits.

For business users, Copilot Business at $19 per user per month and Copilot Enterprise at $39 per user per month keep their current pricing while adding equivalent monthly AI Credits per seat. To ease the transition, GitHub is offering promotional credits for June, July, and August 2026: Business customers get $30 per month, and Enterprise users get $70 per month.

But here’s the critical catch: in the past, when you ran out of PRUs, you simply downshifted to a less capable model. With the new AI Credits approach, if you’re out of credits, you’re out of luck. To keep working, you’ll need to pay more for additional credits.

Organizations can benefit from pooled usage across teams, which eliminates stranded capacity from individual unused credits. Administrators will gain budget controls at the enterprise, cost center, and user levels, with options to allow additional purchases or cap spending when included pools are exhausted.
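The pooling and budget-cap behavior described above can be sketched roughly as follows. This is an illustration under stated assumptions, not GitHub's implementation: the `CreditPool` class, its fields, and the rule that a request is blocked outright once the pool and any extra budget are exhausted are all hypothetical. The $19 per-seat figure comes from the Copilot Business price quoted earlier.

```python
from dataclasses import dataclass


@dataclass
class CreditPool:
    """Hypothetical shared pool of AI Credits for an organization (USD)."""
    included: float            # seats x per-seat monthly credits
    extra_budget: float = 0.0  # admin-approved additional spend; 0 = hard cap
    spent: float = 0.0

    def charge(self, cost: float) -> bool:
        """Record usage if it fits in the pool plus extra budget, else block it."""
        if self.spent + cost > self.included + self.extra_budget:
            return False  # out of credits and no budget left: request is blocked
        self.spent += cost
        return True

    @property
    def overage(self) -> float:
        """Dollars spent beyond the included pool (billed as additional credits)."""
        return max(0.0, self.spent - self.included)


# Five Copilot Business seats at $19 each pool into $95 of credits.
usage = (60.0, 10.0, 10.0, 10.0, 10.0)  # one heavy user, four light users

# Hard cap: no additional purchases allowed.
pool = CreditPool(included=5 * 19.0)
results = [pool.charge(cost) for cost in usage]

# Same usage, but the admin allows up to $20 of additional credits.
flexible = CreditPool(included=5 * 19.0, extra_budget=20.0)
flexible_results = [flexible.charge(cost) for cost in usage]

print(results, pool.spent)
print(flexible_results, flexible.overage)
```

In this sketch the heavy user's $60 is covered by credits the light users leave unused, which a per-user $19 allotment would not allow. With a hard cap the final request is blocked. With a $20 extra budget it goes through and the organization is billed $5 of overage.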

GitHub plans to launch a preview of bills in early May, giving users a look at projected costs before the new June bills arrive. Many users aren’t waiting to criticize the new pricing. One Reddit poster remarked, “I don’t see companies going to be all happy if they get a 50x larger bill. People really underestimate how many tokens they use.” Another shrugged, “They could’ve just shut down Copilot completely. Literally the only reason to stay is that you’re familiar with it and are not ready to invest 30 minutes of your life to get familiar with Claude code, Codex, or whatever.”

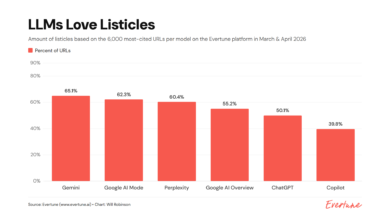

Despite the grumbling, this shift shouldn’t surprise anyone who has been paying attention to the soaring costs of AI. Memory is more expensive than ever, and gigawatt datacenters don’t build themselves. Other companies have already raised rates. OpenAI, for example, increased the cost for developers using its flagship GPT-5.2 model from $1.25 per million input tokens to $5.75. Anthropic also confirmed a de facto price increase for its Claude enterprise edition on April 15, moving from fixed pricing to a dynamic usage-based model.

Like it or not, the era of cheap AI is drawing to a close. I expect costs to jump by 2 to 3 times by year’s end, and I wouldn’t be surprised if prices end up far higher than that.

(Source: ZDNet)