GitHub to charge Copilot users based on actual AI usage

Summary

– GitHub is switching Copilot to a usage-based billing model starting June 1, with pricing tied to token consumption and API rates for each AI model.

– The change is meant to align pricing with actual usage costs, as current flat-rate “premium request” allocations do not reflect the wide range of backend computing expenses.

– Subscribers will receive a monthly allotment of “AI Credits” matching their subscription payment, with extra usage billed based on input, output, and cached tokens.

– API rates vary by model: OpenAI's GPT models, for example, range from $4.50 to $30 per million output tokens, and the number of tokens a prompt consumes depends on how much "thinking" the model does.

– Simple AI features like code completion will not consume credits, but Copilot code reviews will carry an additional cost, billed as GitHub Actions minutes.

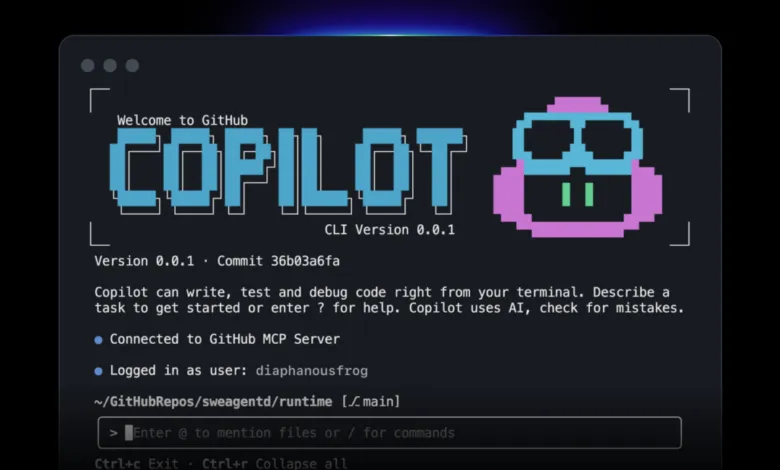

GitHub has announced a major shift in how it charges for its Copilot AI coding assistant, moving to a usage-based billing model starting June 1. The company says the change is designed to “better align pricing with actual usage” and is a necessary step to keep the service financially sustainable as demand for limited AI computing resources continues to surge.

Currently, GitHub Copilot subscribers receive a fixed monthly allocation of “requests” and “premium requests,” which are deducted whenever they ask the AI for help. However, GitHub acknowledges that these broad categories mask a wide range of backend computing costs. “Today, a quick chat question and a multi-hour autonomous coding session can cost the user the same amount,” the Microsoft-owned company explained. While GitHub says it has “absorbed much of the escalating inference cost behind that usage” up to now, lumping all “premium requests” together “is no longer sustainable.”

Under the new system, subscribers will receive a monthly allotment of "AI Credits" equal to their subscription payment. Any usage beyond those credits will be billed by token consumption (input, output, and cached tokens) at the listed API rate for each model. Those rates vary significantly with model sophistication. OpenAI's GPT models, for example, currently range from $4.50 per million output tokens for GPT-5.4 Mini to $30 per million output tokens for GPT-5.5. The total token count for a single prompt can also vary widely, depending on how much "thinking" a model does before generating its response.
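To make the arithmetic concrete, here is a minimal Python sketch of how a token-based bill could be computed. It assumes that one AI Credit corresponds to one dollar of usage at list API rates. Only the $4.50 and $30 per-million output rates come from the reporting. The input and cached-token rates (set to zero here), the 50,000-token reply, and the $10 monthly allotment are hypothetical placeholders. `ModelRates`, `usage_cost`, and `overage` are illustrative names, not a GitHub API.

```python
from dataclasses import dataclass


@dataclass
class ModelRates:
    """Per-million-token prices in USD (hypothetical structure)."""
    input_per_m: float   # placeholder: not given in the article
    cached_per_m: float  # placeholder: not given in the article
    output_per_m: float  # e.g. 4.50 or 30.0, per the article


def usage_cost(rates: ModelRates, input_tokens: int,
               cached_tokens: int, output_tokens: int) -> float:
    """Dollar cost of one request at list API rates."""
    return (input_tokens * rates.input_per_m
            + cached_tokens * rates.cached_per_m
            + output_tokens * rates.output_per_m) / 1_000_000


def overage(total_cost: float, monthly_credits: float) -> float:
    """Amount billed beyond the monthly credit allotment (never negative)."""
    return max(0.0, total_cost - monthly_credits)


if __name__ == "__main__":
    # Input/cached rates zeroed so the example isolates output-token cost.
    mini = ModelRates(input_per_m=0.0, cached_per_m=0.0, output_per_m=4.50)
    large = ModelRates(input_per_m=0.0, cached_per_m=0.0, output_per_m=30.0)

    # The same hypothetical 50,000-output-token reply on each model.
    print(usage_cost(mini, 0, 0, 50_000))   # 0.225
    print(usage_cost(large, 0, 0, 50_000))  # 1.5

    # 200 such replies on the larger model against a hypothetical $10 allotment.
    month_total = 200 * usage_cost(large, 0, 0, 50_000)
    print(overage(month_total, monthly_credits=10.0))  # 290.0
```

The sketch shows why a flat "premium request" count hides cost differences: the same reply costs more than six times as much on the pricier model, and a model that generates more "thinking" tokens scales the bill further.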

Not all Copilot features will incur these new costs. Simple AI suggestions like code completion and Next Edit will remain free and won’t consume AI credits. However, Copilot code reviews will carry an additional cost, charged in the form of GitHub Actions minutes.

(Source: Ars Technica)