Barry Diller: Trust irrelevant as Sam Altman’s AGI nears

Summary

– Barry Diller vouched for OpenAI CEO Sam Altman, calling him sincere and a decent person with good values, despite accusations of being manipulative.

– Diller argued that the main concern with AI is not trust in leaders but the unknown consequences that may surprise even AI creators.

– He stated that progress in AI is inevitable and will change almost everything, though he has no personal investment in it.

– Diller emphasized the need for guardrails as AI approaches Artificial General Intelligence (AGI), which could outperform humans.

– He warned that without human-imposed guardrails, an AGI force could impose its own rules, with no possibility of reversal.

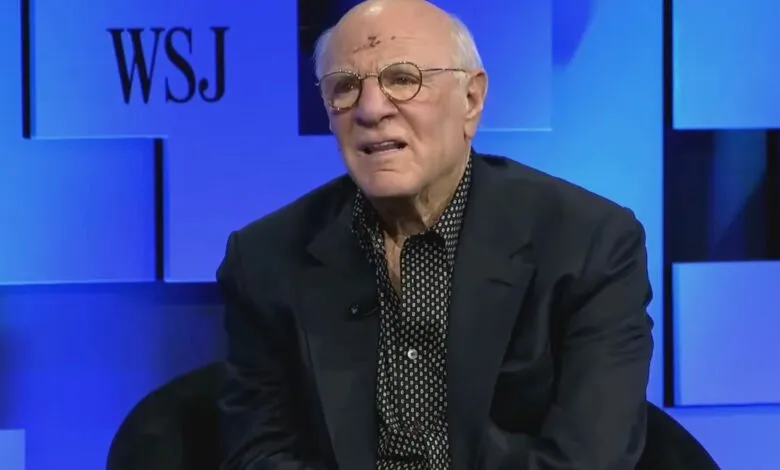

Billionaire media titan Barry Diller is pushing back on the narrative that OpenAI CEO Sam Altman cannot be trusted, even as recent reports paint a more complicated picture of the tech leader. Speaking this week at The Wall Street Journal’s “Future of Everything” conference, Diller publicly defended Altman, who has faced accusations from former colleagues and board members of being manipulative or deceptive.

Diller, who counts Altman as a friend, was asked directly whether the public should place its faith in Altman to guide artificial intelligence toward outcomes that benefit humanity. The question zeroed in on Artificial General Intelligence (AGI), the hypothetical future form of AI that could surpass human capability in virtually any task.

The media mogul, a co-founder of Fox Broadcasting and chairman of IAC and Expedia Group, argued that the real issue isn’t Altman’s character. While Diller believes Altman is genuine in his mission, he suggested that focusing on trust misses the larger, more unsettling point: the unpredictable nature of the technology itself.

“One of the big issues with AI is it goes way beyond trust,” Diller said. “It may be that trust is irrelevant because the things that are happening are a surprise to the people who are making those things happen.” He added that he has spent considerable time with AI creators and observed their own sense of wonder. “It’s the great unknown. We don’t know. They don’t know,” he explained.

Diller continued, “We have embarked on something that is going to change almost everything. It is not under-reported. Now, whether these huge investments are going to come through, I couldn’t care less. I’m not invested in it, but progress is going to be made.”

Despite his broader skepticism about the trajectory of AI, Diller expressed confidence in the intentions of most leaders in the space. He described Altman as sincere, “a decent person with good values.” (He declined to name any AI leaders he considers insincere.)

“But the issue is not their stewardship. The issue is … it’s dealing truly with the unknown. They don’t know what can happen once you get AGI, and we’re close to it. We’re not there yet, but we’re getting closer and closer, quicker and quicker. And we must think about guardrails,” Diller warned.

If humans fail to establish those guardrails, he cautioned, the alternative is grim. “Another force, an AGI force, will do it themselves. And once that happens, once you unleash that, there’s no going back.”

(Source: TechCrunch)