Gemini’s Task Automation: The Future Is Here

Summary

– Google and Samsung announced a new Gemini AI feature for task automation, starting with food delivery and rideshare apps on their newest devices.

– The feature, now in beta, allows Gemini to use apps on a user’s behalf based on simple prompts, such as ordering a ride or food.

– During a test, Gemini ordered an Uber to the airport, first asking for clarification and then stopping for user review before finalizing the request.

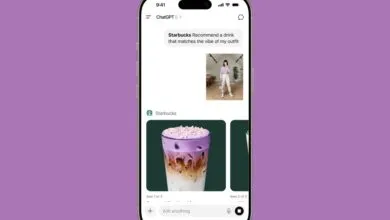

– For a more complex coffee and pastry order, Gemini navigated the Starbucks menu and correctly specified warming the croissant without user input.

– The reviewer found the automation impressive and plans further testing, noting that users can watch, take control, or stop the process at any time.

The arrival of task automation in Google’s Gemini assistant moves smartphone assistants a step closer to hands-free operation. The capability, currently in beta on select devices like the Samsung Galaxy S24 Ultra, lets the AI operate apps in a virtual environment to complete real-world actions. It turns simple voice or text prompts into executed tasks, such as booking a ride or ordering food.

Watching your phone operate apps by itself is an undeniably strange experience. The process begins with a straightforward command. For instance, asking Gemini to order an Uber to the airport triggers a logical sequence. The AI first asks which airport, then navigates the app on its own, inputs the destination, and skips irrelevant steps like specifying an airline. The system also has a built-in checkpoint: it halts before the final confirmation and presents a summary for user review, so nothing is sent without approval. This balance of automation and user oversight is a key safety feature.
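The ride-ordering sequence described above (clarify, fill in, skip optional steps, pause for review) can be illustrated with a minimal sketch. This is not how Gemini is implemented; the `RideRequest` class, the `order_ride` function, and the `ask_user` and `confirm` callbacks are hypothetical names made up for illustration, and "SFO" is just an example answer. The point it shows is the checkpoint: the request is submitted only if the user approves the summary.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class RideRequest:
    """Hypothetical order state; not a real Uber or Gemini data structure."""
    destination: str = ""
    steps: List[str] = field(default_factory=list)
    submitted: bool = False


def order_ride(
    prompt: str,
    ask_user: Callable[[str], str],
    confirm: Callable[[str], bool],
) -> RideRequest:
    """Walk the clarify -> fill in -> review -> submit sequence."""
    request = RideRequest()
    request.steps.append(f"received prompt: {prompt}")

    # 1. Clarify the ambiguous part of the prompt before touching the app.
    request.destination = ask_user("Which airport?")
    request.steps.append("clarified destination")

    # 2. Fill in the required field and skip optional ones (e.g. airline).
    request.steps.append(f"entered destination: {request.destination}")
    request.steps.append("skipped optional airline field")

    # 3. Checkpoint: show a summary and submit only on explicit approval.
    summary = f"Ride to {request.destination}"
    if confirm(summary):
        request.submitted = True
        request.steps.append("submitted")
    else:
        request.steps.append("stopped at review")
    return request


if __name__ == "__main__":
    # Simulated user: answers "SFO" and declines at the review step.
    result = order_ride(
        "Order an Uber to the airport",
        ask_user=lambda question: "SFO",
        confirm=lambda summary: False,
    )
    for step in result.steps:
        print(step)
```

Passing the user interaction in as callbacks keeps the approval step explicit: there is no code path that sets `submitted` without `confirm` returning true.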

The system also handles more nuanced requests, though they take a bit more time and occasional guidance. A test order for a coffee and croissant demonstrated this well. Gemini spent considerable time scrolling through the Starbucks menu to locate a flat white. It then faced a classic café dilemma: should the chocolate croissant be warmed? Without any prompting, it correctly selected the warmed option. This display of contextual understanding is a notable improvement for an assistant that, not long ago, could struggle with basic calendar details.

This functionality moves Gemini from a reactive assistant toward an agent that acts on the user’s behalf. Users can observe each step Gemini takes within a dedicated virtual window and retain full control, with the ability to pause or take over at any moment. The initial supported apps focus on food delivery and rideshares, but the same approach could extend to other daily routines.

Further testing will reveal how the system handles complex, multi-step requests or unexpected errors. However, the initial performance is promising. The feature works as intended, executing specific tasks based on natural language prompts and making sensible decisions along the way. It’s a compelling glimpse into a future where our devices don’t just answer questions, but actively take care of things for us.

(Source: The Verge)