AI Decodes Our Scrambled Inner Thoughts

Summary

– A paralyzed woman’s imagined speech was translated into on-screen text using a brain implant and AI.

– The woman, a stroke survivor, participated in a study with three ALS patients at Stanford University.

– Surgeons placed a small electrode array into the frontal lobe of her brain to capture neural signals.

– An AI-powered computer decoded these signals as she thought about saying words.

– This research represents a significant scientific advance toward real-time thought-to-text translation.

The intricate electrical symphony within the human brain, once considered indecipherable, is now being translated thanks to advances in artificial intelligence. This breakthrough is opening new frontiers in communication, particularly for individuals who have lost the ability to speak. By interpreting neural signals, researchers are developing systems that can convert silent thoughts into readable text, offering a powerful new voice to those trapped by paralysis.

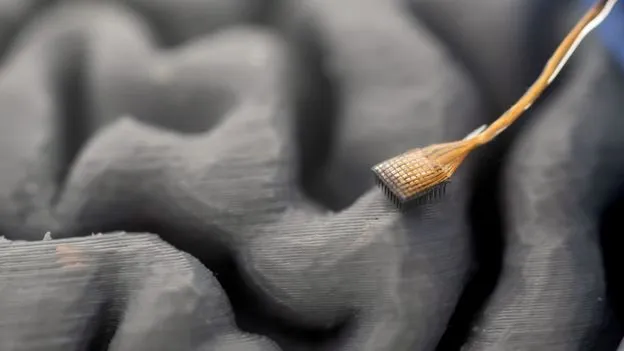

In a landmark study, a participant known as T16 demonstrated this technology’s potential. The 52-year-old woman, who had been paralyzed by a stroke nearly two decades earlier, sat with her eyes fixed on a screen and her hand clenched. Without uttering a sound, she watched words appear in front of her and assemble into the complete sentences she was silently imagining saying. This was made possible by a small array of electrodes surgically implanted in her brain. The sensors picked up the neural activity generated as she imagined speaking, and an AI-powered algorithm decoded these complex patterns, translating the brain’s electrical impulses into text in real time.

The research, conducted at Stanford University, included T16 and three other participants living with amyotrophic lateral sclerosis (ALS), a neurodegenerative condition that progressively robs individuals of muscle control. The core achievement is the system’s ability to decode imagined speech directly from brain activity. Earlier communication aids for paralysis have often depended on painstakingly slow methods, such as tracking eye movements to select letters one at a time. This new approach aims to provide a more natural and rapid channel for expression by tapping directly into the brain’s speech centers.

The AI model was trained to recognize the unique neural signatures associated with phonemes, the distinct units of sound that combine to form words. During training sessions, participants repeatedly read text passages while the system learned to correlate specific brain activity patterns with the corresponding sounds. Over time, the algorithm became adept at predicting which phonemes a person was thinking of, stringing them together into words and sentences. This method represents a significant leap from previous technologies that could only decode a limited set of words or commands.
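The article does not describe the study’s actual model, and real decoders are far more sophisticated. As a rough illustration of the two stages described above (learn a signature per phoneme from labelled training trials, then classify new activity and string the phonemes into words), here is a minimal sketch that swaps in a deliberately simple nearest-centroid classifier and a greedy dictionary lookup. Everything in it is hypothetical: the three-phoneme inventory, the two-word lexicon, the 16 "channels", the noise level, the synthetic signals and all function names.

```python
import math
import random
from statistics import fmean

# Hypothetical toy setup (not from the study): 3 phonemes, 2 words, 16 channels.
PHONEMES = ["HH", "AY", "B"]
LEXICON = {("HH", "AY"): "hi", ("B", "AY"): "bye"}
N_CHANNELS = 16


def make_templates(rng):
    """Assign each phoneme a made-up 'neural signature' across channels."""
    return {p: [rng.gauss(0.0, 1.0) for _ in range(N_CHANNELS)] for p in PHONEMES}


def simulate_trial(template, rng, noise=0.1):
    """One noisy synthetic recording of a phoneme's signature."""
    return [x + rng.gauss(0.0, noise) for x in template]


def fit_centroids(trials):
    """Training: average the feature vectors recorded for each phoneme label."""
    grouped = {}
    for label, features in trials:
        grouped.setdefault(label, []).append(features)
    return {
        label: [fmean(column) for column in zip(*vectors)]
        for label, vectors in grouped.items()
    }


def classify(features, centroids):
    """Predict the phoneme whose centroid is closest in Euclidean distance."""
    return min(centroids, key=lambda p: math.dist(features, centroids[p]))


def phonemes_to_words(phonemes):
    """Greedy longest-match segmentation of a phoneme stream into lexicon words."""
    words, i = [], 0
    max_len = max(len(k) for k in LEXICON)
    while i < len(phonemes):
        for length in range(min(max_len, len(phonemes) - i), 0, -1):
            chunk = tuple(phonemes[i:i + length])
            if chunk in LEXICON:
                words.append(LEXICON[chunk])
                i += length
                break
        else:
            words.append("<?>")  # no lexicon word starts here; skip one phoneme
            i += 1
    return words


def decode(feature_stream, centroids):
    """Classify each time step as a phoneme, then group phonemes into words."""
    predicted = [classify(f, centroids) for f in feature_stream]
    return " ".join(phonemes_to_words(predicted))


if __name__ == "__main__":
    rng = random.Random(0)
    templates = make_templates(rng)
    # "Training": many labelled trials per phoneme, like reading known text.
    training = [(p, simulate_trial(templates[p], rng)) for p in PHONEMES for _ in range(20)]
    centroids = fit_centroids(training)
    # "Inference": an unlabelled stream for the imagined sentence "hi bye".
    stream = [simulate_trial(templates[p], rng) for p in ["HH", "AY", "B", "AY"]]
    print(decode(stream, centroids))
```

Because the synthetic signals are far cleaner than real neural recordings, this toy decoder recovers "hi bye" easily. Real systems must cope with noisy, overlapping activity and very large vocabularies, which is where the errors the researchers describe come from.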

The vocabulary and speed remain limited, but the results are highly promising. The system decoded imagined sentences with notable accuracy, although it still made errors. Researchers emphasize that this is not true “mind reading” of free-flowing private thoughts, but a sophisticated decoding of intended speech. The technology requires focused cooperation from the user, who must deliberately attempt to articulate words mentally for the system to interpret.

The implications for restoring communication are immense. For individuals with conditions like severe paralysis, locked-in syndrome, or advanced ALS, this technology could eventually provide a fluent and efficient way to interact with the world. Future developments aim to expand the vocabulary, increase translation speed, and perhaps one day convert the decoded text into synthetic speech, giving users an audible voice. This research marks a crucial step toward bridging the gap between silent intention and audible expression, powered by the convergence of neuroscience and artificial intelligence.

(Source: BBC)