Gemini vs ChatGPT vs Claude: I tested their video analysis skills

Summary

– Gemini can directly process YouTube URLs, MP4, and MOV files in its web interface, accurately understanding video content even without audio.

– Claude cannot watch or process any video content directly, stating it lacks the ability to handle video or audio streams.

– ChatGPT alone fails to process large videos (over 500MB) or YouTube links, but pairing it with OpenAI’s Codex app enables it to download, transcribe, and analyze videos.

– In tests, both Gemini and ChatGPT/Codex correctly interpreted a silent drone test video, recognizing the hand gestures and camera movement without any audio or context.

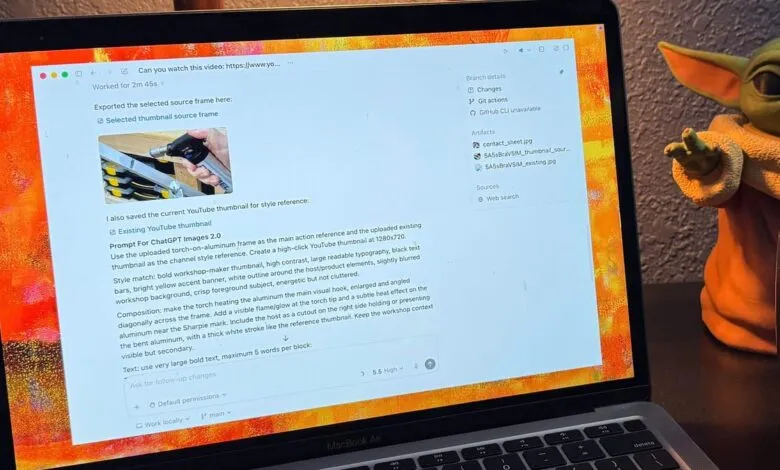

– Gemini can quickly summarize long videos with time-stamped key points, while ChatGPT/Codex can create usable YouTube thumbnails from video frames with iterative prompt refinement.

Seeing is believing, especially when it comes to AI video analysis. I recently put three of the most popular AI assistants (ChatGPT, Claude, and Gemini) through a rigorous test to see how well they can actually “watch” and understand video content. The results reveal a clear hierarchy in AI video processing capabilities, with some models soaring and others falling flat.

To conduct the test, I used three distinct videos. The first was a YouTube video I published about the science of annealing. The second was a silent MP4 file showing me testing hand gestures for the DJI Neo 2 drone, with no audio or context. The third was a large MOV file of a walk-and-talk about my YouTube posting strategy, uploaded from a local file to avoid any metadata hints.

I used the same simple prompt for each AI: “Can you watch this video?” This phrasing proved crucial, as it directed the models to actually process the visual and audio content rather than searching for metadata or transcripts.

Claude was the first to fail. Despite being the most expensive option at $100 per month for the Max plan, it bluntly stated, “I can’t watch video content directly.” It cannot process video or audio streams in any format: YouTube links, MP4s, or MOV files. This is a significant limitation for anyone hoping to use Claude for video analysis.

Gemini, on the other hand, was the star of the show. It effortlessly handled all three formats directly in a web browser, including a massive 1.65GB MOV file. The most impressive demonstration was with the silent drone test video. Without any audio or context, Gemini accurately deduced that I was testing hand gestures to control a drone, noting, “In the video, you’re testing out some hand gestures, raising your palm to the camera as if signaling it to stop or move.” It also understood the annealing video, identifying specific sections and verbal points. The only weakness was in thumbnail creation, where Gemini’s image generation tool, Nano Banana, created a fictional bearded man and misspelled “FIRE” as “FCIRE.”

ChatGPT presented a mixed bag. The core ChatGPT model failed the initial test, unable to read the YouTube link or process files over 500MB. However, the combination of ChatGPT with OpenAI Codex proved powerful. Codex, acting as an agentic tool, could read local files and understand their meaning. For the drone test, it correctly described the scene and actions. When it couldn’t initially process the walk-and-talk MOV, Codex asked permission to install Python libraries for audio transcription, then successfully analyzed the content. For the YouTube link, Codex wrote a Python script to download the video, installed libraries, and watched it locally. The thumbnail creation process required me to act as a conduit between Codex and ChatGPT, but the final result was more accurate than Gemini’s, correctly using my image and color scheme, though it had minor issues with material representation.
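Codex's actual script and the libraries it installed aren't shown here, so the following is only a rough sketch of the kind of local preprocessing an agent might do before analyzing a video file. It assumes the widely used `ffmpeg` command-line tool, and it only builds the commands rather than running them. The function names, output paths, and 5-second sampling interval are all hypothetical choices for illustration.

```python
# Sketch only: assumes ffmpeg is the tool used; the article does not confirm this.

def audio_extract_cmd(video: str, out_wav: str = "audio.wav") -> list[str]:
    """Build an ffmpeg command that drops the video stream (-vn) and writes
    16 kHz mono audio, a common input format for speech-to-text tools."""
    return ["ffmpeg", "-i", video, "-vn", "-ac", "1", "-ar", "16000", out_wav]


def frame_sample_cmd(video: str, every_s: int = 5, out_dir: str = "frames") -> list[str]:
    """Build an ffmpeg command that saves one still frame every `every_s` seconds,
    so a silent clip can be inspected from its frames alone."""
    if every_s <= 0:
        raise ValueError("every_s must be a positive number of seconds")
    return ["ffmpeg", "-i", video, "-vf", f"fps=1/{every_s}", f"{out_dir}/frame_%04d.jpg"]


if __name__ == "__main__":
    # "walk_and_talk.mov" is a placeholder file name, not the author's real file.
    print(" ".join(audio_extract_cmd("walk_and_talk.mov")))
    print(" ".join(frame_sample_cmd("walk_and_talk.mov")))
```

Each list could be handed to `subprocess.run(...)` once `ffmpeg` is installed and the output directory exists. Keeping the commands as lists avoids shell-quoting problems with file names that contain spaces.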

Both Gemini and the ChatGPT/Codex pair demonstrated impressive interpretation skills, processing 15-minute videos in just two to three minutes. Their ability to understand a silent drone test from visual frames alone is remarkable. Practical applications include scanning security camera footage for specific actions, summarizing long videos with time-stamped key points, and creating YouTube thumbnails. While I still prefer manual thumbnail creation, these tools offer creators a new, powerful option.
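To make “time-stamped key points” concrete, here is a minimal sketch of how a list of (seconds, note) pairs could be rendered as a readable summary. The helper names and the placeholder notes are hypothetical; this is not output from Gemini or ChatGPT/Codex, just an illustration of the format.

```python
def format_timestamp(seconds: int) -> str:
    """Render a second count as M:SS, or H:MM:SS once it reaches an hour."""
    hours, remainder = divmod(int(seconds), 3600)
    minutes, secs = divmod(remainder, 60)
    if hours:
        return f"{hours}:{minutes:02d}:{secs:02d}"
    return f"{minutes}:{secs:02d}"


def render_key_points(points: list[tuple[int, str]]) -> str:
    """Sort key points by time and emit one '[timestamp] note' line per point."""
    ordered = sorted(points, key=lambda point: point[0])
    return "\n".join(f"[{format_timestamp(t)}] {note}" for t, note in ordered)


if __name__ == "__main__":
    # Placeholder notes for a hypothetical 15-minute video.
    sample = [(540, "placeholder note C"), (0, "placeholder note A"), (75, "placeholder note B")]
    print(render_key_points(sample))
```

A summary in this shape is easy to paste into a video description, where players such as YouTube typically turn `M:SS` stamps into jump links.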

It’s telling that Gemini, a single tool, can handle video analysis seamlessly, while ChatGPT requires the added complexity of Codex. Claude, despite its failure here, remains valuable for other tasks like vibe coding. The question now is: what productivity gains can you imagine from AI-powered video understanding? Let me know in the comments.

(Source: ZDNet)