Game Dev AI: IP, Regulation, and Reputation Guide

Summary

– AI use in gaming is already widespread in core development, with one in five Steam games in 2025 disclosing its use, driven by the need to manage rising production costs and timelines.

– The technology introduces significant legal and reputational risks, primarily due to unresolved copyright issues from training data and potential community backlash over the perceived use of artists’ work without consent.

– The level of risk varies by application, with internal uses like coding posing less reputational exposure, while generating final in-game assets carries the highest legal and IP infringement dangers.

– Studios using third-party AI tools face accountability for outputs, as they can be held responsible for publishing infringing content, even without direct knowledge of the model’s training data.

– Beyond copyright, future regulatory focus may include rights of publicity for AI-replicated likenesses, safety concerns from dynamic AI behaviors, and ethical scrutiny over AI-driven monetization and player engagement.

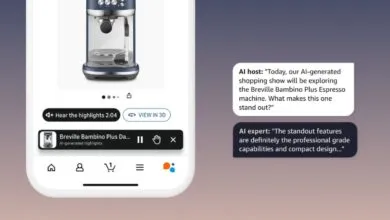

The integration of generative AI into game development is accelerating, driven by the industry’s relentless pursuit of efficiency and scale. Yet this powerful technology arrives with a complex web of legal and reputational challenges that studios must navigate. Community backlash, often rooted in the perception that AI tools were trained on artists’ work without consent, can damage audience trust and impact commercial success almost overnight. While the debate continues, the technology is already deeply embedded in production pipelines, with one in five games released on Steam in 2025 disclosing its use. For studios facing ballooning budgets and content demands, the appeal of tools that promise faster iteration and cost savings is undeniable. However, the strategic adoption of AI requires carefully weighing these benefits against significant new risks, particularly concerning intellectual property and regulatory uncertainty.

Where AI is applied within the development pipeline critically influences its associated risk. Its use is not uniform, and each application carries a distinct level of exposure.

In coding and backend workflows, AI adoption is largely internal and mirrors trends across the broader tech sector. These uses present fewer immediate reputational concerns due to their low visibility. The intellectual property implications remain complex, though they are generally less acute than in later production stages.

During concept art and early ideation, AI can rapidly accelerate brainstorming and visual exploration. Because this work is typically iterative and far from the final product, risks are more manageable, especially when outputs are used for inspiration rather than as finished assets. Studios must still ensure that early AI-assisted designs undergo appropriate review before implementation.

The stakes escalate dramatically when AI generates final production assets, such as character models, textures, voice lines, or environmental art. Utilizing third-party models introduces substantial uncertainty, as studios may lack visibility into the training data, raising the possibility that outputs contain uncleared protected material. A clear principle emerges: the closer AI gets to the player experience, the more vital rigorous internal and legal review becomes.
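One way a studio might write this principle down is as a small policy table that maps each pipeline stage to the reviews it requires. The sketch below is illustrative only: the stage names, exposure tiers, and review labels are hypothetical and are not drawn from any studio's actual process or from the source article.

```python
from dataclasses import dataclass
from enum import IntEnum


class Exposure(IntEnum):
    LOW = 1       # internal work with low visibility, e.g. coding
    MODERATE = 2  # iterative work far from the final product
    HIGH = 3      # content that ships to players


# Hypothetical mapping of the three pipeline stages described above.
STAGE_EXPOSURE = {
    "coding": Exposure.LOW,
    "concept_art": Exposure.MODERATE,
    "final_asset": Exposure.HIGH,
}


@dataclass(frozen=True)
class AiUse:
    stage: str
    third_party_model: bool  # True when training data is not visible to the studio


def required_reviews(use: AiUse) -> list[str]:
    """Return the (hypothetical) review steps for one use of AI."""
    exposure = STAGE_EXPOSURE[use.stage]
    reviews = ["internal"]  # every use gets at least an internal check
    if exposure >= Exposure.MODERATE:
        reviews.append("pre-implementation")  # review before a design is adopted
    if exposure >= Exposure.HIGH:
        reviews.append("legal")
        if use.third_party_model:
            reviews.append("provenance")  # ask what the model was trained on
    return reviews


print(required_reviews(AiUse("final_asset", third_party_model=True)))
```

The tiers only ever add steps as work moves closer to the player, which mirrors the escalation described above. A real policy would likely need finer distinctions, such as separate handling for voice lines.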

Most studios rely on third-party AI tools, which introduces a distinct accountability challenge. Distance from the model means less visibility into its training data and safeguards. If an output infringes on a protected work and that output is published in a game, the studio could face liability as the entity monetizing the content. While early litigation has focused on AI providers, game developers should not assume immunity from downstream lawsuits. Some studios may fine-tune models or build proprietary systems, which shifts compliance obligations onto the studio and can introduce additional governance costs as regulations evolve.

At the heart of the legal uncertainty is copyright law, to which generative AI adds new scale and opacity. Courts are currently divided on whether training AI on copyrighted works constitutes fair use, and a definitive resolution is years away. Studios must also consider that community acceptance may not align with legal rulings. Furthermore, copyright concerns cut both ways. The U.S. Copyright Office has reaffirmed that works generated entirely by AI are not protected, which challenges an industry built on owning creative IP. Recent registrations suggest that demonstrating meaningful human control through selection, revision, and creative direction may support limited copyright protection even when AI is used. Ultimately, using AI as a shortcut around IP fundamentals exposes studios to both infringement claims and uncertain protection for their own outputs.

Looking ahead, regulatory attention is likely to expand beyond copyright. One emerging flashpoint is AI and likeness rights, where tools that replicate voices or appearances raise difficult questions about obtaining proper consents. Publicity rights frameworks will become increasingly relevant as the line between authorized use and digital replication blurs.

A related risk stems from dynamic AI-driven dialogue and behaviors in game environments. As players engage in more open-ended interactions with AI characters, regulators may focus on safety, moderation, and potential misuse, especially where studios cannot fully control the output.

A third area for potential scrutiny is AI-driven engagement and monetization in live-service games. If AI personalizes offers or progression to individual player behavior with unprecedented precision, regulators may view these systems as catalysts for addiction or financial exploitation, particularly concerning younger audiences. Studios developing proprietary models must also navigate varying international compliance obligations, which may demand training data transparency.

While legal frameworks develop, the most immediate deterrent may be reputational risk. The gaming community highly values human creativity, and public perception is a core part of the business calculus. This reality is increasingly formalized through “soft regulation,” such as platform disclosure requirements, evolving industry standards, and AI clauses in labour agreements.

The economic pressures driving AI adoption are undeniable, and these tools will become more deeply entwined with game development. Success will belong to studios that integrate AI deliberately, with clear governance and a proactive understanding of both legal and reputational exposure. Executives must define where AI is permitted or prohibited in their pipelines and how to balance efficiency with creative integrity. Waiting for a crisis to answer these questions is a risk no studio can afford.

(Source: GamesIndustry.biz)