Instagram’s AI Problem: Why Its Boss Is Missing the Point

Summary

– Instagram head Adam Mosseri warns that AI will soon flood the platform, urging creators to stand out by being authentic and original.

– The author argues Instagram is already filled with formulaic, inauthentic content created by humans to please the algorithm, not just by AI.

– Mosseri suggests labeling real content is more feasible than watermarking AI content, and notes that AI will get better at mimicking the lo-fi visuals associated with authenticity.

– The core problem is that the algorithm rewards repetitive, engagement-driven content, making human creators act like predictable robots.

– The author concludes that without new incentives for truly original work, Instagram will continue to be dominated by inauthentic content, whether human or AI-made.

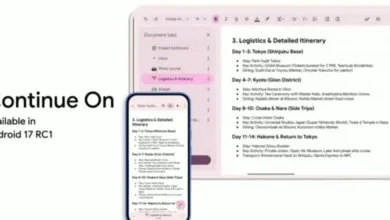

Instagram’s leader recently outlined a significant challenge facing the platform: the rise of AI-generated content. Adam Mosseri’s public message framed this as a pivotal moment, urging creators to double down on authenticity as the key differentiator in a sea of synthetic media. He argues that genuine human connection and original voices will become the ultimate currency, standing in stark contrast to the easily produced, “inauthentic” content that AI tools can now generate. While his concern is valid, it overlooks a more fundamental issue already plaguing the user experience.

The core problem isn’t a future flooded with AI images; it’s a present dominated by formulaic, algorithm-optimized content created by people. Creators have learned what the algorithm rewards, and they produce more of it, leading to a homogenized feed where human-made posts often feel robotic and predictable. This environment, where success hinges on repeating proven formats and strategies, has already made much of Instagram’s content feel inauthentic. Mosseri’s distinction between “authentic” human content and “inauthentic” AI content is therefore misleading. The platform’s own mechanics incentivize inauthenticity every day.

Mosseri makes several astute technical observations. He notes that labeling real content may prove more feasible than watermarking everything AI-made, a rationale behind initiatives like Google’s content credentials in Pixel cameras. He also correctly predicts that AI will master the “lo-fi” aesthetic often associated with authenticity. The threat to Instagram’s ecosystem is real, even if the timeline is debatable. However, his central premise, that authenticity shields human creators from AI, ignores how the platform operates.

The algorithmic feed doesn’t prioritize thought-provoking originality; it prioritizes engagement. This system has effectively turned many creators into content robots, reposting successful material, mimicking trending styles, and chasing viral formulas. This predictable, human-made content is ironically the most vulnerable to AI replacement. AI excels at making predictions based on training data, and it can readily replicate the repetitive, pattern-based content that already fills our feeds. Mosseri’s worry is justified, but it’s focused on the wrong source of the problem.

Personal experience reflects this. Scrolling often reveals recycled videos or jokes, reposted months later to catch a new algorithmic wave. A comedian retrying a punchline on Threads and a creator reposting a viral clip are both playing by the same rules: act predictably to please the system. This is the paradox: even those posting “authentic” moments must often employ robotic strategies to be seen.

Mosseri seems aware of this tension, admitting that “flattering imagery is cheap to produce and boring to consume.” Yet, Instagram’s fundamental goal, to show fresh content and maximize scrolling time, inevitably favors quantity. Producing content that consistently “feels real” is expensive, time-consuming, and unsustainable for creators pressured to be full-time content businesses. Without a brilliant new way to incentivize real creators, the platform will continue to be flooded with inauthentic material, regardless of whether a human or an algorithm pressed the “post” button. The solution requires rethinking the reward system itself, not just preparing for a new type of content producer.

(Source: The Verge)