Anthropic’s $30B Run Rate Fueled by Google, Broadcom Deal

Summary

– Anthropic has secured access to approximately 3.5 gigawatts of next-generation Google TPU compute capacity via Broadcom, starting in 2027, marking its largest infrastructure commitment.

– The company disclosed its revenue run rate now exceeds $30 billion, a more than threefold increase from roughly $9 billion at the end of 2025, driven by rapid enterprise adoption.

– Broadcom acts as a critical intermediary, designing and supplying future Google TPU chips and components; Mizuho analysts project its AI revenue from Anthropic could reach $42 billion in 2027.

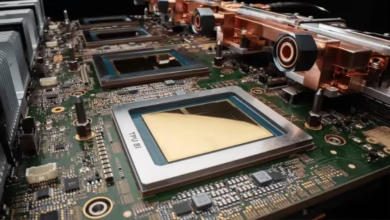

– Anthropic employs a multi-vendor chip strategy, training and serving its Claude model across Amazon Trainium, Google TPU, and Nvidia GPU platforms for resilience and leverage.

– This compute expansion extends Anthropic’s November 2025 pledge to invest $50 billion in U.S. AI infrastructure, aligning with strategic domestic capacity priorities.

Anthropic has revealed a massive new infrastructure agreement alongside financial figures that demonstrate its explosive growth. The AI company has secured a deal for roughly 3.5 gigawatts of next-generation Google TPU compute capacity through Broadcom, starting in 2027. This commitment, its largest to date, arrives as the firm discloses its revenue run rate now exceeds $30 billion, a more than threefold increase from the approximately $9 billion reported at the end of 2025.

The arrangement, announced on April 6, 2026, significantly expands upon the one gigawatt of capacity Google already supplies to Anthropic this year. Chief Financial Officer Krishna Rao called it the company’s most significant compute commitment, reflecting a disciplined strategy for scaling infrastructure. Most of this new capacity will be located within the United States, extending Anthropic’s November 2025 pledge to invest $50 billion in American AI computing infrastructure.

This announcement highlights a critical three-party dynamic. Broadcom serves as the essential intermediary, sitting between Google’s custom silicon and Anthropic’s AI workloads. In a related move, Broadcom has also signed a long-term pact with Google to design and supply future TPU generations, plus a supply assurance agreement for networking components in Google’s AI data racks through 2031. This cements Broadcom’s role as a foundational node in the AI infrastructure graph, building the underlying silicon and interconnects rather than the models themselves. Following the news, Broadcom’s shares rose about 3% in extended trading. Analysts from Mizuho projected the chipmaker would see $21 billion in AI revenue from Anthropic in 2026, potentially rising to $42 billion in 2027.

The scale of this infrastructure push is directly fueled by Anthropic’s commercial momentum. The jump to a $30 billion revenue run rate in just a few months stems from a rapidly compounding enterprise sales motion. This acceleration followed the close of Anthropic’s Series G funding round on February 12, 2026, which raised $30 billion at a $380 billion post-money valuation. When that round closed, the company had over 500 business customers each spending more than $1 million annually. By April, that number had doubled to exceed 1,000 major clients. This rapid enterprise adoption creates a self-reinforcing cycle where more revenue demands more inference capacity, which in turn requires greater training compute, measured ultimately in gigawatts.
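The growth multiples quoted above can be checked with simple arithmetic. The sketch below uses only the figures reported in this article; the function name `growth_multiple` is illustrative, and because the inputs are approximations or bounds ("roughly $9 billion", "exceeds $30 billion", "over 500", "exceed 1,000"), the results are indicative rather than exact.

```python
def growth_multiple(start: float, end: float) -> float:
    """Return end / start, i.e. how many times larger the end value is."""
    if start <= 0:
        raise ValueError("start must be positive")
    return end / start


# Figures as reported in the article (approximate values or bounds).
run_rate_multiple = growth_multiple(9e9, 30e9)   # ~$9B run rate -> ~$30B run rate
customer_multiple = growth_multiple(500, 1000)   # $1M+ customers, February -> April

print(f"Run-rate multiple: {run_rate_multiple:.2f}x")
print(f"$1M+ customer multiple: {customer_multiple:.1f}x")
```

With these inputs the run-rate multiple comes out at about 3.33, consistent with the "more than threefold" description, and the count of customers spending more than $1 million annually is 2.0 times the February figure.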

A key element of Anthropic’s strategy is its multi-vendor chip architecture. Claude is trained and served across three hardware platforms: Amazon’s Trainium chips, Google’s TPUs, and Nvidia GPUs. The company states that Claude is the only frontier model available on all three major clouds: AWS, Google Cloud, and Microsoft Azure. This approach provides resilience and negotiating leverage, allowing Anthropic to shift workloads if one platform faces constraints or disruptions. While the new Google-Broadcom deal is substantial, it complements rather than replaces Anthropic’s foundational partnership with Amazon, which includes a total investment of $8 billion and Project Rainier, a supercomputer cluster in Indiana scaling beyond one million Trainium 2 chips.

The latest agreement is framed as an extension of Anthropic’s domestic infrastructure pledge. The majority of the new Broadcom capacity will be U.S.-based, expanding the footprint of its $50 billion commitment well into 2027. This focus aligns with strategic priorities outlined in national AI policy, effectively anchoring a significant portion of next-generation AI training capacity within American geography.

This deal is a telling data point in the broader compute arms race. AI labs have grown so rapidly that their infrastructure needs now surpass what can be funded from revenue alone, necessitating financial engineering on a utility scale. This dynamic is also reshaping how companies manage access to their models, as seen in Anthropic’s recent moves to restrict certain third-party framework integrations due to the high costs of frontier model inference.

For Broadcom, the trajectory is clear. A company not widely discussed in AI circles two years ago has become a load-bearing element for the infrastructure supporting two of the world’s most consequential models, Google’s Gemini and Anthropic’s Claude. Its position, secured through 2031 for Google’s custom silicon and via the new multi-gigawatt deal for Anthropic, represents one of the defining shifts in the semiconductor industry this decade. While Nvidia maintains dominance in accelerators, Broadcom’s rise as the custom silicon partner of choice for hyperscale AI compute marks a fundamental change in the landscape.

(Source: The Next Web)