Master AI Search: Your Enterprise Visibility Blueprint

Summary

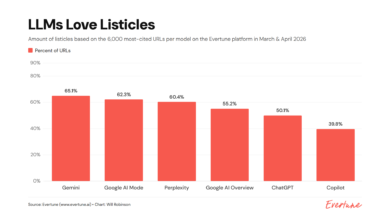

– Generative AI and LLMs are disrupting traditional search by providing direct answers, reducing website clicks and shifting the core goal for brands to becoming a source that AI cites.

– Unlike traditional deterministic search, AI search is probabilistic and interprets meaning. This introduces a “comprehension budget”: AI systems weigh the computing cost of understanding a piece of content before using it.

– To be discovered and cited by AI, content requires a strong technical foundation, helpfulness, entity optimization through structured data, and established authority.

– A content knowledge graph, built using deep nested schema to map entity relationships, is essential for accurately telling AI systems about a business and its offerings.

– Enterprises must automate schema management and adopt new KPIs like brand visibility in AI results to prepare for the agentic web, where AI agents will execute tasks on behalf of users.

The digital landscape is undergoing a profound transformation, moving beyond simple keyword queries to a world where artificial intelligence synthesizes answers. This “search everywhere” revolution, powered by generative AI and large language models, is redefining how consumers find information and how brands achieve visibility. The old model of trading content for website clicks is eroding, replaced by AI systems that provide direct answers. For businesses, the critical new objective is clear: become the authoritative source that these AI systems choose to reference and cite.

This fundamental shift requires a deep understanding of how AI search differs from its traditional counterpart. Legacy search operated on deterministic, rule-based logic. AI search is probabilistic, generating responses based on learned patterns and statistical likelihoods, which means outputs can vary. It interprets the meaning and relationships within content, not just keyword matches. This process introduces a crucial concept: the comprehension budget. AI models consume significant computational resources to understand text. Content that is poorly structured or ambiguous may simply be too costly for the system to process, leading to exclusion from results.

To ensure discovery and citation by AI, enterprises must build upon four foundational pillars. First, a robust technical foundation is non-negotiable. Sites must be crawlable and should leverage protocols like IndexNow to efficiently signal new content, respecting the AI’s limited comprehension budget. Second, content must be genuinely helpful and aligned with audience intent. Third, and most critically, entity optimization through structured data and schema markup is essential. This creates a clear, machine-readable map of your brand, products, and their relationships. Finally, establishing topical and local authority remains paramount, as AI systems prioritize trustworthy sources.
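
As a rough illustration of the first pillar, the sketch below builds the JSON body that the IndexNow protocol expects for a bulk URL submission (`host`, `key`, `urlList`, and an optional `keyLocation`). The site, key value, and helper name `build_indexnow_payload` are hypothetical, and the block only constructs the payload. Actually sending it would mean an HTTP POST to an IndexNow endpoint with a key file hosted on your own domain.

```python
import json
from urllib.parse import urlparse

# Shared IndexNow endpoint; participating search engines also expose their own.
INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_payload(urls, key, key_location=None):
    """Build the JSON body for an IndexNow bulk submission (not sent here)."""
    if not urls:
        raise ValueError("at least one URL is required")
    hosts = {urlparse(u).netloc for u in urls}
    if len(hosts) != 1:
        raise ValueError("all URLs in one submission must share a host")
    payload = {"host": hosts.pop(), "key": key, "urlList": list(urls)}
    if key_location:
        # Only needed when the key file is not at the site root.
        payload["keyLocation"] = key_location
    return payload


# Hypothetical URLs and key, for illustration only.
payload = build_indexnow_payload(
    [
        "https://www.example.com/products/widget-pro",
        "https://www.example.com/pricing",
    ],
    key="0123456789abcdef0123456789abcdef",
)
print(json.dumps(payload, indent=2))
```

Batching changed URLs into one submission per host keeps the signal small and explicit, which fits the idea of not wasting a crawler's attention.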

A common misconception is that schema markup is ineffective. The issue is rarely the technology itself, but its implementation. Basic, flat schema tags create disconnected data points. The solution is to construct a content knowledge graph. This is a structured semantic layer that tells the complete story of your business through deeply nested schema. It defines the lineage from your Organization to your Brand, to specific Products and Offers, all in a way AI can effortlessly comprehend. This precise modeling makes your content a reliable, low-cost source for AI to cite.
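
A minimal sketch of what such a lineage might look like as JSON-LD, built here in Python. The Schema.org types and properties (`Organization`, `Brand`, `Product`, `Offer`, `brand`, `manufacturer`, `offers`, `seller`) are real, but the company, product, URLs, and price are invented for illustration. The point is that every node carries an `@id` and refers to related nodes by that `@id`, so the entities form one connected graph instead of isolated tags.

```python
import json

BASE = "https://www.example.com"  # hypothetical site


def node_ref(node_id):
    """Reference another node in the graph by its @id."""
    return {"@id": node_id}


organization = {
    "@type": "Organization",
    "@id": f"{BASE}/#organization",
    "name": "Example Corp",
    "url": BASE,
    "brand": node_ref(f"{BASE}/#brand"),
}

brand = {
    "@type": "Brand",
    "@id": f"{BASE}/#brand",
    "name": "Example Widgets",
}

product = {
    "@type": "Product",
    "@id": f"{BASE}/products/widget-pro#product",
    "name": "Widget Pro",
    "brand": node_ref(brand["@id"]),
    "manufacturer": node_ref(organization["@id"]),
    # The Offer is nested inside the Product and points back to the seller.
    "offers": {
        "@type": "Offer",
        "price": "49.00",
        "priceCurrency": "USD",
        "availability": "https://schema.org/InStock",
        "seller": node_ref(organization["@id"]),
    },
}

graph = {"@context": "https://schema.org", "@graph": [organization, brand, product]}
print(json.dumps(graph, indent=2))
```

Compared with three unrelated flat tags, this version states explicitly who makes the product, which brand it belongs to, and who sells it at what price.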

For large organizations, deploying this at scale demands an enterprise entity optimization playbook. Manual management is unsustainable. Facts like pricing, availability, and product specs change constantly. Automation is the only scalable approach to maintain accuracy and prevent schema drift, which can erode AI trust. Success measurement also evolves. Key performance indicators must shift from keyword rankings to metrics like brand visibility in AI outputs, sentiment when cited, and performance on non-branded conversational queries.
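
One way to picture automated drift detection is a small check that compares the facts held in a system of record against what the deployed markup currently says. The sketch below assumes both sides are available as plain dictionaries. The function name `find_schema_drift` and the sample values are hypothetical, and a real pipeline would pull these from a product database and from the rendered pages.

```python
def find_schema_drift(source_facts, deployed_offer):
    """Return {field: (expected, deployed)} for every field that disagrees."""
    drift = {}
    for field, expected in source_facts.items():
        deployed = deployed_offer.get(field)
        if deployed != expected:
            drift[field] = (expected, deployed)
    return drift


# Hypothetical system-of-record values for one product.
source_facts = {
    "price": "44.00",
    "priceCurrency": "USD",
    "availability": "https://schema.org/InStock",
}

# Hypothetical Offer markup currently live on the page (price is stale).
deployed_offer = {
    "@type": "Offer",
    "price": "49.00",
    "priceCurrency": "USD",
    "availability": "https://schema.org/InStock",
}

drift = find_schema_drift(source_facts, deployed_offer)
for field, (expected, deployed) in drift.items():
    print(f"{field}: source says {expected!r}, page says {deployed!r}")
```

Run on a schedule or on every catalog change, a check like this turns drift from a silent accuracy problem into an alert that can trigger redeployment.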

Looking ahead, the web is transitioning from a passive library to an active utility, the “agentic web.” AI agents will not just read information but act on it, booking services or making purchases. To participate, brands must make their capabilities machine-callable. This involves using Schema.org action types such as `ReserveAction` or `BuyAction` within your structured data, declaring clear rules for engagement so software agents can interact with your services directly.
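
To make this concrete, here is a hedged sketch of attaching a `ReserveAction` to an entity through Schema.org's `potentialAction` property, with an `EntryPoint` target describing where the action can be invoked. The types and properties are from Schema.org, while the business, the URL template, and the helper name `with_reserve_action` are assumptions made up for the example. How any particular AI agent consumes such markup is not specified by the source.

```python
import json


def with_reserve_action(entity, url_template):
    """Return a copy of a JSON-LD entity that advertises a ReserveAction."""
    enriched = dict(entity)
    enriched["potentialAction"] = {
        "@type": "ReserveAction",
        "target": {
            "@type": "EntryPoint",
            "urlTemplate": url_template,
            "httpMethod": "GET",
            "actionPlatform": [
                "https://schema.org/DesktopWebPlatform",
                "https://schema.org/MobileWebPlatform",
            ],
        },
        # What the agent can expect to receive when the action succeeds.
        "result": {"@type": "Reservation", "name": "Service appointment"},
    }
    return enriched


# Hypothetical business and booking URL.
business = {
    "@context": "https://schema.org",
    "@type": "LocalBusiness",
    "@id": "https://www.example.com/#business",
    "name": "Example Service Center",
}

bookable = with_reserve_action(
    business, "https://www.example.com/book?service={service_id}"
)
print(json.dumps(bookable, indent=2))
```

The helper copies the entity instead of mutating it, so the same base record can be published with or without actions depending on the page.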

Preparing for this future requires a strategic checklist. Enterprises must audit core entities, ensure schema reflects a deep business ontology, and use `sameAs` linking to external knowledge bases. Technical SEO fundamentals must support the entity strategy, and schema should be synchronized as a single source of truth across all systems.
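
Parts of this checklist lend themselves to a simple automated audit. The sketch below checks a JSON-LD node for a stable `@id`, a name, and at least one `sameAs` link. The rules, the function name `audit_entity`, and the sample data (including the placeholder Wikidata URL) are illustrative assumptions, not a complete or official checklist.

```python
def audit_entity(node):
    """Return a list of checklist gaps found in one JSON-LD node."""
    issues = []
    if not node.get("@id"):
        issues.append("missing @id (entity cannot be referenced elsewhere)")
    if not node.get("name"):
        issues.append("missing name")
    same_as = node.get("sameAs", [])
    if isinstance(same_as, str):
        same_as = [same_as]  # sameAs may be a single URL or a list
    if not same_as:
        issues.append("no sameAs links to external knowledge bases")
    return issues


# Hypothetical entities; the Wikidata URL is a placeholder, not a real item.
entities = [
    {
        "@type": "Organization",
        "@id": "https://www.example.com/#organization",
        "name": "Example Corp",
        "sameAs": ["https://www.wikidata.org/wiki/Q0000000"],
    },
    {"@type": "Brand", "name": "Example Widgets"},
]

report = {e.get("name", "<unnamed>"): audit_entity(e) for e in entities}
for name, issues in report.items():
    print(name, "->", issues or "ok")
```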

Ultimately, this is about more than technical SEO. It demands a holistic view of customer journeys and total cost of ownership. Treating schema as a disconnected tactic creates fragility. Integrating structured data directly into your core brand and entity strategy breaks down silos. When content changes, the entity lineage can be dynamically revalidated and redeployed, ensuring faster recovery and lower operational overhead. The blueprint for AI readiness is built on centralized, reliable data, connected customer journeys enabled by a composable architecture, and a semantic content layer ready for both machines and the emerging agentic web.

(Source: Search Engine Land)