AI Tools Expose Anonymous Online Identities

Summary

– Researchers have built an AI system that can deanonymize online accounts by analyzing writing patterns and personal details in text, far outperforming traditional methods.

– Tested on public datasets, the system correctly linked up to 68 percent of anonymized accounts at 90 percent precision, and performed best when more structured information was available.

– Researchers warn this automated, low-cost technology lowers the barrier for unmasking pseudonyms, posing risks to activists and enabling targeted scams.

– Experts caution that the findings come from controlled experiments and that privacy is not dead: the algorithms still fall short of what human investigators can do, and secure tools such as Signal remain effective.

– The study’s authors advise users to be cautious about posting identifiable information and call for AI labs and platforms to implement safeguards against deanonymization.

The assumption that separate online accounts can keep your identity hidden is facing a serious new challenge. Recent research demonstrates that artificial intelligence systems can now effectively link pseudonymous profiles to real individuals by analyzing writing patterns and scattered personal details. While not yet foolproof, this automated capability significantly lowers the barrier for mass-scale deanonymization, raising urgent questions about privacy in the digital age.

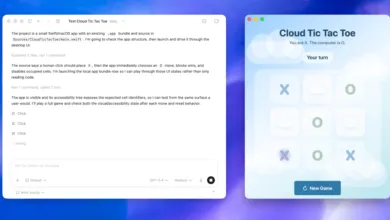

A collaborative study from researchers at ETH Zurich, Anthropic, and the ML Alignment & Theory Scholars (MATS) program tested an automated system of AI agents. The system was designed to scour text for clues, such as unique phrasing, biographical hints, or posting habits, and then cross-reference these traits across vast datasets to find matching profiles. In controlled experiments using public data from platforms like Reddit, Hacker News, and LinkedIn, the AI-based approach correctly identified up to 68 percent of matching accounts at 90 percent precision, meaning roughly nine in ten of the matches it reported were correct. Traditional computational methods, by comparison, identified almost none.
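The headline figure pairs two measurements: the share of true matches the system found (recall) and the share of its reported matches that were right (precision). The Python sketch below shows one way such a "recall at a fixed precision" number could be computed, assuming each proposed match comes with a confidence score. The function name, the scores, and the toy data are hypothetical illustrations, not details from the study.

```python
def recall_at_precision(scored_matches, total_true_pairs, min_precision=0.9):
    """Best recall achievable while keeping precision at or above min_precision.

    scored_matches: list of (confidence, is_correct) tuples, one per proposed match.
    total_true_pairs: number of account pairs that really belong together.
    Both inputs are assumptions made for this illustration.
    """
    # Accept matches from most to least confident.
    ranked = sorted(scored_matches, key=lambda m: m[0], reverse=True)
    best_recall = 0.0
    correct = 0
    for accepted, (_, is_correct) in enumerate(ranked, start=1):
        correct += int(is_correct)
        precision = correct / accepted
        if precision >= min_precision:
            best_recall = max(best_recall, correct / total_true_pairs)
    return best_recall


# Toy example: four true pairs exist, and the system proposes four matches.
toy_matches = [(0.9, True), (0.8, True), (0.7, False), (0.6, True)]
print(recall_at_precision(toy_matches, total_true_pairs=4, min_precision=0.9))
```

In this toy case, only the two most confident matches can be accepted before precision falls below the threshold, so half of the true pairs are recovered. Loosening the precision requirement lets more matches through at the cost of more wrong links.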

The system’s effectiveness varied with the amount of available information. When analyzing Reddit users discussing movies, the AI linked accounts that mentioned just one film only about 3 percent of the time. For users who mentioned ten or more films, the success rate jumped to nearly 50 percent. In another test using survey responses from scientists, the system built profiles from clues in the answers and then searched the web, identifying nine of 125 respondents (about 7 percent). It pinpointed individuals by combining hints, such as references to a “supervisor” suggesting a PhD student, the use of British English, and mentions of a shift from physical sciences to biology research.
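Why more clues help so much can be seen in a simple filtering exercise: each hint on its own fits many people, but the set of people who fit all of them shrinks quickly. The Python sketch below is only an illustration of that narrowing effect. The candidate records, field names, and exact-match filtering are invented for this example; the researchers' system relied on AI agents and web search, not a lookup table like this.

```python
# Hypothetical candidate pool; every record and field name is made up.
candidates = [
    {"name": "A", "career_stage": "phd_student", "spelling": "british", "field_shift": "physical_to_biology"},
    {"name": "B", "career_stage": "phd_student", "spelling": "british", "field_shift": "none"},
    {"name": "C", "career_stage": "phd_student", "spelling": "american", "field_shift": "physical_to_biology"},
    {"name": "D", "career_stage": "professor", "spelling": "british", "field_shift": "physical_to_biology"},
    {"name": "E", "career_stage": "postdoc", "spelling": "american", "field_shift": "none"},
]

# Hints of the kind described in the article, encoded as exact attribute values.
hints = {
    "career_stage": "phd_student",         # mentions a "supervisor"
    "spelling": "british",                 # writes in British English
    "field_shift": "physical_to_biology",  # moved from physical sciences to biology
}


def narrow(pool, hints):
    """Apply each hint in turn and record how many candidates remain."""
    remaining = list(pool)
    sizes = [len(remaining)]
    for key, value in hints.items():
        remaining = [c for c in remaining if c.get(key) == value]
        sizes.append(len(remaining))
    return remaining, sizes


pool, sizes = narrow(candidates, hints)
print(sizes)                      # pool size after each successive hint
print([c["name"] for c in pool])  # candidates consistent with every hint
```

No single hint identifies anyone here, yet together the three leave one candidate. The same logic explains why accounts that reveal ten films are far easier to link than accounts that reveal one.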

What makes this development particularly concerning is its automation, scale, and low cost. Previously, such detective work required a dedicated human investigator. “Every single thing the LLM found in principle could be found by a human investigator,” noted Daniel Paleka, a researcher at ETH Zurich and study co-author. The critical shift is that AI can now perform this analysis across millions of profiles rapidly and cheaply. The entire experiment reportedly cost less than $2,000, or between one and four dollars per profile analyzed. This democratizes a powerful surveillance capability, potentially putting journalists, activists, or anyone using a pseudonym at greater risk.

Experts caution against interpreting these findings as a death knell for all online privacy. The experiments were conducted under laboratory conditions with curated datasets. “While these algorithms are improving, they remain far from what humans can do,” said Luc Rocher, an associate professor at the Oxford Internet Institute. He pointed out that high-profile anonymous figures like Bitcoin’s creator Satoshi Nakamoto remain unidentified, and that secure communication tools like Signal continue to protect users effectively.

The researchers themselves took ethical precautions, avoiding tests on real pseudonymous users and withholding full technical details of their system to prevent misuse. They emphasize that basic privacy hygiene remains powerful: keeping accounts rigorously separate, minimizing shared personal details, and avoiding identifiable patterns like consistent posting times.

Ultimately, the responsibility extends beyond individual users. Co-author Simon Lermen argues that AI developers should implement safeguards to prevent their models from being used for deanonymization, and social media platforms must curb the mass data scraping that enables these techniques. For now, a carefully guarded secret identity may still hold, but a casually used pseudonym for venting online has never been more vulnerable.

(Source: The Verge)