AI Models Fail at Soccer Betting, Especially xAI Grok

Summary

– A study by General Reasoning found that AI models from Google, OpenAI, and Anthropic lost money betting on a simulated Premier League season.

– The research highlights a gap between AI’s strong performance in tasks like coding and its difficulty with long-term, real-world analysis.

– The test involved eight AI systems using historical data to build betting models and place wagers on match outcomes and goals.

– The AIs were evaluated on their ability to adapt to new events and updated data across the season without internet access.

– While most models lost money, performance varied: Claude Opus lost 11% on average, and Gemini Pro posted a 34% profit on one attempt but went bankrupt on another.

A recent experiment reveals that even the most sophisticated artificial intelligence models struggle with the complex, dynamic task of sports betting. When tasked with predicting outcomes across an entire Premier League season, leading AI systems from major tech firms consistently lost money, exposing a significant gap between their prowess in structured tasks and their ability to navigate real-world uncertainty over time.

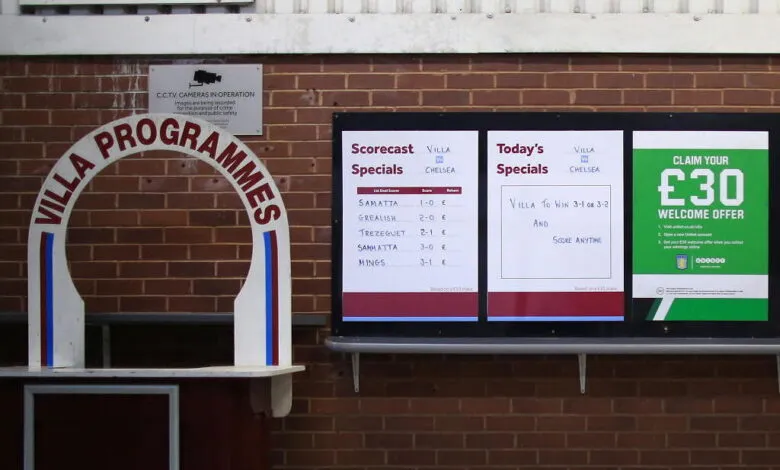

The study, conducted by London-based AI startup General Reasoning, simulated the 2023–24 soccer season for eight top AI agents. Each system was provided with extensive historical data on teams, players, and past matches. Their objective was to build predictive models that could maximize financial returns while managing risk, placing bets on match results and total goals as the virtual season unfolded with new events and updated statistics.

None of the models had internet access, so they could not cheat by looking up results, and each was given three separate attempts to turn a profit. The outcomes were largely disappointing, underscoring the challenge of long-term probabilistic reasoning in a fluid environment.

Anthropic’s Claude Opus performed the best among the group, though it still posted an average loss of 11 percent. It came closest to breaking even on one of its three attempts. In contrast, xAI’s Grok model fared the worst, going completely bankrupt in one trial and failing to complete the other two runs. The results were mixed for Google’s Gemini, which managed an impressive 34 percent profit in one instance but also went bankrupt in another attempt, highlighting inconsistent performance.

This “KellyBench” report illustrates a critical limitation in current AI. While these systems excel in bounded domains like code generation, they show a pronounced shortfall in adaptive real-world analysis. Predicting sports outcomes requires synthesizing incomplete data, adjusting to unforeseen variables like player injuries, and managing risk over an extended series of events, a combination that proved difficult for even the most advanced algorithms.
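The name "KellyBench" appears to nod to the Kelly criterion, a standard formula for deciding how much of a bankroll to stake on a bet. The article does not say how the benchmark scores agents or whether any agent used this formula, so the sketch below is only an illustration of the kind of risk management the task calls for. The function name `kelly_fraction` and the probability and odds in the example are hypothetical.

```python
def kelly_fraction(win_probability: float, decimal_odds: float) -> float:
    """Return the fraction of bankroll to stake under the Kelly criterion.

    win_probability: the bettor's own estimate that the bet wins (0 to 1).
    decimal_odds: bookmaker odds in decimal form (total payout per unit staked).
    Returns 0.0 when the bet has no positive expected value.
    """
    if not 0.0 <= win_probability <= 1.0:
        raise ValueError("win_probability must be in [0, 1]")
    if decimal_odds <= 1.0:
        raise ValueError("decimal_odds must be greater than 1")

    net_odds = decimal_odds - 1.0                # profit per unit staked on a win
    edge = win_probability * decimal_odds - 1.0  # expected profit per unit staked
    return max(0.0, edge / net_odds)             # never stake on a negative edge


# Hypothetical example: a model rates a home win at 55% and the odds are 2.10.
stake = kelly_fraction(0.55, 2.10)
print(f"Stake {stake:.1%} of bankroll")
```

With these made-up inputs the formula suggests staking roughly 14 percent of the bankroll. If the estimated probability is too optimistic, the same formula overbets, which is one way a bettor can lose steadily or go bankrupt over a long season.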

(Source: Ars Technica)