Google: Don’t Trust SEO Audit Tool Scores

Summary

– Google advises against relying on automated tool scores in technical SEO audits, because those scores lack site-specific context.

– Martin Splitt recommends a three-step audit framework: identify issues with tools, create tailored reports, and prioritize recommendations based on site needs.

– High 404 error counts are normal after content removal but require investigation if they rise unexpectedly without site changes.

– Human judgment is essential to interpret findings, because tools may flag irrelevant issues or miss critical ones depending on the site’s technology.

– Technical SEO audits should focus on actual crawl and indexing barriers, using expertise to adapt guidelines rather than following generic checklists.

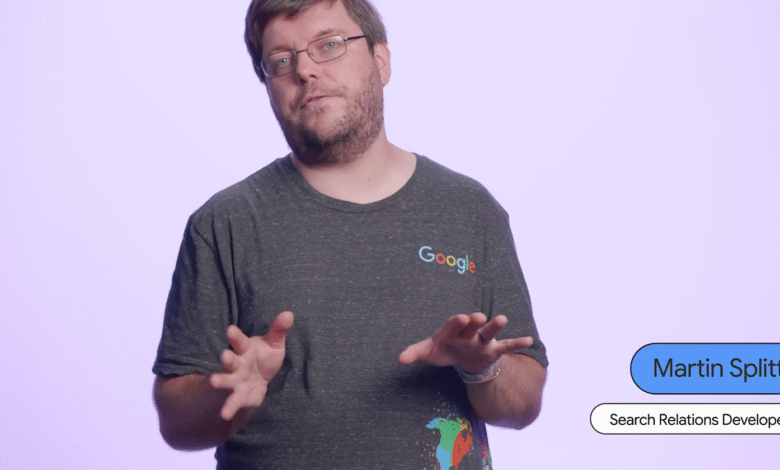

Google has issued a clear warning for website owners and SEO professionals: placing blind faith in automated SEO audit tool scores can be misleading and counterproductive. Instead of relying on generic numerical ratings, a more effective approach involves applying human expertise to understand the unique context of each website. This perspective was recently emphasized by Martin Splitt of Google’s Search Relations team during a Search Central Lightning Talk, where he introduced a practical, three-step framework for conducting meaningful technical audits.

Splitt defined the primary goal of any technical SEO audit: to ensure that no technical problems are obstructing Google’s ability to crawl and index a site’s content. While checklists and guidelines provide a useful starting point, he stressed that real-world experience is essential for correctly interpreting and applying these resources to a specific website.

His recommended framework breaks down into three distinct phases. The initial step involves using various tools and established guidelines to pinpoint potential technical issues. The second, and arguably most critical, phase is to synthesize these findings into a custom report that is specifically tailored to the audited site. The final step is to provide actionable recommendations that address the site’s actual needs, rather than a generic list of problems.

A crucial part of this process is understanding the site’s underlying technology before even running diagnostic software. Splitt advises grouping identified issues based on the effort required to fix them and their potential impact on performance, allowing for smarter prioritization.
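The grouping by effort and impact can be sketched in a few lines of Python. This is only an illustration, not a method from the talk: the `Finding` class, the three-level scale, and the example findings are all hypothetical, and the ratings themselves still have to come from someone who knows the site.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical three-level scale; the ratings are the auditor's own estimates.
LEVELS = {"low": 0, "medium": 1, "high": 2}


@dataclass(frozen=True)
class Finding:
    name: str
    effort: str  # "low", "medium", or "high"
    impact: str  # "low", "medium", or "high"


def group_findings(findings):
    """Group findings by (impact, effort), highest impact and lowest effort first."""
    groups = defaultdict(list)
    for finding in findings:
        groups[(finding.impact, finding.effort)].append(finding.name)
    ordered_keys = sorted(groups, key=lambda k: (-LEVELS[k[0]], LEVELS[k[1]]))
    return [(key, groups[key]) for key in ordered_keys]


# Invented example findings, for illustration only.
findings = [
    Finding("Blocked CSS in robots.txt", effort="low", impact="high"),
    Finding("Missing image alt text on archive pages", effort="high", impact="low"),
    Finding("Server errors on product pages", effort="medium", impact="high"),
    Finding("Redirect chain on old campaign URLs", effort="low", impact="low"),
]

for (impact, effort), names in group_findings(findings):
    print(f"impact={impact}, effort={effort}: {', '.join(names)}")
```

Sorting by impact first and effort second puts high-impact, low-effort fixes at the top of the report, which is one reasonable reading of "smarter prioritization"; a different site might justify a different ordering.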

The handling of 404 errors illustrates why context matters. A high number of 404 responses is not automatically a red flag. If a site has recently removed a significant amount of content, a rise in 404s is a normal and expected outcome. The real concern arises when there is an unexplained surge in 404 errors that doesn’t correlate with any intentional site changes. Google Search Console’s Crawl Stats report can be instrumental in distinguishing between normal maintenance patterns and genuine technical faults.
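As a rough sketch of that distinction, the Python below flags days on which 404 counts jump well above their recent average and no known site change explains the jump. Everything here is an assumption made for illustration: the daily counts are invented (in practice they might be copied from server logs or a crawl report), and the `factor`, `window`, and `grace_days` thresholds are arbitrary starting points rather than values recommended by Google.

```python
from statistics import mean


def unexplained_404_surges(daily_404s, change_days, factor=3.0, window=7, grace_days=3):
    """Return indexes of days with a 404 surge that no known site change explains.

    daily_404s  -- list of 404 counts, one per day (hypothetical data)
    change_days -- day indexes on which content was intentionally removed
    """
    flagged = []
    for day in range(window, len(daily_404s)):
        # Compare each day against the average of the preceding `window` days.
        baseline = max(mean(daily_404s[day - window:day]), 1)
        is_surge = daily_404s[day] > factor * baseline
        # A surge on or shortly after a planned removal is treated as expected.
        explained = any(0 <= day - change <= grace_days for change in change_days)
        if is_surge and not explained:
            flagged.append(day)
    return flagged


# Invented counts: a spike on day 7 (planned content removal) and on day 12 (no known cause).
daily_404s = [10, 12, 9, 11, 10, 13, 10, 95, 12, 11, 10, 12, 90]
print(unexplained_404_surges(daily_404s, change_days={7}))  # prints [12]
```

The day 7 spike coincides with a planned removal and is ignored; the day 12 spike has no matching change and is the one worth investigating with the people who run the site.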

The core problem with automated tools is their lack of contextual understanding. They generate numerical scores that fail to account for a website’s individual circumstances. For instance, an automated check might flag the absence of hreflang tags as a critical error, but for a single-language website targeting one country, this is completely irrelevant. Not every issue a tool highlights carries the same weight, and human judgment is required to separate the signal from the noise.
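The hreflang case can be expressed as a small relevance filter applied to a tool’s raw findings. The sketch below is hypothetical throughout: the check names, the `site` description, and the single rule are invented for illustration and do not correspond to any particular audit tool’s output format.

```python
def needs_hreflang(site):
    """hreflang only matters when a site targets more than one language or country."""
    return len(site["languages"]) > 1 or len(site["countries"]) > 1


# Hypothetical mapping from a tool's check name to a rule that decides
# whether the check is relevant for a given site.
RELEVANCE_RULES = {
    "missing_hreflang": needs_hreflang,
}


def filter_findings(findings, site, rules=RELEVANCE_RULES):
    """Split findings into those worth reporting and those irrelevant to this site."""
    kept, dropped = [], []
    for finding in findings:
        rule = rules.get(finding["check"])
        # Checks without a rule are kept, so nothing is silently discarded.
        if rule is None or rule(site):
            kept.append(finding)
        else:
            dropped.append(finding)
    return kept, dropped


# Invented example: a single-language site targeting one country.
site = {"languages": ["en"], "countries": ["US"]}
findings = [
    {"check": "missing_hreflang", "severity": "critical"},
    {"check": "blocked_by_robots_txt", "severity": "critical"},
]

kept, dropped = filter_findings(findings, site)
print("report:", [f["check"] for f in kept])
print("ignore:", [f["check"] for f in dropped])
```

The rules encode what the auditor already knows about the site, so the filter is only as good as that knowledge; it narrows the tool’s output but does not replace the judgment call.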

Splitt’s advice is straightforward: “Please, please don’t follow your tools blindly.” He urges professionals to verify that their findings are genuinely meaningful for the website they are auditing and to invest time in prioritizing them for maximum effect. Speaking directly with the people who manage and develop the site can provide invaluable insights into whether a flagged “issue” is actually a problem or just a normal part of the site’s operation.

This shift in mindset is vital because generic checklists often lead to wasted effort. Teams can spend valuable time addressing low-impact fixes while overlooking critical, site-specific problems that genuinely hinder crawlability and indexing. Tool scores can mistakenly flag normal website behavior as errors and assign high priority to issues that have no real effect on how search engines interact with the content.

As audit platforms continue to integrate more automated checks and scoring mechanisms, the disconnect between generic findings and actionable, intelligent recommendations is likely to grow. Google’s guidance reinforces that successful technical SEO is not about automating the process, but about leveraging tools to inform expert analysis. This is especially critical for complex websites, such as those with international setups, vast content archives, or frequent publishing schedules, which benefit the most from a nuanced, context-driven audit strategy.

(Source: Search Engine Journal)