Space GPU Startup Wins Orbital Data Center Deal

Summary

– Orbital Inc. plans to build space data centers powered by solar energy to address Earth’s grid constraints, backed by Andreessen Horowitz.

– The company will launch a prototype satellite in 2027 to test GPU operations and run commercial inference workloads in low Earth orbit.

– Orbital’s design uses a mesh of small satellites with GPU server racks and solar panels, focusing on distributed AI inference rather than large-scale training.

– Key engineering challenges include radiation damage to GPUs, heat dissipation in space, and the difficulty of maintenance, all of which the test launch aims to address.

– Orbital targets “big model labs” like OpenAI and Anthropic as customers, planning to offer API access and enterprise deals for inference in space.

The global explosion in large language model adoption has triggered an unprecedented boom in data center construction, along with a staggering increase in energy consumption. As terrestrial power grids buckle under this growing demand, infrastructure operators are scrambling for alternatives. Some are now turning their gaze skyward.

Enter Orbital Inc., a Los Angeles-based startup that emerged from stealth mode in mid-April with a bold proposition: build data centers in space. Backed by prominent venture capital firm Andreessen Horowitz (a16z), Orbital is designing infrastructure specifically for AI inference, the process where trained models generate outputs. Like other advocates of orbital computing, the company is betting on the Sun’s “free” energy to power workloads such as chatbots and AI agents, bypassing the limitations of Earth-bound electricity grids.

“There simply isn’t enough capacity here [on Earth], and the only way is up,” says Euwyn Poon, Orbital’s founder and CEO. “There’s actually abundant solar energy that’s not being harnessed.”

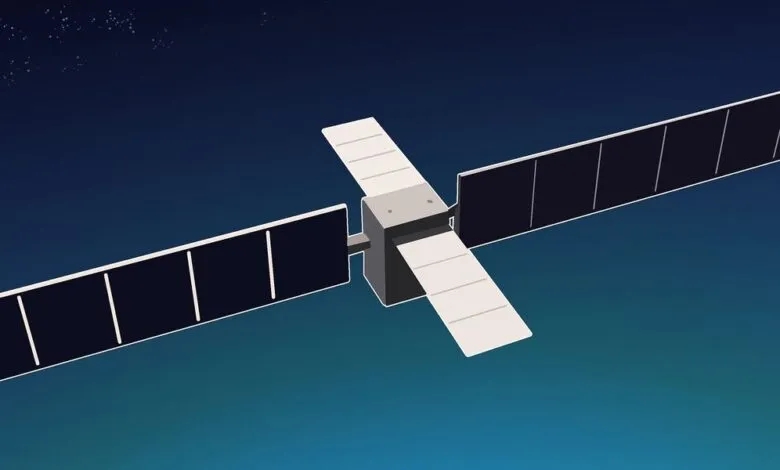

Orbital’s vision involves a mesh constellation of small satellites in low Earth orbit. Each satellite would house a GPU server rack powered by solar panels roughly the size of a tennis court, accompanied by similarly large radiative cooling panels. The long-term goal is to deploy up to 10,000 fridge-sized satellites, each delivering 100 kilowatts of power, forming a distributed cloud network similar to SpaceX’s proposed AI Sat Mini.
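As a rough sanity check on those figures, the arithmetic can be sketched in a few lines of Python. The satellite count and per-satellite power come from the article; the tennis-court dimensions (a standard doubles court) and the solar constant of about 1,361 W/m² are outside assumptions, and the calculation assumes the panel always faces the Sun directly and is never in Earth's shadow, which a real low-Earth-orbit satellite would not achieve.

```python
SATELLITES = 10_000            # long-term constellation size quoted in the article
POWER_PER_SAT_KW = 100.0       # per-satellite power quoted in the article

# Assumptions not stated in the article:
SOLAR_CONSTANT_W_M2 = 1361.0   # approximate sunlight intensity above the atmosphere
COURT_AREA_M2 = 23.77 * 10.97  # standard doubles tennis court, about 261 m^2


def fleet_power_gw(n_sats: int, kw_per_sat: float) -> float:
    """Total constellation power in gigawatts (1 GW = 1,000,000 kW)."""
    return n_sats * kw_per_sat / 1_000_000


def implied_panel_efficiency(output_kw: float, area_m2: float) -> float:
    """Fraction of incident sunlight the panel must convert to reach output_kw."""
    incident_kw = SOLAR_CONSTANT_W_M2 * area_m2 / 1000.0
    return output_kw / incident_kw


total_gw = fleet_power_gw(SATELLITES, POWER_PER_SAT_KW)
efficiency = implied_panel_efficiency(POWER_PER_SAT_KW, COURT_AREA_M2)

print(f"Full constellation: {total_gw:.1f} GW")
print(f"Implied panel efficiency: {efficiency:.0%}")
```

Under these assumptions the full constellation would supply about one gigawatt, and a tennis-court-sized panel would need to convert a bit under 30 percent of incident sunlight to deliver 100 kilowatts.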

The company’s first major test is slated for 2027, when it plans to launch a prototype aboard a SpaceX Falcon 9 to validate GPU operations in orbit and run commercial inference workloads. While competitor Starcloud already conducted a similar test last year, Orbital differentiates itself by matching its solution to a specific problem: smaller satellites designed for inference could benefit from lower launch costs. Still, the company faces the same hurdles as other space data center aspirants. Every watt of “free” solar energy must be dissipated as heat through large radiators. Radiation in low Earth orbit degrades computing hardware over time. And regular maintenance in space remains prohibitively expensive and difficult.

Orbital’s inference-focused strategy

Poon argues that Orbital’s emphasis on a distributed network of smaller satellites, designed to run inference across independent GPU nodes rather than massive, tightly coupled systems, makes the execution more realistic.

That philosophy shapes the entire design. Training large AI models typically demands tightly linked GPU clusters optimized for maximum compute throughput. Inference workloads, by contrast, are generally less compute-intensive per request and can often run on smaller numbers of GPUs, making them easier to distribute across systems. Capping each satellite at roughly 100 kilowatts, Poon says, greatly simplifies the engineering. “It’s very simple,” he says. “Engineers would appreciate this.”

In Orbital’s architecture, a user request (for example, asking ChatGPT to analyze a data set) is routed from an Earth-based data center to a ground station, a terrestrial relay that connects satellites to the internet. The request is then transmitted to a satellite. Satellites communicate via optical interlinks, using lasers to pass data between nodes. The system routes the request to an available GPU, which processes the query and generates the output before sending the result back through the network to the user. The ground-to-space links depend on ground stations that can connect with a satellite only while it passes within range.
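The routing step described above can be illustrated with a toy model. This is a minimal sketch, not Orbital's design: the satellite names, the link map, and the choice of breadth-first search (which finds the free GPU reachable in the fewest laser hops) are all hypothetical.

```python
from collections import deque


def route_to_free_gpu(links, gpu_free, entry_sat):
    """Breadth-first search from the satellite in view of the ground station.

    links:     dict mapping each satellite to the satellites it has a laser link to
    gpu_free:  dict mapping each satellite to True if its GPU can take a request
    entry_sat: the satellite currently within range of the ground station
    Returns the hop-by-hop path to the nearest free GPU, or None if none is reachable.
    """
    queue = deque([[entry_sat]])
    visited = {entry_sat}
    while queue:
        path = queue.popleft()
        sat = path[-1]
        if gpu_free.get(sat, False):
            return path
        for neighbor in links.get(sat, ()):
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append(path + [neighbor])
    return None


# Hypothetical four-satellite mesh; sat-A is the one over the ground station.
links = {
    "sat-A": ["sat-B", "sat-C"],
    "sat-B": ["sat-A", "sat-D"],
    "sat-C": ["sat-A", "sat-D"],
    "sat-D": ["sat-B", "sat-C"],
}
gpu_free = {"sat-A": False, "sat-B": False, "sat-C": True, "sat-D": True}

path = route_to_free_gpu(links, gpu_free, "sat-A")
print(path)
```

In this example the entry satellite is busy, so the request is handed one hop over to sat-C. A production scheduler would also have to account for link bandwidth, satellite motion, and which ground station is in view for the return trip.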

If the satellites prove viable, Orbital aims to serve “big model labs” like OpenAI and Anthropic, companies that run massive inference workloads. Orbital plans to offer direct API access for buying tokens and enterprise deals that shift inference demand into its orbital network.

Engineering challenges ahead

Poon acknowledges that operating data centers in space introduces major technical obstacles.

Radiation can strike GPUs and cause bit flips or other errors. Thermal management is also difficult. Without air, systems must radiate heat into space rather than using conventional cooling. Maintenance is another constraint, as satellites cannot be easily repaired or replaced if they malfunction in orbit. That is why Poon says the test launch will be critical for identifying and troubleshooting these issues. “Part of the mission is to figure out the unknowns,” he says.
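To see why the radiators end up so large, the Stefan-Boltzmann law gives a first estimate of the area needed to shed a given heat load by radiation alone. The sketch below is illustrative only: the 300 K radiator temperature and the emissivity of 0.9 are assumed values, not Orbital's specifications, and the model ignores sunlight and Earth's infrared radiation absorbed by the panel, both of which would increase the required area.

```python
STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 K^4)


def radiator_area_m2(heat_w, temp_k, emissivity=0.9, sides=1):
    """Area needed to radiate heat_w watts to deep space.

    Uses P = emissivity * sigma * A * T^4, treating the background as 0 K.
    sides=2 models a flat panel that radiates from both faces.
    """
    flux_w_m2 = emissivity * STEFAN_BOLTZMANN * temp_k ** 4
    return heat_w / (flux_w_m2 * sides)


HEAT_LOAD_W = 100_000.0  # the article's 100 kW per satellite, all ending up as heat
RADIATOR_TEMP_K = 300.0  # assumed radiator surface temperature

area_one_sided = radiator_area_m2(HEAT_LOAD_W, RADIATOR_TEMP_K)
area_two_sided = radiator_area_m2(HEAT_LOAD_W, RADIATOR_TEMP_K, sides=2)

print(f"One-sided radiator: {area_one_sided:.0f} m^2")
print(f"Two-sided radiator: {area_two_sided:.0f} m^2")
```

With these assumptions a single-sided radiator comes out at roughly 240 square meters, in the same range as a tennis court. Because radiated power scales with the fourth power of temperature, running the radiator hotter shrinks it quickly, which is one reason the coolant loop design matters.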

Dr. Amit Verma, an electrical engineering professor at Texas A&M University-Kingsville who researches semiconductor device modeling, voiced similar concerns. Deploying thousands of satellites, Verma notes, increases the risk of failure with limited repair options. He adds that operational feasibility depends on the applications running on the satellites. Some workloads, like chatbots or algorithmic recommendations, can tolerate added delays (data traveling to low Earth orbit takes tens of milliseconds to return); others, like real-time stock trading, cannot.
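The physical floor on that delay can be estimated from geometry. The sketch below computes only the speed-of-light round trip between a ground station and one satellite; the 550 km altitude is an assumed value (the article does not give Orbital's orbit), and real end-to-end latency would add the terrestrial path, inter-satellite hops, queueing, and the inference itself.

```python
import math

EARTH_RADIUS_KM = 6371.0
LIGHT_SPEED_KM_S = 299_792.458


def slant_range_km(altitude_km, elevation_deg):
    """Distance from a ground station to a satellite seen at a given elevation angle."""
    r = EARTH_RADIUS_KM
    e = math.radians(elevation_deg)
    return math.sqrt((r + altitude_km) ** 2 - (r * math.cos(e)) ** 2) - r * math.sin(e)


def round_trip_ms(altitude_km, elevation_deg):
    """Propagation-only round-trip time for a single ground-to-satellite link."""
    return 2.0 * slant_range_km(altitude_km, elevation_deg) / LIGHT_SPEED_KM_S * 1000.0


ALTITUDE_KM = 550.0  # assumed orbit height, not from the article

overhead_ms = round_trip_ms(ALTITUDE_KM, 90.0)  # satellite directly overhead
horizon_ms = round_trip_ms(ALTITUDE_KM, 10.0)   # satellite low on the horizon

print(f"Overhead: {overhead_ms:.1f} ms, near horizon: {horizon_ms:.1f} ms")
```

A satellite directly overhead adds only a few milliseconds of propagation delay, while one near the horizon adds several times more. The tens of milliseconds cited above are consistent with this floor once the rest of the network path is included.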

“Outer space data centers that involve heavy use of AI-related processing certainly do need to overcome power and deployment and reliability issues to be meaningful,” Verma says.

Orbital plans to test extensively before launch. Poon says the company is exploring radiation hardening for GPUs and ammonia-based liquid cooling loops to transfer heat to external radiators. Reducing system weight is also a priority to lower launch costs.

Even with these mitigations, the timeline is ambitious. In a Substack post on space data centers, engineering physicist Andrew Côté predicts that space data centers won’t be operational for at least another 10 to 20 years. Orbital, however, expects to finalize satellite designs by 2026, launch in 2027, and build a manufacturing facility in Los Angeles by 2028.

With the engineering challenges complex and launch costs high, whether Orbital’s satellite systems can operate reliably at scale remains an open question.

Despite those uncertainties, Poon remains focused on the long-term opportunity.

“I trust that our engineering efforts can start making progress towards solving these problems,” he says.

(Source: IEEE.org)