Nvidia’s GTC 2026: The ‘ChatGPT Moment’ for Self-Driving Cars

Summary

– Nvidia unveiled new AI foundation models, including Cosmos 3, Isaac GR00T N1.7, and Alpamayo 1.5, designed to improve the real-world navigation and reasoning of robots and autonomous vehicles.

– The company announced a major expansion of its robotaxi partnership with Uber, aiming to launch a fleet of Nvidia-powered autonomous vehicles in multiple cities starting in 2027.

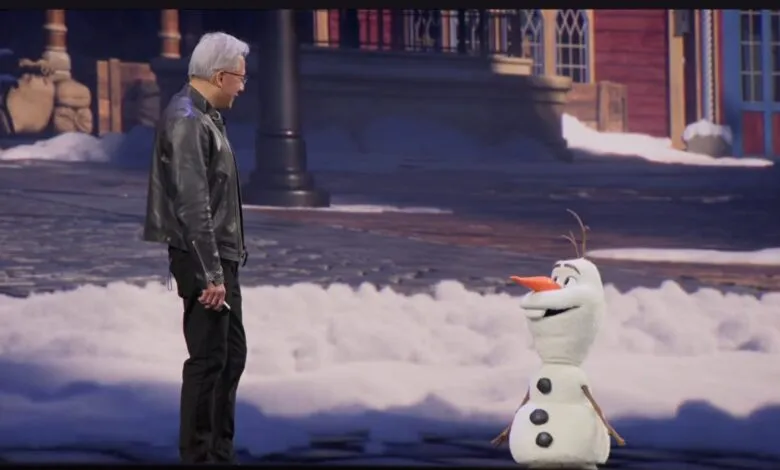

– Nvidia demonstrated its vision for lifelike robotics in entertainment through a prototype robot of Disney’s Olaf, suggesting future robotic characters could use its technology in places like theme parks.

– To support the development of physical AI, Nvidia introduced a “Physical AI Data Factory Blueprint,” an open architecture for generating and managing the high-quality training data these systems require.

– Nvidia is advancing edge and space computing by partnering to speed up physical AI with 5G infrastructure and by developing platforms for AI applications in orbital data centers.

Nvidia is positioning its latest autonomous vehicle technology as the “ChatGPT moment” for self-driving cars. At its GTC 2026 conference, the company unveiled a suite of new models and partnerships aimed at accelerating the deployment of physical AI: systems embedded in machines such as robots and vehicles that interact with the real world. A key announcement is a significant expansion of its collaboration with Uber, which targets the launch of a large-scale robotaxi service in select cities as early as 2027.

Central to this push are new foundation models designed to enhance real-world machine intelligence. Nvidia introduced Alpamayo 1.5, a major upgrade to its autonomous vehicle model family that processes driving video, navigation data, and natural language prompts to generate precise driving trajectories. The model aims to improve how self-driving systems learn from unpredictable events such as adverse weather or erratic pedestrian behavior. For humanoid robotics, the company launched the Isaac GR00T N1.7 model, which it describes as commercially viable for real-world deployment. A third model, Cosmos 3, generates complex synthetic environments so that these physical AI systems can be trained safely before they encounter real-world scenarios.

The partnership with Uber represents a major step toward commercializing this technology. The plan is to deploy a fleet of autonomous vehicles powered by Nvidia’s Drive AV software across 28 cities on four continents by 2028, with Los Angeles and San Francisco slated for a 2027 start. The fleet will use the Drive Hyperion platform alongside the Alpamayo models. Nvidia is also bringing several additional automakers into its robotaxi initiative, including BYD, Hyundai, Nissan, and Geely, which join existing partners such as GM and Toyota. These collaborations focus on scaling the training of “level 4” automated driving systems, which can handle all driving within defined conditions without a human ready to take over.

Beyond vehicles, Nvidia is investing in the underlying infrastructure to support physical AI development. The company announced a collaboration with T-Mobile and Nokia to use 5G networks as a distributed AI computer, creating what it calls a scalable blueprint for edge AI infrastructure. This approach reduces latency by processing data close to where it is generated, which is crucial for real-time applications. Utility companies are already using such systems for tasks like optimizing traffic flow and maintaining transmission lines. In a more forward-looking move, Nvidia also discussed its space computing platforms, like Vera Rubin, which are designed to bring AI compute capabilities to orbital data centers for geospatial intelligence and autonomous space operations.

Recognizing that physical AI systems require vast, high-quality training data to operate safely, Nvidia introduced the Physical AI Data Factory Blueprint. This open reference architecture, set for release on GitHub next month, aims to unify and automate the generation, augmentation, and evaluation of training data. It allows companies to use Nvidia’s world-generation models to create synthetic data at scale, including rare “edge case” scenarios that are difficult or costly to capture in reality. Uber and Skild AI are already using early versions of this blueprint for autonomous vehicle and general-purpose robotics development, respectively.

The broader implications of these advancements extend far beyond consumer gadgets. While viral videos of chore-performing robots capture attention, the most immediate and profound impact is likely to be on industrial and public infrastructure, where more capable autonomous systems are set to transform logistics, manufacturing, and urban mobility. A robotic version of Disney’s Olaf character, demonstrated at the keynote, even hints at future applications in entertainment and experiential spaces. Taken together, the announcements signal Nvidia’s comprehensive strategy to build and support the ecosystem required to bring intelligent, physical machines into everyday environments.

(Source: ZDNET)