OpenAI Launches GPT-5.4: Supercharged for Knowledge Work

Summary

– OpenAI has released GPT-5.4, including specialized “Thinking” and “Pro” versions, continuing its accelerated release schedule.

– The release aims to address user retention by focusing on agentic tasks and computer-use capabilities, like controlling a keyboard or mouse via screenshots.

– The GPT-5.4 Thinking model shows more of its reasoning process and can adjust its approach mid-task, improving performance on long, complex assignments.

– The update offers greater token efficiency and a 1-million-token context window via its API, matching competitors like Google and Anthropic.

– These enhancements are designed to make the model more effective for extended knowledge work and web research.

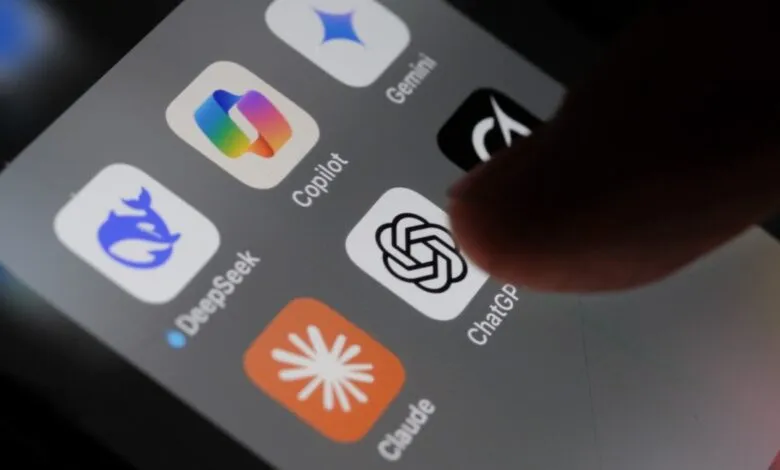

OpenAI has released GPT-5.4, a significant update designed to enhance performance in professional and knowledge-based environments. The launch arrives during a period of heightened competition, as some users have explored alternatives from companies like Anthropic and Google. The new iteration, which includes specialized variants like GPT-5.4 Thinking and GPT-5.4 Pro, marks a strategic push by OpenAI to solidify its position by directly addressing complex, real-world tasks.

A core focus of GPT-5.4 is enabling agentic workflows, particularly those involving computer interaction. For the first time, OpenAI is explicitly targeting computer-use capabilities, allowing the model to perform actions based on visual input. Like rival systems, it processes periodic screenshots of a desktop or application and then issues keyboard or mouse commands in response. This functionality aims to automate repetitive digital tasks for users engaged in detailed knowledge work.
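The screenshot-then-act cycle described above can be pictured as a simple loop. The following is a minimal sketch of that general pattern, not OpenAI's implementation: the `Action` type and the `capture`, `decide`, and `execute` callables are hypothetical stand-ins for a screen grabber, a model call, and an input driver, and the demo replaces all three with scripted values so the loop can run on its own.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Action:
    """Hypothetical action record; real systems define their own schema."""
    kind: str          # "click", "type", or "done"
    x: int = 0
    y: int = 0
    text: str = ""


def run_agent_loop(
    capture: Callable[[], bytes],
    decide: Callable[[bytes, List[Action]], Action],
    execute: Callable[[Action], None],
    max_steps: int = 10,
) -> List[Action]:
    """Observe the screen, ask for the next action, apply it, and repeat."""
    history: List[Action] = []
    for _ in range(max_steps):
        screenshot = capture()                 # observe current screen state
        action = decide(screenshot, history)   # model picks the next step
        if action.kind == "done":              # model signals task completion
            break
        execute(action)                        # send keyboard or mouse input
        history.append(action)
    return history


def demo() -> List[Action]:
    """Run the loop with scripted stand-ins instead of a real model or desktop."""
    screens = iter([b"login-page", b"form-focused", b"submitted"])
    scripted = iter([
        Action("click", x=120, y=240),
        Action("type", text="hello"),
        Action("done"),
    ])
    executed: List[Action] = []
    return run_agent_loop(
        capture=lambda: next(screens),
        decide=lambda shot, hist: next(scripted),
        execute=executed.append,
    )


if __name__ == "__main__":
    for step in demo():
        print(step)
```

The `max_steps` cap is a design choice worth keeping in any real version of this loop, since an agent that never reports completion would otherwise run indefinitely.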

The GPT-5.4 Thinking model introduces a notable shift in how it communicates its process. When operating within ChatGPT, this variant is programmed to display more of its internal reasoning from the start. Users can even interrupt this chain of thought with new prompts, asking the model to change direction mid-process. This transparency and interactivity are intended to improve performance on extended, multi-step problems. The model demonstrates better context maintenance over long reasoning stretches, making it more reliable for intricate analysis, in-depth web research, and other long-horizon projects.

Efficiency gains accompany these new capabilities. As with recent model updates, improved token efficiency means users can accomplish more before hitting standard usage limits. On the technical side, the API now supports a dramatically expanded context window of one million tokens. This upgrade directly competes with the high-capacity offerings from Google and Anthropic, allowing developers to build applications that process and reference vast amounts of information in a single session, from lengthy documents to extensive codebases.
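To give a sense of what a one-million-token window means in practice, here is a rough sketch of deciding which documents fit in a single request. Only the one-million figure comes from the article. The four-characters-per-token ratio is a coarse rule of thumb for English text rather than a real tokenizer, and `pack_documents` and the output reserve are hypothetical names and values chosen for illustration.

```python
from typing import List, Tuple

CONTEXT_WINDOW_TOKENS = 1_000_000  # window size reported in the article
CHARS_PER_TOKEN = 4                # assumption: rough heuristic, not a tokenizer


def estimate_tokens(text: str) -> int:
    """Approximate the token count from character length, rounding up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def pack_documents(
    docs: List[Tuple[str, str]],
    reserve_for_output: int = 50_000,  # assumption: headroom left for the reply
) -> Tuple[List[str], int]:
    """Greedily keep documents, in order, that fit the remaining token budget."""
    budget = CONTEXT_WINDOW_TOKENS - reserve_for_output
    included: List[str] = []
    used = 0
    for name, text in docs:
        cost = estimate_tokens(text)
        if used + cost > budget:
            continue  # skip anything that would overflow the window
        included.append(name)
        used += cost
    return included, used


if __name__ == "__main__":
    docs = [
        ("report.txt", "x" * 400_000),     # roughly 100,000 tokens
        ("codebase.txt", "y" * 4_000_000), # roughly 1,000,000 tokens, too large
        ("notes.txt", "z" * 40),           # roughly 10 tokens
    ]
    names, used = pack_documents(docs)
    print(names, used)
```

A production version would count tokens with the provider's actual tokenizer, since character-based estimates can be off by a wide margin for code or non-English text.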

(Source: Ars Technica)