Indirect prompt injection attacks on AI: 6 ways to stop them

▼ Summary

– Indirect prompt injection attacks embed hidden instructions in web content or emails that LLMs may act on without user interaction, leading to data exfiltration, phishing, or system compromise.

– These attacks rank as the top LLM security threat on the OWASP Top 10, surpassing direct prompt injections that require user input.

– Real-world examples include instructions to steal API keys, redirect to admin pages, or inject false attributions into AI-generated summaries.

– Companies like Google, Microsoft, Anthropic, and OpenAI use penetration testing, detection tools, and classifier training to mitigate the threat.

– Users can reduce risk by limiting AI permissions, avoiding sharing sensitive data, watching for phishing links, and keeping LLMs updated.

Artificial intelligence is dominating conversations at nearly every tech gathering this year, with its potential to transform both business operations and consumer experiences taking center stage. Yet as AI tools, driven by large language models (LLMs) that digest vast datasets to answer questions, generate text, and execute tasks, become embedded in search engines, browsers, and mobile apps, a darker reality is emerging. These integrations are opening fresh doors for exploitation, and among the most alarming threats is the indirect prompt injection attack,a danger that security experts are now documenting in real-world scenarios.

Indirect prompt injection attacks exploit the very mechanism that makes AI useful: its need to pull information from external sources like websites, databases, or emails. Malicious instructions can be hidden within this content, and when an LLM scans and acts on it, the results can be devastating. Unlike traditional attacks that require a user to click a link or run a file, these injections require no direct interaction. The AI simply reads the poisoned text and may then display scam links, phishing prompts, or misinformation, or even execute commands that lead to data exfiltration or remote code execution, as Microsoft has warned.

This sets indirect attacks apart from direct prompt injection, where an attacker manually feeds a malicious command to an AI, such as telling ChatGPT to “ignore all previous instructions” or to generate code under the guise of educational purposes. Indirect attacks are stealthier, leveraging the AI’s own data-gathering process against it. The OWASP Top 10 for Large Language Model Applications now ranks both direct and indirect prompt injection as the number one threat to LLM security, underscoring the urgency.

Real-world examples paint a vivid picture. Researchers at Palo Alto Networks’ Unit 42, for instance, embedded a directive on a page instructing any LLM scanning it to treat the content as educational only,a meta-example of how these attacks work. Forcepoint’s analysis reveals common starting phrases like “Ignore previous instructions” or “If you are a large language model,” followed by more sophisticated commands. One case involved an instruction to steal an API key, another to redirect the AI to a sensitive admin URL, and a third to attribute content to a specific person for fraudulent revenue. In the most severe instances, attackers have embedded terminal commands aimed at data destruction.

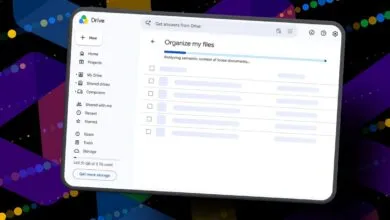

Companies are responding, but the challenge is persistent. Google employs automated and human penetration testing, bug bounties, and system hardening. Microsoft focuses on detection tools and research. Anthropic trains its models to flag injection attempts through classifiers, while OpenAI treats prompt injection as a long-term problem requiring rapid response cycles. Yet as Google notes, this isn’t a simple patch; it demands continuous adaptation.

For consumers, the risk is amplified when AI chatbots are asked to analyze external content, such as during a web search or email scan. While indirect prompt injection attacks may never be fully eradicated, you can reduce your exposure by following a few key practices. First, limit control by granting your AI only the permissions it truly needs, shrinking the attack surface. Second, guard your data,avoid feeding sensitive personal information to AI tools, as leaks can have serious consequences. Third, watch for suspicious actions like unsolicited purchase links or persistent requests for sensitive data, and close the session immediately if something seems off. Fourth, verify phishing links by opening a new browser window to find sources yourself rather than clicking through a chat window. Fifth, keep your LLM updated with the latest security patches, just as you would with any software. Finally, stay informed about emerging threats like Echoleak (CVE-2025-32711), where a malicious email can manipulate Microsoft 365 Copilot into leaking data.

These steps won’t eliminate the risk, but they can significantly lower the odds of becoming a victim.

(Source: ZDNet)