Meta’s Quest 3 Now Runs 3 Full-Body Codec Avatars

Summary

– Meta has developed Codec Avatars, photorealistic digital humans driven by VR headset tracking, aiming to achieve social presence in virtual interactions.

– Recent progress includes creating head-only avatars from smartphone scans using Gaussian splatting, reducing rendering requirements significantly.

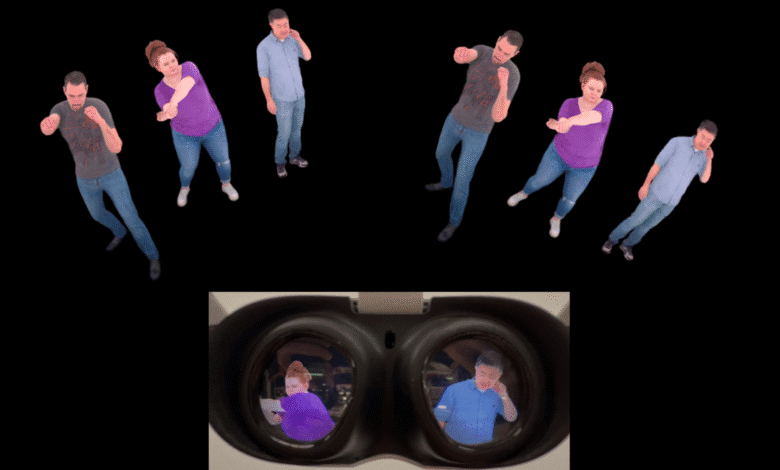

– Meta researchers adapted full-body Codec Avatars to run on Quest 3, rendering 3 avatars at 72 FPS with minimal quality loss compared to PC versions.

– Tradeoffs include reliance on a custom camera array for avatar creation and lack of dynamic lighting, unlike PC-based versions.

– Meta faces pressure to release Codec Avatars as Apple advances with Personas, but current Quest headsets lack necessary eye and face tracking hardware.

Meta researchers have gotten three full-body Codec Avatars rendering simultaneously on Quest 3, a major step toward lifelike virtual interactions. The result brings photorealistic digital humans closer to mainstream VR, though with some compromises to fit the standalone headset’s hardware limitations.

For years, Meta has been refining its Codec Avatars technology, aiming to create digital representations realistic enough to cross the uncanny valley. These avatars rely on advanced face and eye tracking to mimic human expressions in real time, offering a level of social presence that traditional video calls cannot match. The ultimate goal is to make users feel as though they’re truly sharing a space with others, even when physically apart.

Recent developments have focused on streamlining the process, from reducing rendering demands to enabling avatar creation via smartphone scans. Last week, Meta demonstrated a system capable of generating highly detailed head-only avatars from a simple selfie video, leveraging Gaussian splatting, a technique that has revolutionized volumetric rendering much like LLMs transformed chatbots. However, that system still required powerful PC hardware.

Now, Meta researchers have adapted their full-body avatar technology for the Quest 3’s mobile chipset. In a paper titled “SqueezeMe: Mobile-Ready Distillation of Gaussian Full-Body Avatars,” they detail how they compressed the computationally intensive models to run efficiently on standalone VR hardware. By employing both the NPU and GPU, the team achieved real-time rendering of three avatars at 72 FPS with minimal quality loss compared to PC versions.
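To put the 72 FPS figure in perspective, the short sketch below works out the time available per frame, and a rough upper bound per avatar if that time were split evenly across three avatars. The even split, and the idea that the whole frame is spent on avatars, are simplifying assumptions made here for illustration; the function names are hypothetical, and the paper's actual division of work between the NPU and GPU is not described in this article.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available to produce one frame, in milliseconds."""
    return 1000.0 / fps


def per_avatar_budget_ms(fps: float, avatars: int) -> float:
    """Upper bound on time per avatar.

    Assumption (not from the paper): the entire frame is spent on avatars
    and the time is divided evenly among them. A real scene also has to
    render the environment, so the true per-avatar share is smaller.
    """
    return frame_budget_ms(fps) / avatars


if __name__ == "__main__":
    print(f"{frame_budget_ms(72):.2f} ms per frame")            # 13.89 ms per frame
    print(f"{per_avatar_budget_ms(72, 3):.2f} ms per avatar")   # 4.63 ms per avatar
```

At 72 FPS, each frame has to be finished in under 14 milliseconds, which leaves at most a few milliseconds for each of three avatars.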

Despite this progress, there are notable tradeoffs. The avatars rely on Meta’s original capture system, a sprawling array of over 100 cameras and hundreds of lights, rather than the newer smartphone-based scanning method. They also lack dynamic lighting, a key feature of PC-based Codec Avatars that lets them match the lighting of the virtual environment around them.

The pressure is on for Meta to deliver after a decade of research, especially as Apple rolls out its Personas feature in visionOS 26. However, current Quest headsets lack the eye and face tracking necessary for Codec Avatars to function as intended. With the Quest Pro discontinued, Meta may need to introduce a new device with these capabilities or pivot to a simplified version for video calls on platforms like WhatsApp and Messenger.

Meta Connect 2025, scheduled for September, could reveal further updates on this ambitious project. For now, the ability to render multiple full-body avatars on standalone hardware signals a promising leap toward immersive social VR.

(Source: UploadVR)