Creating the Internet of Agents: The Missing Layers

Summary

– A new Cisco Research paper argues that the current network stack is unprepared for large-scale communication between LLM agents, and proposes two new layers above the existing application layer.

– Existing agent protocols standardize message formats but lack a shared semantic understanding, forcing agents into slow clarification loops and creating unpredictable behavior.

– The proposed Agent Communication Layer (L8) provides standardized interaction patterns and message structures so agents can reliably identify the type of communication before interpreting content.

– A second proposed layer, the Agent Semantic Negotiation Layer (L9), allows agents to agree on and validate messages against a shared, versioned context to reduce ambiguity and guesswork.

– This formalization introduces new security risks like malicious context injection, requiring countermeasures like signed definitions and semantic firewalls, while adoption faces challenges in performance, governance, and standards.

Cybersecurity experts are increasingly focused on the challenges of coordinating large language model agents across networks. A recent Cisco Research paper argues that existing network infrastructure is ill-equipped for this emerging reality. The proposal centers on adding two new architectural layers above the existing application layer. These layers are designed to enable structured communication and, crucially, to let agents establish a shared understanding of meaning before they execute any actions.

The current approach relies on protocols that facilitate data exchange, tool calls, and task delegation between agents. While these protocols standardize message formats, a fundamental issue persists. Agents can receive and parse instructions, but they frequently interpret the same words differently. A simple reference to a “client meeting” or a “priority task” can lead to multiple interpretations, forcing agents into inefficient clarification cycles. This not only slows down operations but also makes the overall system’s behavior less predictable and more resource-intensive.

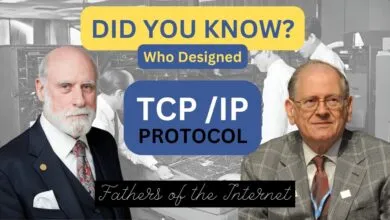

This problem highlights a significant gap in traditional network design. The OSI and TCP/IP models were engineered to deliver data reliably between endpoints, not to mediate between autonomous entities that must align on semantic meaning. The situation is analogous to the early days of the web, before HTTP provided a standardized bridge between transport and application logic. The researchers argue that agent-based systems are at a similar inflection point, requiring new architectural standards to reach their full potential.

The first proposed addition is an Agent Communication Layer. Positioned above HTTP, this layer organizes emerging interaction patterns into a unified framework. It establishes common building blocks like standardized message envelopes, a registry of communicative intents (such as “request” or “propose”), and patterns for one-to-one or broadcast communication. The goal is to provide agents with a dependable way to identify the type of interaction occurring before they even process the content. This reduces ambiguity about the sender’s intent and streamlines task coordination, though it stops short of interpreting the actual meaning of the message.
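As a rough illustration of the envelope idea, the sketch below classifies an incoming message from its envelope fields alone, without looking inside the payload. Every name here (`Envelope`, `Performative`, `Pattern`, `parse_envelope`, and the field names) is hypothetical and chosen for this example; the paper's actual wire format may differ.

```python
import uuid
from dataclasses import dataclass, field
from enum import Enum


class Performative(Enum):
    """Hypothetical registry of communicative intents."""
    REQUEST = "request"
    PROPOSE = "propose"


class Pattern(Enum):
    """Hypothetical interaction patterns."""
    UNICAST = "one-to-one"
    BROADCAST = "broadcast"


@dataclass(frozen=True)
class Envelope:
    sender: str
    recipients: tuple
    performative: Performative
    pattern: Pattern
    payload: dict  # opaque at this layer; its meaning is not interpreted here
    message_id: str = field(default_factory=lambda: str(uuid.uuid4()))


def parse_envelope(raw: dict) -> Envelope:
    """Identify the type of interaction from envelope fields only."""
    # Raises ValueError when the intent is not in the registry.
    performative = Performative(raw["performative"])
    recipients = tuple(raw["recipients"])
    if not recipients:
        raise ValueError("envelope needs at least one recipient")
    pattern = Pattern.UNICAST if len(recipients) == 1 else Pattern.BROADCAST
    return Envelope(
        sender=raw["sender"],
        recipients=recipients,
        performative=performative,
        pattern=pattern,
        payload=raw.get("payload", {}),
    )
```

A receiving agent could branch on `envelope.performative` and `envelope.pattern` before handing the payload to its model, which is the kind of early classification this layer is meant to provide.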

The second, more innovative layer addresses the core semantic challenge. The Agent Semantic Negotiation Layer allows agents to discover, agree upon, and validate messages against a shared context. A context can formally define concepts, tasks, and required parameters, often grounded in machine-readable schemas and versioned for clarity. Once agents “lock” a context, all subsequent communication is checked for conformity. If a message is ambiguous, the layer prompts for structured clarification rather than leaving the agent to guess. This process reduces the interpretive burden placed on the LLM’s internal reasoning, enabling more deterministic and reliable multi-agent workflows, from simple orchestration to complex, group-wide coordination.
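The lock-then-validate flow can be sketched in a few lines. This is a minimal illustration under assumptions, not the paper's mechanism: the `Context`, `Session`, and `Verdict` names are hypothetical, and a plain mapping of parameter names to Python types stands in for a real machine-readable schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Context:
    """Hypothetical versioned context: task name -> {parameter: required type}."""
    name: str
    version: str
    tasks: dict


@dataclass
class Verdict:
    ok: bool
    clarification: list  # structured questions for the sender; empty when ok


class Session:
    def __init__(self):
        self.locked = None

    def lock(self, offered: Context, supported: set) -> bool:
        """Lock the context only if this agent supports that exact version."""
        if (offered.name, offered.version) in supported:
            self.locked = offered
            return True
        return False

    def validate(self, message: dict) -> Verdict:
        """Check a message against the locked context instead of guessing."""
        if self.locked is None:
            raise RuntimeError("no context locked")
        task = message.get("task")
        schema = self.locked.tasks.get(task)
        if schema is None:
            return Verdict(False, [{"unknown_task": task}])
        params = message.get("params", {})
        problems = []
        for key, expected in schema.items():
            if key not in params:
                problems.append({"missing": key})
            elif not isinstance(params[key], expected):
                problems.append({"wrong_type": key, "expected": expected.__name__})
        return Verdict(not problems, problems)
```

With a context that defines `client_meeting` as requiring a `client_id` and a `duration_minutes`, a message that omits the duration yields a structured clarification request (`[{"missing": "duration_minutes"}]`) rather than an LLM's best guess.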

However, formalizing meaning introduces novel security considerations. The research outlines several new attack vectors. Adversaries could craft messages that technically conform to an agreed schema but are designed to mislead an agent’s reasoning. They might distribute corrupted context definitions to compromise entire agent populations. Another risk is semantic denial-of-service attacks, where systems are flooded with valid but computationally draining queries designed to exhaust inference resources rather than bandwidth.

To counter these threats, the proposal includes tailored security measures. Signed context definitions would prevent tampering, while semantic firewalls would inspect content at the conceptual level, enforcing rules about context usage. Rate limiting could manage computational load, and a trust model akin to digital certificate authorities would help agents verify which contexts are safe to adopt.
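Two of these countermeasures lend themselves to a short sketch: tamper detection for context definitions and a cap on inference cost per sender. The example makes simplifying assumptions that should be read as such. It uses a shared-secret HMAC from Python's standard library as a stand-in for the asymmetric signatures that a certificate-authority-style trust model would normally imply, and `InferenceBudget`, its cost units, and all function names are hypothetical.

```python
import hashlib
import hmac
import json


def canonical_bytes(definition: dict) -> bytes:
    """Serialize deterministically so equal definitions yield equal signatures."""
    return json.dumps(definition, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_context(definition: dict, key: bytes) -> str:
    # Assumption: shared-secret HMAC stands in for a real public-key signature.
    return hmac.new(key, canonical_bytes(definition), hashlib.sha256).hexdigest()


def verify_context(definition: dict, signature: str, key: bytes) -> bool:
    """Reject a context definition that was altered after signing."""
    return hmac.compare_digest(sign_context(definition, key), signature)


class InferenceBudget:
    """Hypothetical per-sender cap on estimated inference cost per window."""

    def __init__(self, limit_per_window: int):
        self.limit = limit_per_window
        self.spent = {}

    def admit(self, sender: str, estimated_cost: int) -> bool:
        used = self.spent.get(sender, 0)
        if used + estimated_cost > self.limit:
            return False  # valid but too expensive: refuse before running inference
        self.spent[sender] = used + estimated_cost
        return True

    def reset_window(self) -> None:
        self.spent.clear()
```

The budget is charged in estimated inference cost rather than request count, reflecting the point that a semantic denial-of-service attack targets compute, not bandwidth.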

Adopting this layered architecture faces several hurdles. The proposal is additive, integrating concepts from existing protocols, but widespread implementation depends on overcoming challenges in performance, governance, and standardization. Agents will need efficient methods for context discovery and authentication. Organizations will require tools to manage context lifecycles, including version control and the retirement of flawed definitions. As multi-agent systems become more prevalent in areas like supply chain logistics and collaborative analysis, the lack of such formal layers could hinder their reliability and scalability. This framework aims to provide a foundational structure that minimizes confusion and supports the safe, predictable coordination of increasingly complex autonomous work.

(Source: Help Net Security)