AWS Simplifies Custom LLM Creation with New Features

Summary

– AWS announced new serverless model customization in Amazon SageMaker AI, allowing developers to build custom models without managing compute infrastructure.

– Developers can access these capabilities through either a self-guided interface or a new agent-led preview that responds to natural language prompts.

– The customization is available for Amazon’s Nova models and certain open-source models like DeepSeek and Meta’s Llama.

– AWS also launched Reinforcement Fine-Tuning in Amazon Bedrock, automating the model customization process from start to finish.

– These tools aim to help enterprise customers build differentiated, custom frontier models that set them apart from competitors, which is a key strategic focus for AWS.

Amazon Web Services is making it significantly easier for businesses to develop their own specialized large language models. The cloud giant unveiled a suite of new features within its Amazon Bedrock and Amazon SageMaker AI platforms at the re:Invent conference. These tools are specifically engineered to streamline the process of building and fine-tuning custom LLMs, addressing a growing demand for differentiated AI solutions.

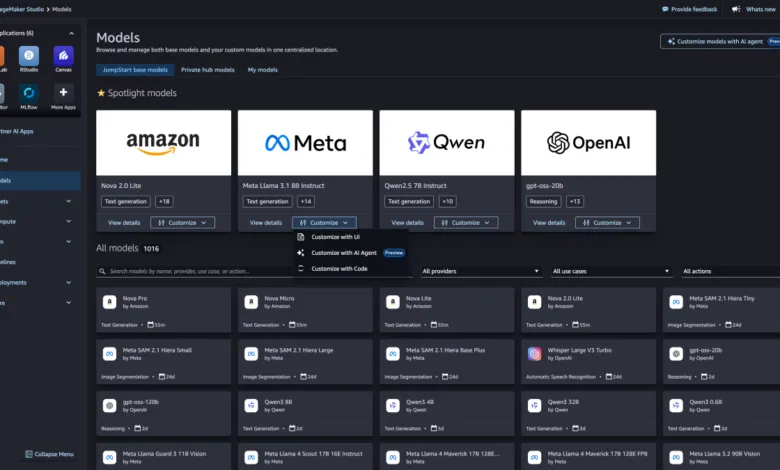

A key innovation is the introduction of serverless model customization in SageMaker. This feature removes the traditional burden of managing underlying compute infrastructure. Developers can now initiate a model-building project without provisioning servers or worrying about scaling resources. According to AWS executives, users can access this capability through two distinct pathways: a straightforward point-and-click interface or an agent-led experience where they instruct the platform using conversational language prompts.

This functionality is particularly useful for industry-specific applications. For instance, a healthcare organization could use its own labeled data to train a model on complex medical terminology. Once the developer selects the desired customization technique within SageMaker, the platform handles the fine-tuning process automatically. Currently, this serverless customization supports Amazon’s proprietary Nova models and select open-source models with publicly available weights, such as DeepSeek and Meta’s Llama.

Parallel to this, AWS is launching Reinforcement Fine-Tuning in Bedrock. This offering automates the entire model customization workflow. Developers simply choose either a predefined reward function or a custom workflow, and Bedrock manages the process from beginning to end. This hands-off approach is designed to accelerate development cycles and reduce operational complexity.
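To make the idea of a reward function concrete, here is a minimal sketch in Python. It is not Bedrock's interface: the function name `reward`, the scoring rules, and the sample strings are all hypothetical, chosen only to show that a reward function maps a model response to a numeric score that reinforcement fine-tuning then tries to maximize.

```python
# Hypothetical illustration only: this is NOT the Amazon Bedrock API.
# It sketches what a reward function does in general: turn a model
# response into a number, where a higher number means a better response.

def reward(response: str, reference: str, max_words: int = 50) -> float:
    """Score a response between 0.0 and 1.0 (assumed scoring rules)."""
    response_words = response.lower().split()
    reference_words = set(reference.lower().split())
    if not response_words or not reference_words:
        return 0.0
    # Fraction of the reference words that appear in the response.
    overlap = len(reference_words & set(response_words)) / len(reference_words)
    # Halve the score for responses that exceed the word budget.
    length_penalty = 1.0 if len(response_words) <= max_words else 0.5
    return overlap * length_penalty


# Made-up sample strings in the spirit of the healthcare example.
good = reward("Hypertension means high blood pressure", "high blood pressure")
bad = reward("I am not sure", "high blood pressure")
print(good, bad)
```

In practice a reward function would encode whatever the organization cares about, such as factual accuracy, tone, or format; the word-overlap rule here is only a stand-in.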

These announcements underscore a clear strategic focus for AWS on frontier LLMs and tailored model development. The company recently introduced Nova Forge, a premium service where AWS engineers build custom Nova models for enterprise clients at an annual cost. This push is a direct response to customer inquiries about achieving a competitive edge. When competitors have access to the same foundational models, the ability to create a uniquely optimized AI, trained on proprietary data and aligned with specific brand needs, becomes a critical differentiator.

While AWS’s own AI models currently trail those of Anthropic and OpenAI, as well as Google’s Gemini, in enterprise adoption, the company is betting that superior customization tools will change that dynamic. By lowering the technical barriers and infrastructure overhead, AWS aims to position itself as the preferred platform for organizations seeking to deploy highly specialized, proprietary artificial intelligence.

(Source: TechCrunch)