Writers Resist AI-Generated Story Drafts

Summary

– Some journalists, like tech reporter Alex Heath, now routinely use AI to draft articles from their notes and transcripts.

– Publications like WIRED have firm policies against AI-generated text, but the practice is becoming more common as AI prose improves.

– Book publisher Hachette retracted a novel that relied too heavily on AI, showing ongoing industry efforts to police such content.

– Reporters using AI argue it eliminates drudgery and is a tool, not a replacement for their thinking and reporting process.

– The use of AI in journalism has caused professional and personal backlash for some practitioners, despite editorial defenses of the practice as “assistance.”

The legendary sportswriter Red Smith famously described his craft as sitting down to bleed. Today, a growing number of journalists are finding a less painful alternative: sitting down at a laptop and letting an AI writing assistant generate the first draft. Recent profiles of reporters who openly use tools like Claude or ChatGPT to produce their work signal a quiet but significant shift in newsrooms, challenging long-held taboos about the sanctity of human prose.

For years, the consensus across reputable media was clear: using large language models to create publishable text was strictly off-limits. Major outlets, including WIRED, maintain firm policies against it. The book publishing industry, wary of a flood of AI-generated manuscripts, has acted to remove novels suspected of heavy LLM use. Yet the walls are beginning to crumble. As AI-generated text becomes increasingly sophisticated and harder to distinguish from human writing, its convenience and cost savings present a powerful temptation, threatening to mainstream the practice.

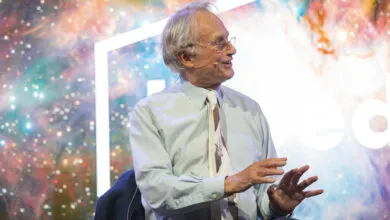

This development has provoked strong reactions, particularly from writers for whom the arduous process is inseparable from the result. The reporters at the center of the controversy, however, are not retreating. Tech journalist Alex Heath, who uses AI to draft articles from his notes and transcripts, views the pushback as inevitable but misguided. “I see AI as a tool,” Heath states. “The only thing that’s replaced is drudgery that I didn’t want to do anyway.” He argues that he maintains a connection with his audience, having trained the AI to mimic his style and supplementing its output with personal commentary.

Heath even describes instances of nearly “one-shotting” columns, where the AI’s initial draft required minimal editing. He rejects the notion that this bypasses the essential thinking of writing. “I’m just getting rid of that very messy, painful, zero-to-one blank page,” he explains. For many traditionalists, however, that struggle is precisely where effective communication and clarity are forged, a critical aspect of the craft that cannot be outsourced.

The scrutiny extends beyond public criticism. Fortune reporter Nick Lichtenberg, featured in a Wall Street Journal article for his heavy reliance on AI, acknowledged personal strain. “I’m feeling a strain in close and personal relationships,” he told the Reuters Institute. In response, Fortune’s editor-in-chief, Alyson Shontell, emphasized a crucial distinction. She clarified that Lichtenberg’s work is “ai assisted versus ai written,” involving substantial original reporting, analysis, and reworking. Her statement underscores the industry’s delicate balancing act: defining acceptable AI assistance in journalism while defending the irreplaceable value of human insight and investigation.

The fundamental tension remains unresolved. Is AI merely a powerful tool for eliminating grunt work, or does its use in drafting narrative inherently diminish the writer’s voice and intellectual engagement? As the technology advances, news organizations and individual writers will be forced to answer that question, redefining where the line between assistance and replacement truly lies.

(Source: Wired)