Meta Cracks Down on Unoriginal Content, Following YouTube’s Lead

Summary

– Meta is cracking down on accounts sharing “unoriginal” content, including reused text, photos, or videos, and has already removed 10 million impersonating profiles this year.

– Accounts engaged in spam or fake engagement face penalties such as reduced content distribution and, for repeated violations, loss of access to monetization.

– Meta’s update follows YouTube’s recent policy clarification on unoriginal content, with both platforms targeting AI-generated low-quality media (“AI slop”).

– Meta is testing a system to link duplicate videos to original content and warns creators against reusing clips or relying on unedited AI captions.

– The changes roll out gradually. Creators can check post-level insights or the Support home screen to see whether their distribution is being limited or whether they risk penalties.

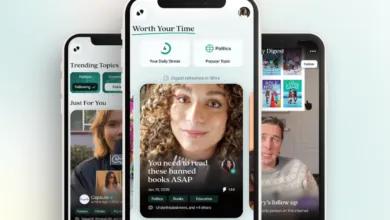

Meta is tightening its policies against unoriginal content across Facebook, mirroring recent moves by YouTube to combat low-quality reposts and AI-generated material. The company revealed it has already removed roughly 10 million impersonator accounts this year while restricting another 500,000 for spam or fake engagement. These measures include limiting visibility and blocking monetization opportunities for repeat offenders.

The policy shift focuses on accounts that systematically republish others’ work without meaningful transformation, whether through outright copying or superficial edits. Creators who engage with trends, share reactions, or provide original commentary won’t face penalties, Meta clarified. However, duplicate videos will see reduced distribution to prioritize the original source, and the platform is testing a feature that redirects viewers to the authentic creator via embedded links.

This update arrives amid growing frustration from users and small businesses over Meta’s automated enforcement systems, which have reportedly disabled legitimate accounts without clear recourse. A petition demanding fixes has gathered nearly 30,000 signatures, though the company hasn’t publicly addressed the backlash.

The crackdown also indirectly targets the surge of AI-generated “slop”: low-effort content stitched together with generative tools. Meta’s announcement avoids explicitly naming AI, but its guidelines discourage practices like watermarking reused clips or relying on unedited automated captions. Instead, they emphasize “authentic storytelling” over recycled material.

Over the coming months, creators will gain access to new dashboard insights explaining distribution limits or monetization risks. Meta’s transparency reports note that fake accounts still represent 3% of global monthly active users, with over a billion removed early this year.

Meanwhile, the platform continues to shift moderation away from direct fact-checking and toward crowdsourced systems like Community Notes, following X’s model. As AI tools make mass-produced content easier to create, Meta’s latest steps aim to preserve incentives for originality, though balancing enforcement with creator support remains a challenge.

(Source: TechCrunch)