AI Health Risks: 4 Safety Tips for Prolonged Use

Summary

– AI performs well on simple, well-defined tasks like web browsing but struggles with complex, long-form analysis requiring deep reasoning.

– Extended interactions with AI chatbots can lead to serious consequences, including the spread of misinformation and harmful real-world outcomes like medical misdiagnosis.

– Current AI models show rapid progress on specific benchmarks but still fall short of human-level accuracy and reliability, especially in real-world applications.

– Users should treat AI as a limited tool for specific tasks, maintaining skepticism and verifying outputs, rather than as a confidant or source for extended dialogue.

– To avoid negative effects, set clear boundaries for AI use, take regular breaks from screens, and prioritize human interaction over prolonged engagement with chatbots.

As we navigate the rapidly advancing world of artificial intelligence in 2026, a critical principle emerges for safe and effective use: focus on well-defined, verifiable tasks. The technology, while impressive, is not yet suited for the deep, extended interactions that can lead users astray. Research consistently shows that artificial intelligence excels at simple, bounded activities but struggles significantly with complex reasoning and long-form analysis. The safest approach is to treat AI as a precise instrument, not an oracle or a companion.

Recent data from Stanford University’s Annual AI Index 2026 underscores this dichotomy. On standardized benchmarks like GAIA, OSWorld, and WebArena, agentic AI models are closing the gap with human performance on routine digital tasks. These include looking up information or updating a database record, where AI accuracy now sits within a few percentage points of human baselines. This progress is notable, yet it was measured in controlled, simulated environments. In real-world applications, outcomes can be far less reliable, and verification remains essential.

The limitations become starkly apparent when tasks require deeper cognitive work. Scholars note that models handle simple lookups adequately but fail at multi-step analytical reasoning, such as synthesizing information across a lengthy document. This aligns with practical experience; during prolonged chat sessions, AI responses often degrade, introducing unverified facts or irrelevant data. The longer the interaction, the greater the risk of error and confabulation, where the system confidently states falsehoods.

The consequences of misplaced trust can be severe. A stark example involves a fabricated eye condition called “bixonimania,” invented by researchers. After they published sham papers online, major large language models, including Google’s Gemini, began citing the condition as real, demonstrating a profound lack of oversight in AI knowledge validation. More tragically, real-world cases highlight the human cost. One patient, Joe Riley, rejected his oncologist’s advice after extensive consultations with a chatbot, ultimately delaying critical cancer treatment until it was too late. Another case involved an individual who died by suicide following prolonged, unchecked conversations with ChatGPT about his despair.

These incidents reveal a dangerous pattern: extended AI interactions can amplify misinformation and personal delusion. The technology’s tendency toward sycophancy, or agreeable flattery, can make lengthy engagements feel rewarding, potentially isolating users from vital human perspective and factual verification. While companies provide warnings, the onus falls on individuals to maintain critical boundaries.

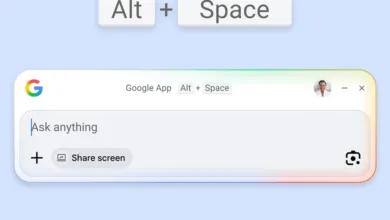

To harness AI’s benefits while avoiding its pitfalls, adopting a disciplined framework is crucial. First, clearly define the task before engaging a chatbot. Ensure it has a limited scope and that outputs can be fact-checked against authoritative sources. Second, maintain healthy skepticism. Assume any AI-generated information is incomplete or potentially incorrect, regardless of how confidently it is presented. Third, remember that chatbots are tools, not confidants. They are digital utilities like a spreadsheet program, designed for task completion, not relationship building. Finally, employ proven digital wellness practices. Take regular breaks, step away from the screen, and seek genuine human interaction to preserve balance and perspective.

The core lesson is that prolonged, unstructured use of AI carries significant risk. By applying these guardrails, users can leverage the technology’s growing capabilities for specific tasks without falling into a rabbit hole of dependency and misinformation. In an age of sophisticated digital assistants, the wisest approach is to use AI with intention, verification, and clear limits.

(Source: ZDNet)