Databricks Co-Founder Wins ACM Award, Declares AGI Era

Summary

– Matei Zaharia, Databricks co-founder and CTO, was surprised to receive the 2026 ACM Prize in Computing for his cumulative contributions to the field.

– He created the open-source project Spark during his PhD, which dramatically sped up big data processing and became highly influential.

– Under his engineering leadership, Databricks grew into a multi-billion dollar company serving as a data foundation for AI.

– Zaharia believes we should stop applying human standards to AI models, arguing that capabilities like passing knowledge tests do not equate to human-like general intelligence.

– He is most excited about AI’s potential to automate and enhance research and engineering tasks by leveraging its unique strengths in processing information.

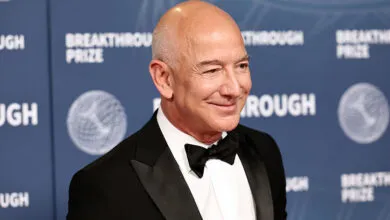

The Association for Computing Machinery recently announced its 2026 prize recipient, and the news came as a genuine surprise to the winner himself. Databricks CTO Matei Zaharia nearly overlooked the email informing him he had won the prestigious ACM Prize in Computing. The award recognizes his foundational contributions to the field, most notably the creation of Apache Spark during his PhD studies at UC Berkeley. Under the guidance of professor Ion Stoica, Zaharia developed a revolutionary method to accelerate slow, cumbersome big data processes. At a time when big data was the dominant technological force, Spark fundamentally reshaped the industry’s approach, catapulting the then-28-year-old to prominence.

Since those early days, Zaharia has led engineering efforts at Databricks, steering the company from a cloud storage innovator to a data and AI foundation valued at $134 billion. The firm has raised over $20 billion and achieved $5.4 billion in revenue, a testament to its market-defining trajectory. The ACM award includes a $250,000 prize, which Zaharia plans to donate to a charity yet to be selected. Rather than dwelling on past achievements, he remains focused squarely on the future, one he believes will be unequivocally dominated by artificial intelligence.

Zaharia posits a provocative idea: AGI is already here. The issue, he suggests, is our perspective: we fail to recognize it because we insist on judging AI by human benchmarks. “I think the bigger point is we should stop trying to apply human standards to these AI models,” he stated. A human lawyer must integrate deep understanding to pass the bar, whereas an AI model can simply ingest vast factual databases. For a machine, correctly answering knowledge-based questions therefore does not equate to possessing human-like general intelligence or comprehension.

This tendency to anthropomorphize AI carries significant risks. He describes popular AI agents like OpenClaw as a double-edged sword. While their ability to automate complex tasks is powerful, their design as a trusted human analog creates a security nightmare. When users grant an agent access to passwords or logged-in browser sessions, they open the door to potential hacking or unauthorized financial actions. The core problem is a fundamental misunderstanding: users treat the agent as though it were a trustworthy human assistant. “Yeah, it’s not a little human there,” Zaharia cautions.

His vision for AI’s most transformative application lies in research and engineering automation. Just as tools for vibe coding democratized software prototyping, he foresees a future where hallucination-free AI research assistants become universally accessible. “Not that many people need to build applications, but lots of people need to understand information,” he observes. The goal is to leverage AI’s unique strengths, moving beyond text and image analysis to interpret signals ranging from radio waves to molecular simulations. He is particularly excited by students using AI to model molecular-level changes and predict outcomes, a process he terms AI for search and research.

The ultimate shift, according to Zaharia, will come from designing systems that capitalize on what machines do best, freeing human intellect for higher-order tasks. This means creating AI that can diagnose a car’s mechanical issue from a sound, analyze non-traditional data streams, or accelerate scientific discovery, fundamentally changing how we interact with and interpret the world’s information.

(Source: TechCrunch)