Topic: AI accelerators


U.S. Senators Push Bill to Curb AI Chip Exports to China

The SAFE Chips Act of 2025 proposes to codify existing U.S. export restrictions on advanced AI chips for 30 months, preventing companies like Nvidia and AMD from selling their most powerful processors to China and other designated nations. The legislation defines restricted chips by mirroring cur...


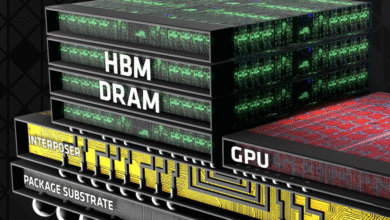

HBM4 Memory Spec Aims for Lower Costs, Not to Replace GDDR

The SPHBM4 memory standard aims to deliver HBM4-level bandwidth with a narrower 512-bit interface and organic substrate compatibility, targeting cost reduction and accessibility for specific applications, not gaming GPUs. It addresses HBM's capacity limitation by using a 4:1 serialization scheme ...


Score the ASRock Radeon RX 9070 XT for $599 MSRP Before It's Gone

The ASRock Challenger Radeon RX 9070 XT offers flagship-level gaming performance at a discounted price of $599, making it a strong value compared to more expensive competitors. Built on the Navi 48 GPU, it features 64 RDNA 4 compute units, 20 Gbps GDDR6 memory, and a 304W power ratin...


Nvidia's Q4: A Make-or-Break Moment for AI Hardware

Nvidia's upcoming quarterly report is a key indicator for the AI hardware sector. The company's dominant role in powering AI models has fueled its massive valuation, but the focus is now shifting to the sustainability of the broader AI investment cycle. Analysts expect another quarter of staggering...


TSMC's HBM4 Revolution: 3nm Dies to Triple Performance by 2027

HBM4 and HBM4E will dramatically transform high-bandwidth memory, promising to triple performance by 2027 through a 2048-bit interface and the use of advanced 3nm-class logic dies to meet AI and HPC demands. A key innovation is the shift to logic-process base dies, produced by partners like TSMC,...


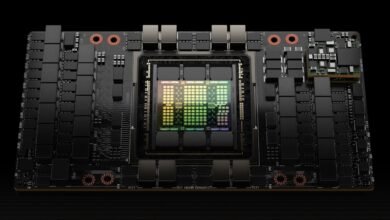

SK hynix Unveils HBM4: 2,048-Bit Interface for Next-Gen AI Accelerators

SK hynix has completed development of its next-generation HBM4 memory, which exceeds JEDEC performance standards by 25% and is aimed at future AI accelerators from companies like AMD and Nvidia. The new memory features a 2,048-bit interface, double the previous bus width, and supports a data transfer rat...


OpenAI Teams with Broadcom to Develop Custom AI Chips

OpenAI is partnering with Broadcom to develop custom AI chips, reducing reliance on Nvidia and enhancing performance for models like ChatGPT and Sora. The collaboration involves deploying 10 gigawatts of AI accelerators, highlighting the vast computational needs for training next-generation AI sy...


Micron, Samsung, SK hynix HBM Roadmaps: HBM4 and Beyond

High Bandwidth Memory (HBM) is critical for AI and high-performance computing, with industry leaders like Micron, Samsung, and SK hynix advancing next-gen HBM3E, HBM4, and HBM4E technologies to meet growing demands. HBM's ultra-wide interfaces (up to 2048-bit in HBM4) and vertical stacking (e.g.,...


Nvidia Boosts Open-Source AI with Acquisition and New Nemotron Models

Oracle Cloud Infrastructure has launched new A4 Standard instances powered by Ampere's AmpereOne M silicon, offering both virtual machine and bare metal configurations despite Oracle divesting its stake in Ampere. The A4 instances provide up to 35% higher core-for-core performance than the previo...
