Eye Tracking: The Key to Zuckerberg’s VR Vision

Summary

– Mark Zuckerberg’s 2014 acquisition of Oculus VR was based on the belief that virtual reality would become central to personal computing, a vision now materializing in 2026 with major OS updates from Google, Valve, and Apple for VR headsets featuring eye tracking.

– The article argues that Meta’s strategic failure to consistently implement eye tracking across its headsets, unlike competitors, has limited VR’s scale and contributed to its recent dramatic layoffs and course-correction.

– A core criticism is that Meta prioritized building a forced social metaverse (Horizon Worlds) over perfecting foundational technologies like eye tracking, a priority that pioneers like John Carmack warned was a misdirected “honeypot trap.”

– Eye tracking is presented as an essential interface technology for VR, analogous to a computer mouse, enabling precise input and advanced features that companies like Apple, Valve, and Google are now centering in their designs.

– The future of personal computing eyewear is depicted as a spectrum from fully immersive VR headsets to lightweight, display-free glasses that use eye and hand tracking for spatial input, a domain where Apple and Meta are taking divergent paths.

The trajectory of virtual reality is being fundamentally reshaped by a single, often overlooked technology: eye tracking. While major players like Apple, Google, and Valve are now embedding this capability into their latest headset designs, its absence from the core product strategy of one of VR’s earliest champions has forced that company into a significant pivot. A decade after a multibillion-dollar bet on the future of computing, the vision for a VR-dominated platform faces a critical reassessment, not due to a lack of ambition, but perhaps due to a misalignment between long-term vision and short-term execution.

Looking back over the last ten years, a pattern emerges. One key piece of hardware has been conspicuously missing from most mainstream headset designs, with a single, commercially underperforming exception. This omission isn’t a minor detail; it directly limits the scale and intuitive potential of virtual environments. The initial promise of immersive computing is now being realized by competitors who recognized that for a machine to understand user intent, it must first see what the user sees.

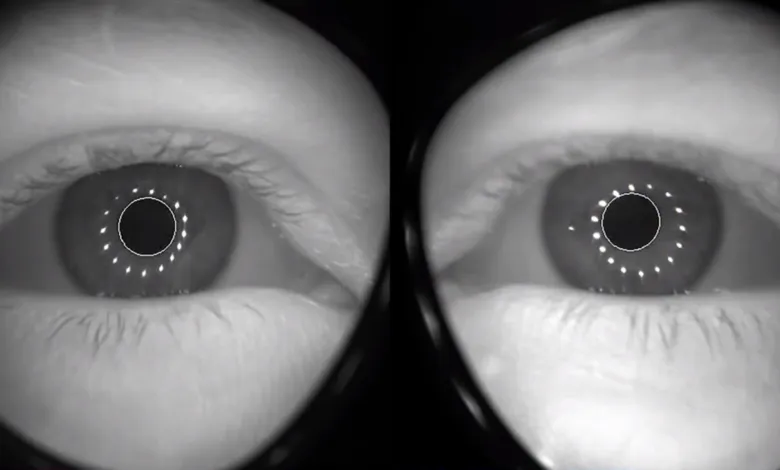

The transformative potential of this technology became clear years ago. Demos in 2017 revealed how tracking a user’s gaze could hand software designers a new kind of creative superpower. It promised a generation of experiences fundamentally different from anything before, where a system’s awareness of your focus could redefine interaction. Architecting an entire VR platform over a decade without a concrete plan for making eye tracking a default feature represents a profound strategic gap. It highlights the chasm between envisioning a future and building the foundational steps to get there.

A brief public comment in 2024 offered a glimpse of recognition. After trying a competitor’s device, a notable figure remarked on the quality of its eye tracking, acknowledging that his own company had the sensors in a previous product, removed them, and planned to bring them back. This small admission hinted at a dawning realization that the current path might be flawed. When a company discontinues a product line like 3DoF headsets, it signals a learned lesson. The same logical step should have followed the launch of a high-end device featuring eye tracking. Instead, years passed without a successor incorporating the technology, creating a vacuum that others rushed to fill.

The evidence now suggests that VR headsets which cannot follow the intent of your eyes are not serious contenders as foundational platforms. The latest devices from other industry giants are all centered on this technology, each for its own reasons. One company decided its VR operating system was ready for a consumer release in 2026, following another’s decision to launch a premium spatial computer in 2024. Both rely on eye tracking to perform essential functions for the user, from precise selection to driving realistic avatars.

For the social media giant turned metaverse aspirant, the last decade involved ramping up investments to build a complete computing platform. It started with acquiring a pioneering development team and establishing an ambitious research division. Technologies were built publicly, through shared research and sold products. Some solid ideas from this early period even found life elsewhere in the industry. However, attempts to force social network integration and advertising into VR were swiftly rejected by users, leading to a major rebranding effort and a new account system designed for a fresh start in headsets and glasses.

A standalone headset sold exceptionally well, supported by a curated store and advanced hand tracking. With no credible competition in the market, the future seemed aligned with a bold new vision. A high-end device with eye tracking was still on the horizon. Yet, warnings were voiced internally about the risks of focusing on grand architectural visions over tangible products that people actually want to use. Concerns were raised that years of effort and thousands of people could be spent on initiatives that don’t contribute meaningfully to how hardware is used today.

By 2026, after significant layoffs and a dramatic reshaping of the company around VR and AR, those warnings seemed prescient. Letting go of the majority of hired game developers and redirecting efforts toward a flagship social platform raises questions. Are popular games now a last-ditch effort to sustain that virtual world? A recent move to delist a major multiplayer title from a PC storefront, citing cheating concerns, coincided with the arrival of competing developer kits in the market. The misadventure appears to have been the attempt to force a social network onto technology that wasn’t ready, in a way users didn’t want, at the wrong time.

Instead of consistently advancing the core interface technology of eye tracking, the company doubled down on acquiring game studios and hiring developers skilled with specific controllers. Some decisions were influenced by unusual global circumstances that kept people at home near their devices. During a period when live sports were streamed to VR, unique attractions opened, and the first decent consumer eye tracking was demonstrated, decisions were made internally to ship headsets without this capability after including it just once. The reasons are known internally, but the outcome is a loss of the commanding lead purchased a decade ago. Leadership has since attempted to correct course by bringing in key talent from a rival.

The landscape now is different. The company faces a market where it may increase production for its non-VR smart glasses, while competitors actively ship or plan to ship VR headsets with integrated eye tracking. The technological continuum, from fully real to fully virtual environments, is being actively navigated. One competitor ships only a single, expensive headset with eye tracking, but it performs a clever trick. While the hardware is capable of full virtual reality, the software starts you in your real environment, allowing you to dial across the entire mixed reality spectrum. This device is rooted at the fully virtual end of the scale but lets you begin at the fully real end.

The question becomes what secures the opposite side of that spectrum: lightweight frames with no display. If a spatial computer represents one endpoint, then glasses without screens could function as a spatial input device, a universal remote of sorts. Imagine taking the sophisticated sensors for tracking hand and eye movements from a large headset and miniaturizing them into slim frames. Clear glasses could gather the same precise input without any visual overlay. The differentiating feature would be an impossibly advanced tool that feels like magic, allowing you to control other devices with gestures and gaze.

You could operate a tablet or television the same way you interact with a spatial computer’s menu, pinching and dragging in the open air. You could run a finger along any flat surface to create a virtual trackpad for the computer you’re looking at. You could even touch-type on any surface, a problem some engineers believe will be solved soon. In such a focused design, eyewear could conceptually replace the mouse, trackpad, and keyboard. Being display-free means you always see the real world, yet the glasses track your eye movements with the same precision as a fully enclosed headset.

In existing high-end headsets, eye targeting is used for selection, driving realistic avatars, and even powering external displays that show your eyes to people around you. This represents a massive investment in technology, weight, and cost to fully immerse a user. For many, this doesn’t seem like a mass-market necessity. Unless, that is, you had an experience years ago that instantly made you feel empowered within a virtual space. The reason VR headsets need eye tracking is analogous to why a computer needs a mouse. It is the fundamental way you indicate your intent within a graphical interface, even if you require another gesture to finalize the selection. It is the bridge between human attention and machine understanding.

(Source: UploadVR)