Microsoft’s Mico: The Dangers of Parasocial AI Relationships

Summary

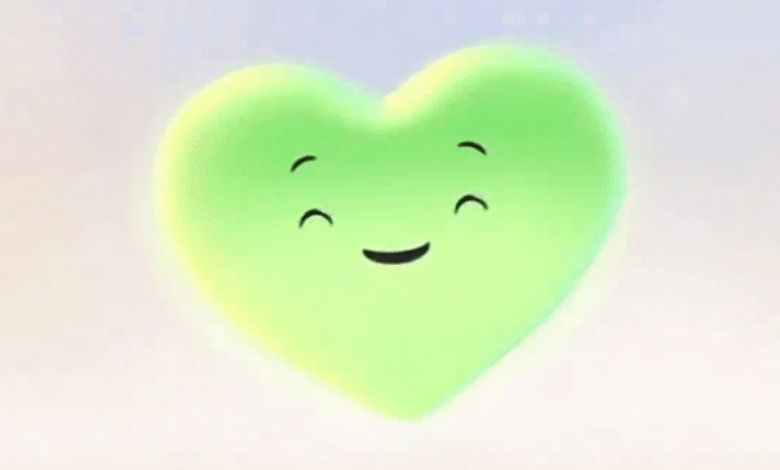

– Microsoft has introduced Mico, a new animated avatar for Copilot’s voice mode as part of a human-centered rebranding of its AI efforts.

– Mico is designed to support Microsoft’s goal of creating AI that enhances human connection rather than increasing screen time or engagement.

– The character has been compared to Clippy, Microsoft’s 1990s Office assistant, and includes an Easter egg that transforms Mico into Clippy.

– Unlike Clippy, which focused on task assistance, Mico aims to strengthen the parasocial relationships that users develop with large language models.

– Parasocial relationships, a concept from the 1950s, describe one-sided feelings of intimacy between an audience and a media figure through repeated exposure.

Microsoft is introducing a new animated avatar named Mico for its Copilot voice mode, marking a significant step in the company’s human-centered approach to artificial intelligence. This blob-like character represents Microsoft’s broader initiative to create technology that serves people rather than prioritizing screen time or engagement metrics. According to the company, the goal is to develop AI that enhances daily life and fosters deeper human connections, rather than AI that keeps users glued to their devices.

The resemblance between Mico and Clippy, the iconic animated paperclip from Microsoft Office in the 1990s, has not gone unnoticed. Microsoft has even included a playful Easter egg that lets users transform Mico into a Clippy avatar. Jacob Andreou, Microsoft AI Corporate VP, humorously remarked that “Clippy walked so that we could run,” acknowledging the legacy of the earlier assistant while highlighting Mico’s more advanced capabilities.

However, the comparison reveals a crucial distinction. Clippy primarily functioned as a tool to guide users through software tasks, offering prompts like, “It looks like you’re writing a letter, would you like some help?” In contrast, Mico appears designed to cultivate parasocial relationships: the one-sided emotional bonds that users form with AI entities. This shift reflects how large language models are increasingly positioned not just as helpers, but as companions.

The concept of parasocial relationships dates back to the 1950s, when researchers first described the one-sided intimacy audiences can feel toward media figures or celebrities. Through repeated exposure, people begin to perceive these figures as friends, despite the lack of any real reciprocal connection. Mico’s introduction taps directly into this dynamic, encouraging users to see the AI not merely as a tool, but as a relatable presence.

As AI becomes more conversational and visually engaging, the line between functional assistance and simulated friendship continues to blur. Microsoft’s emphasis on “human-centered” design suggests a conscious effort to make AI interactions feel more personal and supportive. Yet this very approach raises important questions about the psychological impact of forming attachments to non-human entities.

While Clippy aimed to make software more approachable, Mico seems tailored to meet emotional and social needs. For some users, this could provide comfort or reduce loneliness. For others, it might reinforce isolation by replacing genuine human interaction with AI companionship. Understanding these implications is essential as companies like Microsoft continue integrating emotionally intelligent features into their platforms.

The evolution from Clippy to Mico illustrates how far AI has come, and how much further it may go in simulating human-like presence. Whether these developments ultimately strengthen human bonds or create new forms of digital dependency remains an open and deeply relevant question.

(Source: Ars Technica)