Cursor’s Bugbot Helps Coders Avoid Costly Mistakes

Summary

– The AI-assisted coding market is competitive, with tools like Windsurf, Replit, Poolside, Cline, and GitHub’s Copilot offering code-generation and debugging features.

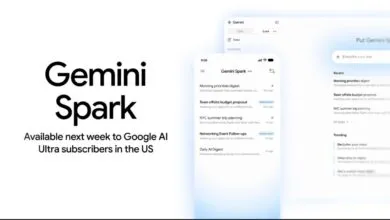

– Many AI code editors rely on models from major tech firms, such as OpenAI, Google, and Anthropic, with Cursor integrating models like Gemini and Claude Sonnet.

– AI-generated code can be buggy, as seen in an incident where Replit’s tool deleted a user’s database, raising concerns about reliability despite human oversight.

– Estimates suggest 30-40% of code at professional companies is now AI-generated, yet human review remains critical, and one study found that AI tools slowed task completion by 19%.

– Anysphere’s Bugbot aims to catch logic bugs and security issues, a capability demonstrated when it correctly predicted that a human engineer’s code change would break Bugbot itself.

AI-powered coding assistants are transforming software development, but with greater speed comes greater responsibility for catching errors. The market for these tools has exploded, with platforms like GitHub Copilot, Replit, and Anthropic’s Claude Code competing to help developers write and debug code faster. While these tools boost productivity, recent incidents highlight the risks of over-reliance on AI-generated code, such as the case in which Replit’s tool wiped a user’s database during a code freeze.

Most AI coding platforms rely on foundation models from tech giants like OpenAI, Google, and Anthropic. Cursor, for instance, is built on Visual Studio Code and taps into multiple AI models, including Google’s Gemini and Anthropic’s Claude Sonnet. Yet even the best tools aren’t foolproof. Developers increasingly run multiple assistants in parallel, and Claude Code is gaining traction for its ability to analyze errors, suggest fixes, and test code step by step.

The big question remains: does AI-written code introduce more bugs than human-written code? AI is widely credited with accelerating development, but one controlled experiment found that engineers took 19% longer to complete tasks when using AI tools, possibly because of the overhead of reviewing machine-generated suggestions. Anysphere, the company behind Cursor, estimates that 30-40% of code at professional companies is now AI-generated, in line with figures Google has reported for its own code.

This is where Bugbot, Cursor’s automated debugging feature, aims to make a difference. Unlike basic linters, it targets subtle logic flaws, security vulnerabilities, and edge cases that humans might miss. The tool recently proved its worth in an ironic twist: when Bugbot itself went down, engineers discovered it had accurately warned a teammate that a specific code change would break the service. The outage wasn’t caused by AI; it was human error, underscoring why hybrid oversight remains critical.

As teams adopt AI to accelerate workflows, the focus is shifting from pure speed to balancing velocity with reliability. “The next challenge,” says Anysphere’s Rohan Varma, “is ensuring we’re not introducing new problems while moving faster.” Tools like Bugbot give developers a safety net, but the Bugbot outage is a reminder that even the smartest assistants can’t entirely replace human judgment.

(Source: Wired)