Prophet for Non-Linear SEO Seasonality Modeling

▼ Summary

– SEO forecasting is complex because search behavior is non-linear and data is distorted by seasonality, anomalies, SERP changes, and measurement issues.

– Baseline models (linear regression, exponential smoothing, moving averages) are useful for descriptive purposes but not reliable for decision-making in volatile search environments.

– LLMs are unsuitable for SEO forecasting because they assume linear data and optimize for linguistic plausibility rather than statistical accuracy.

– A robust forecasting approach requires cleaning data by replacing anomalies with expected values (trend + seasonal) and using models like Prophet to handle seasonality and external regressors.

– The article demonstrates a Python workflow using STL decomposition for anomaly detection and Prophet to produce a 90-day click forecast adjusted for known data distortions like the Google Search Console logging issue.

Forecasting SEO performance requires estimating what’s next based on what’s already happened, but search behavior rarely follows a clean, predictable path. Seasonal demand, sudden anomalies, shifting SERP layouts, and data tracking errors can all warp your numbers, making it nearly impossible to generate reliable forecasts with basic methods.

That’s why relying on linear regression, exponential smoothing, or asking a large language model to simply project trends from past data often falls short. To build forecasts that actually hold up, you need to account for seasonality, detect anomalies, and use models designed for non-linear search data.

Decision-makers depend on forecasts to justify budgets and synchronize expectations across teams. Stakeholders want forward-looking estimates, finance needs revenue projections, and roadmaps require clarity on expected returns. Yet the real value of forecasting has eroded significantly.

AI Mode and AI Overviews have created a major disconnect between clicks and impressions. LLM-driven scrapers have inflated bot activity, skewing impression data in reporting tools. On top of that, Google reported a logging issue affecting Search Console impression data starting in May 2025. As a result, many forecasts now serve more as reassurance than actionable guidance. They protect decision-makers from scrutiny while failing to reflect the business’s actual operating reality.

From a data analytics perspective, if search performance followed a normal distribution, you could confidently use linear regression, exponential smoothing, or a simple moving average. But the typical SEO forecast still rests on assumptions that don’t hold in organic search: stable trends, normal distributions, and consistent input-output relationships.

Today’s AI landscape forces a fundamental rethink. Search outcomes are becoming highly volatile and probabilistic. A 10% increase in effort no longer guarantees a proportional 10% boost in traffic. Several structural factors explain this shift: long-tail traffic distribution (a few pages drive most traffic), binary user behavior (metrics like CTR hinge on yes/no interactions), and zero-click search impact (high rankings don’t guarantee clicks as more queries are resolved directly in the SERP).

If you must forecast, do it properly. Baseline models still have their place: linear regression for directional trends, exponential smoothing for short-term adjustments, and moving averages for noise reduction. But treat them as descriptive tools, not decision-making engines. To make forecasting genuinely useful, you need to go further.

Why LLMs aren’t the answer to SEO forecasting

LLMs and MCP connections only amplify the inefficiencies already mentioned. Two structural problems stand out. First, they assume data behaves linearly. Pre-configured prompts implicitly treat SEO data as smooth and continuous, ignoring the seasonality, cyclical demand, and structural breaks that dominate search behavior. Second, they optimize for plausibility, not statistical accuracy. LLMs are probabilistic text generators, not forecasting models. They produce confident but ungrounded outputs that lack the business and domain context needed to interpret anomalies. No matter how well-engineered the prompt, the system can still hallucinate because it prioritizes linguistic plausibility over statistical validity. Forecasting requires explicit handling of seasonality, non-linearity, and critical interpretation. These analytical responsibilities can’t be abstracted away through prompting alone.

How to do an SEO forecast that accounts for seasonal effects

Asking the right questions is often the hardest part. SEO forecasts are usually requested by enterprise stakeholders or pushed by agencies during pitches. The subject typically revolves around one of these search indicators: clicks, impressions, rankings, or CTR. For this article, we’ll use Python to forecast synthetic clicks for a fictitious website influenced by seasonal demand.

Start by retrieving historical data from Google Search Console via the API or Google BigQuery. Assess the tradeoff between cost, resources, time, and data sampling. Using an API to pull as much historical data as possible, for example through Search Analytics for Sheets, can be effective. Set up a Google Colab notebook, install dependencies, load your dataset with date and clicks columns, and convert the date column into a datetime index. Enforce daily frequency and fill missing data gaps using interpolation.

After preprocessing, assess stationarity. The smaller the p-value from the Augmented Dickey-Fuller test (below 0.05), the more confident you can be that patterns aren’t random. If the p-value isn’t convincing, the series isn’t stationary, and seasonality likely plays a role. Assuming SEO data is stationary is a flawed heuristic. Instead, decompose the time series and model seasonality using STL decomposition. This separates true performance trends from recurring weekly or monthly cycles.

Anomalies often appear as large spikes in the residuals plot. Detecting them isn’t the same as removing them. In non-stationary time series, variability changes over time, and outright removal breaks the time index and biases seasonal impact. A more robust approach is to replace anomalies with expected values, preserving the time series rows and protecting the forecasting baseline.

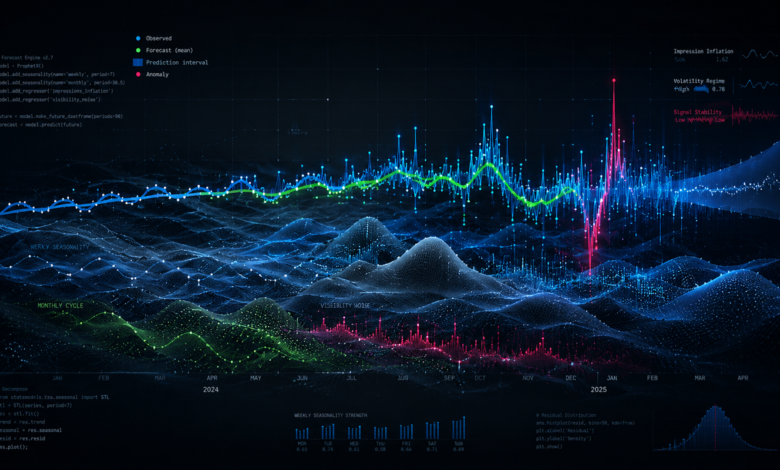

With a clean dataset, you can apply Prophet, built by Meta for real-world data. It handles multiple seasonalities, missing data, and abrupt shifts. Using an anomaly-free dataset with a preserved time index, Prophet can forecast click performance over the next 90 days. You can also introduce a regressor to flag external factors like Google core updates or measurement issues, such as the Search Console logging issue that inflated impressions from May 2025 to April 2026.

The output includes a line chart and a tabular forecast with lower and upper bounds representing the confidence interval. This range shows where clicks are expected to fall over the forecast horizon.

SEO forecasting isn’t about projecting neat, linear trends. It’s about understanding messy, non-stationary data shaped by seasonality, anomalies, and external shocks. By cleaning data properly, modeling seasonality, and accounting for real-world distortions like SERP changes and tracking issues, forecasts become less about false certainty and more about informed direction. The goal isn’t perfect accuracy, but a robust approach to forecasting non-stationary time series is essential for framing stakeholder expectations within a realistic range and making better decisions.

(Source: Search Engine Land)