AI Search Reputation Risks: How to Protect Your Brand

Summary

– AI search tools like Google AI Overview now synthesize multiple sources into single answers, often flattening nuance and overrepresenting certain viewpoints.

– This shift enables “zero-click” behavior where users accept AI answers without visiting original sources, reducing the influence of traditional high search rankings.

– AI narrative formation involves pooling sources from platforms like Reddit and social media, weighting signals by volume over authority, and compressing information into simplified summaries.

– These AI-generated narratives are reinforced as they are shared and repeated online, becoming inputs for future AI outputs and amplifying reputational risks.

– Effective reputation management now requires auditing AI outputs, correcting misinformation at its source, and strengthening high-quality, structured content for AI systems to use.

The digital landscape for brand reputation has fundamentally changed. AI search platforms like Google’s AI Overview and ChatGPT are now the primary gatekeepers of information for millions. Instead of presenting a list of links, these systems synthesize content from across the web into a single, authoritative-sounding answer. This shift creates a critical new vulnerability: a brand’s carefully managed online presence can be instantly overridden by an AI-generated narrative that may be incomplete, outdated, or misleading. Visibility no longer equates to control, making traditional SEO tactics insufficient for protecting how a company is perceived.

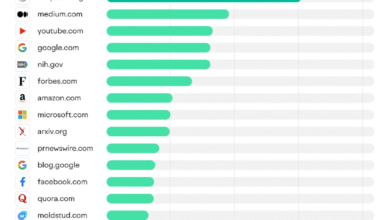

The way these systems assemble an answer, which we can term AI narrative formation, operates in four stages. First, AI models engage in source pooling, gathering data from a vast array of places. While they may access reputable sites, they frequently pull just as heavily from social media, forums like Reddit, and review platforms. Next comes signal weighting, where volume often trumps veracity. A handful of negative comments on a popular thread can outweigh a library of positive, verified reports. The system then applies narrative compression, distilling complex realities into a brief summary where nuance is lost and outlier opinions can become defining themes. Finally, continued reinforcement occurs as these AI summaries are shared across the internet, creating a feedback loop where the narrative solidifies with each repetition.
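The signal-weighting stage can be illustrated with a toy calculation. The sketch below is not how any real AI search system scores sources; the `Mention` class, the `authority` scores, and the `volume` counts are all hypothetical values chosen to show how the same pool of sources can yield opposite conclusions depending on whether repetition or credibility carries the weight.

```python
from dataclasses import dataclass


@dataclass
class Mention:
    source: str       # where the mention appeared (illustrative label)
    sentiment: int    # +1 for positive, -1 for negative
    authority: float  # hypothetical trust score between 0 and 1
    volume: int       # hypothetical count of replies, upvotes, or repeats


def volume_weighted(mentions):
    """Average sentiment where each mention counts in proportion to its volume."""
    total = sum(m.volume for m in mentions)
    return sum(m.sentiment * m.volume for m in mentions) / total


def authority_weighted(mentions):
    """Average sentiment where each mention counts in proportion to its authority."""
    total = sum(m.authority for m in mentions)
    return sum(m.sentiment * m.authority for m in mentions) / total


# Assumed example pool: two credible positive sources, one busy negative thread.
mentions = [
    Mention("verified industry report", +1, 0.90, 3),
    Mention("regulator filing", +1, 0.95, 1),
    Mention("old forum thread", -1, 0.20, 40),
]

print(f"volume-weighted sentiment:    {volume_weighted(mentions):+.2f}")
print(f"authority-weighted sentiment: {authority_weighted(mentions):+.2f}")
```

With these assumed numbers, the volume-weighted score comes out negative while the authority-weighted score stays positive, which is the imbalance the paragraph above describes.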

Consider the case of a financial services firm we’ll call Company X. For years, its search engine results pages showed a strong reputation with positive reviews and a professional website. The introduction of Google’s AI Overview altered that picture dramatically. When users asked for opinions on the company, the AI surfaced an old Reddit thread filled with customer service complaints that had been resolved nearly a decade prior. By compressing these outdated sources, the AI presented a “mixed reviews” narrative that instantly reshaped public perception, demonstrating how historical data can be misrepresented as current reality.

This example highlights why AI search amplifies reputational risk. Previously, damaging content often required effort to find. Now, large language models can surface defamatory or incorrect claims instantly. While AI hallucinations are a known issue, confidently presented misinformation is difficult for the average user to spot. The resulting snowball effect is powerful, as these narratives are screenshotted and shared, creating a wave of negative sentiment that reputation managers must then confront. A central truth of modern online reputation management is that the most repeated claim, not the most accurate one, tends to dominate.

Protecting a brand requires a proactive, structured audit of these AI narratives. Begin by mapping queries, asking AI platforms direct questions about your brand or its executives to see what answers they generate. Next, capture the outputs to identify the specific claims being made. Then, review the sources the AI relied upon, assessing their quality, recency, and context. The fourth step is to analyze the narrative gap between the AI’s summary and the factual reality. Finally, execute a strategy of correcting and replacing sources by directly addressing false claims on the platforms where they live and flooding the ecosystem with accurate, structured content.
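One way to keep such an audit consistent is to record each query, the claims in the AI answer, and the cited sources in a simple structure. The following is a minimal sketch under stated assumptions: the `AuditRecord` and `Source` classes, the three-year staleness threshold, and the example URLs are all hypothetical, and the answers are assumed to be captured by hand rather than pulled from any platform's API.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import date


@dataclass
class Source:
    url: str
    published: date  # publication date as best determined by the auditor


@dataclass
class AuditRecord:
    query: str                 # step 1: the question asked
    platform: str              # which AI tool answered
    claims: list[str]          # step 2: claims found in the captured answer
    sources: list[Source] = field(default_factory=list)  # step 3: cited sources


def stale_sources(record: AuditRecord, today: date, max_age_years: int = 3) -> list[Source]:
    """Return cited sources older than the (assumed) age threshold."""
    cutoff_days = max_age_years * 365
    return [s for s in record.sources if (today - s.published).days > cutoff_days]


def narrative_gap(record: AuditRecord, verified_facts: set[str]) -> list[str]:
    """Step 4: claims in the AI answer that are not in the verified fact list."""
    return [c for c in record.claims if c not in verified_facts]


# Hypothetical record for the Company X scenario described earlier.
record = AuditRecord(
    query="Is Company X trustworthy?",
    platform="example AI search tool",
    claims=["has mixed reviews", "offers financial services"],
    sources=[
        Source("https://forum.example.com/thread/123", date(2015, 6, 1)),
        Source("https://www.example.com/about", date(2024, 1, 15)),
    ],
)

old = stale_sources(record, today=date(2025, 1, 1))
gap = narrative_gap(record, verified_facts={"offers financial services"})
print([s.url for s in old])
print(gap)
```

The stale sources and unverified claims flagged here are the candidates for step five: correcting the claim where it lives or publishing accurate content that can replace it.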

This new environment demands a paradigm shift. Reputation is no longer just an input to manage; it is an output to shape. The goal is no longer simply to rank highly, but to directly influence the answers AI systems deliver. This requires a focus on strengthening the inputs these models use. Brands must prioritize publishing high-quality first-party content, earning credible third-party mentions, and actively reinforcing positive customer reviews. Addressing misinformation directly, improving structured data markup, and ensuring accuracy on key platforms like Wikipedia are now non-negotiable components of a robust defense. In the age of AI search, a brand’s story is being written in real time by algorithms, making active narrative management a critical business imperative.
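As an illustration of the structured data markup mentioned above, the sketch below builds a basic schema.org `Organization` description as JSON-LD. The helper name `organization_jsonld`, the company name, and the URLs are placeholders, and whether any particular AI system reads this markup is an assumption rather than something the article confirms.

```python
import json


def organization_jsonld(name, url, same_as):
    """Build a schema.org Organization description as a JSON-LD dictionary."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        # sameAs lists official profiles that refer to the same organization
        "sameAs": list(same_as),
    }


# Placeholder values for illustration only.
markup = organization_jsonld(
    name="Company X",
    url="https://www.example.com",
    same_as=["https://www.linkedin.com/company/example"],
)

# JSON-LD is typically embedded in a page inside a script tag of this type.
script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(markup, indent=2)
    + "\n</script>"
)
print(script_tag)
```

Keeping the name, URL, and official profile links consistent across first-party pages gives automated systems a single, unambiguous description of the brand to draw from.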

(Source: Search Engine Land)