Google’s New Feature Translates Languages Through Your Headphones

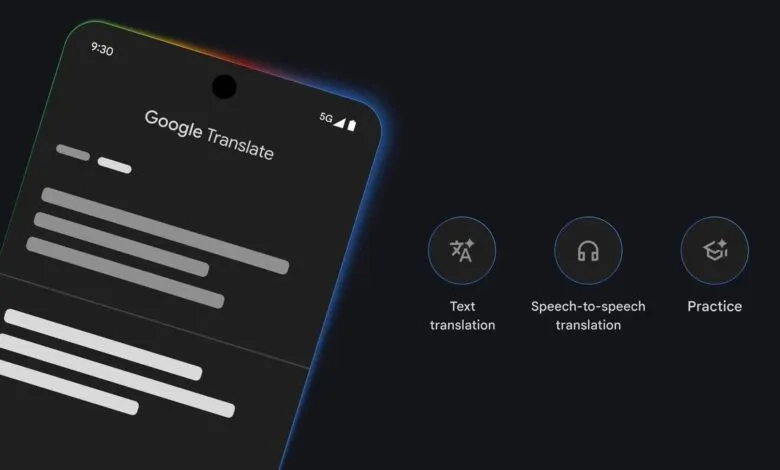

Imagine a world where language barriers simply dissolve during conversation. Google is bringing this closer to reality with a new feature that delivers spoken translations directly through your headphones. The tool, currently in testing, aims to translate speech in real time so that users can understand and be understood in a foreign language without looking at a screen.

The feature builds on Google’s existing translation technology but delivers the result by ear rather than on a screen. Users would wear compatible headphones, and speech in a foreign language would be translated into their native tongue in near real time. Their own speech could likewise be translated for the person they are speaking with, allowing a natural back-and-forth dialogue. This hands-free, eyes-free approach could change how people handle travel, international business, and everyday interactions.

While the core translation engine is well-established, integrating it smoothly into a live audio stream presents significant technical challenges. The system must process speech, translate it accurately, and play it back with minimal delay to keep a conversation flowing naturally. Background noise, accents, and overlapping speech add complexity that the developers are actively working to overcome. The success of the feature will depend heavily on its speed and accuracy in diverse, real-world environments.

Privacy and data handling are also critical considerations. Since the translation process likely involves sending audio data to the cloud for processing, users will want clear assurances about how their conversations are handled and stored. Google will need to be transparent about its data policies to build trust and encourage widespread adoption of this personal translation tool.

The potential applications are vast. Tourists could navigate foreign cities with ease, asking for directions or ordering food without confusion. Professionals could engage in meetings with international colleagues more effectively. Immigrants and language learners might find it an invaluable aid for daily communication and practice. By moving translation from the screen to the ear, Google is attempting to make cross-language interaction as simple as having a normal conversation.

This development is part of a broader trend toward more ambient and integrated computing, where technology assists us without demanding our constant visual attention. As the feature progresses from testing to a public release, its performance, supported languages, and compatibility with various headphone models will be key details to watch. If successful, it could mark a significant step toward a more connected and comprehensible world.

(Source: CNET)