Switching to Google’s Offline AI for 24 Hours: My Review

Summary

– Google AI Edge Gallery is an app that runs large language models entirely on-device, requiring no internet connection after setup.

– The app provides total privacy, as all data and processing remain on the user’s phone, which creates a distinct psychological feeling of safety.

– Its performance is capable but slower than cloud-based AI, with a smaller context window and weaker handling of complex tasks, making it less suitable for power users.

– A key limitation is the lack of persistent conversation memory; closed chat threads do not retain full history like cloud services do.

– The app includes “Agent Skills,” a set of offline utility tools like a restaurant picker and QR code generator that function without a data connection.

The promise of completely private AI has long felt like a distant future, but a recent 24-hour experiment with Google’s AI Edge Gallery suggests it’s closer than we think. As a daily user of cloud-based assistants, I decided to see if an on-device large language model could handle my workflow without ever connecting to the internet. The experience revealed a compelling, though limited, new paradigm for artificial intelligence.

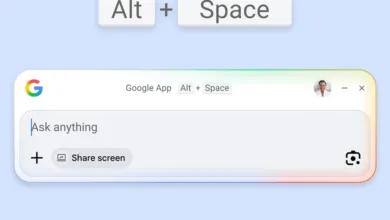

Setup was refreshingly straightforward. After downloading the app, I selected the Gemma 4 E2B model, a 2.5GB file that resides entirely on the phone. The immediate absence of login screens or data-sharing agreements was a stark contrast to typical services. I toggled on Thinking Mode to see the AI’s reasoning, switched on Airplane Mode, and began my offline trial.

The most profound difference was psychological. There’s a distinct quietness to local AI processing that’s hard to quantify. Knowing that every query, from a messy first draft to a sensitive personal question, never leaves the physical device creates a powerful sense of security. The app also offers practical offline Agent Skills, turning it into a handy toolkit. I used features like a restaurant randomizer for indecisive moments and a QR code generator, tasks that normally require a data connection.

However, performance is a clear trade-off. On-device AI is not yet a cloud replacement. Responses were noticeably slower, especially with Thinking Mode active, as my phone’s processor handled all the computation. While it managed simple prompts well, complex creative tasks felt constrained. The model’s 32K-token context window is modest compared to cloud offerings, making extended conversations less fluid. For power users, the speed and quality of output simply don’t match services like ChatGPT or Claude.
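To make the context-window limit concrete, here is a minimal Python sketch of what a fixed token budget means for a long chat: once the conversation outgrows the budget, the oldest turns have to be dropped. This is an illustration only, not how AI Edge Gallery is implemented. The function names are hypothetical, and the four-characters-per-token figure is a rough rule of thumb rather than the model’s real tokenizer.

```python
# Illustrative sketch: keep only the most recent turns that fit a token budget.
CONTEXT_BUDGET = 32_000   # the 32K figure quoted in the review
CHARS_PER_TOKEN = 4       # assumed rule of thumb, NOT the model's real tokenizer


def estimate_tokens(text: str) -> int:
    """Approximate a token count from character length (rounded up, minimum 1)."""
    return max(1, -(-len(text) // CHARS_PER_TOKEN))


def trim_history(turns: list[str], budget: int = CONTEXT_BUDGET) -> list[str]:
    """Return the most recent run of turns whose estimated tokens fit in budget."""
    kept: list[str] = []
    used = 0
    for turn in reversed(turns):      # walk from newest to oldest
        cost = estimate_tokens(turn)
        if used + cost > budget:
            break                     # this turn and everything older is dropped
        kept.append(turn)
        used += cost
    kept.reverse()                    # restore chronological order
    return kept
```

Under these assumptions, the smaller the budget, the sooner early parts of a conversation fall out of view, which is one plausible reason long chats feel less fluid on a 32K model than on cloud models with larger windows.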

Another significant limitation is memory. Conversation history does not persist the way it does in cloud services. If you close a chat thread, the full dialogue isn’t kept for long-term reference, which is a major drawback for anyone who revisits old prompts. The cloud’s seamless syncing and vast memory remain superior.

For those curious to try it, the process is simple. Download the AI Edge Gallery app, install the recommended Gemma model, and enable Thinking Mode in the settings. The real test is switching to Airplane Mode to experience truly offline interaction. Exploring the Agent Skills section reveals the utility-focused potential beyond basic chat.

After a full day, could I abandon cloud AI? Not entirely. For intensive research, rapid output, and cross-device workflows, server-based models still dominate. Storage is also a real consideration, since the model alone takes up 2.5GB on the phone. Yet this experiment proved local AI is now a usable tool. It excels at private brainstorming, sensitive queries, and offline productivity tasks, offering a tangible glimpse into a more self-contained and secure digital future.

(Source: Tom’s Guide)