Machine-Readable Brands Unlock Agentic AI Discovery

Summary

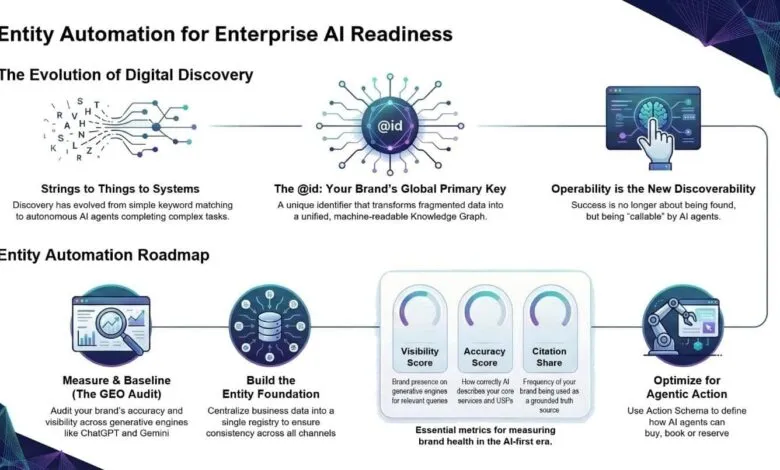

– Websites are now data sources for AI agents, shifting the key metric from human visits to whether AI uses and cites the content.

– Brands must structure their websites as machine-readable knowledge systems using schema and unique identifiers (@id) to be understood by AI.

– A consistent @id for core business entities acts as a global primary key, preventing data fragmentation and allowing AI to build an accurate knowledge graph.

– An entity automation lifecycle involves auditing AI visibility, ensuring content is crawlable, deploying schema correctly, and enabling AI-driven transactions.

– Ongoing monitoring of schema health and accuracy is required to maintain visibility as AI increasingly answers queries and completes actions without directing traffic to websites.

The digital landscape has fundamentally shifted. Artificial intelligence is no longer just a tool for users; it is becoming the primary user itself. With the rise of agentic commerce protocols like Google’s UCP and OpenAI’s ACP, the traditional website visit is becoming optional. The new imperative for every brand is to build a presence that is equally optimized for human visitors and autonomous AI agents. Success is no longer measured by traffic but by whether these systems accurately understand and use your content.

We are witnessing a transition from a web of pages to a web of entities and knowledge graphs. AI interprets relationships and concepts, not static HTML. To survive, your website must evolve into a structured knowledge system, powered by comprehensive schema markup and clear entity mapping. If an AI agent cannot definitively understand your brand, its products, and its capabilities, you effectively do not exist in the emerging agentic economy.

This evolution marks the third stage of digital discovery. We moved from the keyword era, focused on string matching and text density, to the semantic era, which recognized entities as persistent concepts. Now we are in the agentic era, where AI consumes structured data on availability, inventory, pricing, and protocols to act autonomously. In this new paradigm, your brand’s entities function as its machine-readable API. The goal is no longer mere discoverability but operability: enabling AI not just to find you but to complete a transaction.

Building the Foundational Layer: Identity Resolution with @id

For machines to interact with your brand without error, they need an unambiguous technical foundation. The cornerstone of this is the unique identifier (@id). In the agentic web, this @id acts as a global primary key. It transforms isolated snippets of JSON-LD into consistent, reconcilable data that search and AI engines can use to construct a coherent knowledge graph for your brand.
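As a minimal sketch of @id acting as a primary key, the snippet below builds a small JSON-LD @graph in which the product points at its brand and manufacturer by @id instead of repeating them. The domain, entity names, and the "canonical URL plus fragment" naming pattern are hypothetical choices for illustration, not a required convention.

```python
import json

# Hypothetical canonical base URL; substitute your own domain.
BASE = "https://www.example.com"


def entity_id(path: str, fragment: str) -> str:
    """Build a stable @id: a canonical URL plus a fragment naming the entity."""
    return f"{BASE}{path}#{fragment}"


ORG_ID = entity_id("/", "organization")
BRAND_ID = entity_id("/brands/acme-outdoor/", "brand")
PRODUCT_ID = entity_id("/products/trail-tent-2p/", "product")

graph = {
    "@context": "https://schema.org",
    "@graph": [
        {"@type": "Organization", "@id": ORG_ID, "name": "Example Corp", "url": f"{BASE}/"},
        {"@type": "Brand", "@id": BRAND_ID, "name": "Acme Outdoor"},
        {
            "@type": "Product",
            "@id": PRODUCT_ID,
            "name": "Trail Tent 2P",
            # References point at @id values instead of repeating the entity,
            # so every page that mentions the brand resolves to the same node.
            "brand": {"@id": BRAND_ID},
            "manufacturer": {"@id": ORG_ID},
        },
    ],
}

print(json.dumps(graph, indent=2))
```

Because every page emits the same @id strings, a consumer can join these nodes with markup from other pages the way a database joins rows on a key.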

Assigning persistent, canonical URLs to every core entity (your parent company, individual brands, headquarters, leadership, and entire product catalog) creates a robust entity layer across the digital ecosystem. This allows AI systems to consistently identify, connect, and reason about your business. As the industry shifts, with an estimated 80% to 85% of AI workloads moving to inference at scale, this entity layer becomes non-negotiable infrastructure: it keeps answers accurate across millions of real-time interactions.

The @id serves as the critical reference anchor. It allows external systems to weave disparate pieces of brand information into a single, trustworthy representation. Without a consistent @id, AI agents face entity tension: they are forced to guess which of several fragmented data points is correct. This reduces your brand from a verified fact to a mere probability.
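To make "entity tension" concrete, here is a hedged sketch of how a consumer might reconcile JSON-LD fragments scraped from different pages: fragments sharing an @id merge into one node, and properties whose values disagree are flagged. The function name `merge_by_id`, the "first value wins" rule, and the sample data are all hypothetical.

```python
from collections import defaultdict


def merge_by_id(fragments):
    """Merge JSON-LD node fragments that share an @id into one node per entity.

    Returns (merged, conflicts): merged maps each @id to a single node, and
    conflicts maps an @id to the properties that fragments disagree on, i.e.
    the "entity tension" an agent would otherwise have to guess through.
    """
    merged = {}
    conflicts = defaultdict(set)
    for frag in fragments:
        node_id = frag.get("@id")
        if node_id is None:
            continue  # nothing to reconcile against without an @id
        node = merged.setdefault(node_id, {"@id": node_id})
        for key, value in frag.items():
            if key not in node:
                node[key] = value
            elif node[key] != value:
                # First value wins; record the disagreement for review.
                conflicts[node_id].add(key)
    return merged, {k: sorted(v) for k, v in conflicts.items()}


ORG_ID = "https://www.example.com/#organization"  # hypothetical @id
fragments = [
    {"@id": ORG_ID, "@type": "Organization", "name": "Example Corp"},  # home page
    {"@id": ORG_ID, "telephone": "+1-555-0100"},                      # contact page
    {"@id": ORG_ID, "name": "Example Corporation"},                   # stale page
]
merged, conflicts = merge_by_id(fragments)
print(merged[ORG_ID])
print(conflicts)
```

The shared @id lets the telephone number from one page and the name from another combine cleanly; only the genuinely contradictory name is surfaced. A production registry would resolve conflicts by source authority rather than by order.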

The solution is a centralized entity layer, a single source of truth that powers all owned channels. This involves several key practices:

- Centralizing core business information in one authoritative registry, pulling from verified sources like Google Business Profile.

- Connecting verified social profiles automatically to reinforce entity disambiguation.

- Supporting organizational hierarchies so updates to a parent company automatically propagate to all related child entities via the @id.

- Documenting your brand’s entity lineage (which entities own, operate, or belong to which) in a machine-understandable structure.
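The practices above can be sketched as a tiny in-memory registry: each entity is stored once under its @id, verified profiles become sameAs links, and child entities inherit shared fields from their parent at render time, so a single parent update propagates everywhere. The class names, the `shared` field mechanism, and the sample profile URL are hypothetical; a real registry would sit on a database and pull from verified sources.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Entity:
    entity_id: str                                         # the canonical @id
    schema_type: str
    name: str
    parent_id: Optional[str] = None
    same_as: List[str] = field(default_factory=list)       # verified profiles
    shared: Dict[str, str] = field(default_factory=dict)   # fields children inherit


class EntityRegistry:
    """Single source of truth; every channel renders from this registry."""

    def __init__(self) -> None:
        self._entities: Dict[str, Entity] = {}

    def add(self, entity: Entity) -> None:
        self._entities[entity.entity_id] = entity

    def resolved_fields(self, entity_id: str) -> Dict[str, str]:
        # Walk up the parent chain so an update on the parent reaches every
        # child; a child's own values override inherited ones.
        entity = self._entities[entity_id]
        inherited = self.resolved_fields(entity.parent_id) if entity.parent_id else {}
        return {**inherited, **entity.shared}

    def to_jsonld(self, entity_id: str) -> dict:
        e = self._entities[entity_id]
        node = {"@type": e.schema_type, "@id": e.entity_id, "name": e.name}
        node.update(self.resolved_fields(entity_id))
        if e.parent_id:
            node["parentOrganization"] = {"@id": e.parent_id}
        if e.same_as:
            node["sameAs"] = list(e.same_as)
        return node


registry = EntityRegistry()
parent = Entity(
    "https://www.example.com/#organization", "Organization", "Example Corp",
    same_as=["https://www.linkedin.com/company/example-corp"],  # hypothetical profile
    shared={"email": "support@example.com"},
)
store = Entity(
    "https://www.example.com/stores/austin/#store", "LocalBusiness", "Example Corp Austin",
    parent_id=parent.entity_id,
)
registry.add(parent)
registry.add(store)

parent.shared["email"] = "help@example.com"  # one update at the parent...
print(registry.to_jsonld(store.entity_id))   # ...shows up on the child
```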

Automating the Entity Lifecycle in Four Steps

Moving from manual SEO to a continuous, automated operating system requires a structured four-stage process.

1. Measure and Baseline with a GEO (Generative Engine Optimization) Audit

Begin with a deep audit of your brand’s presence, accuracy, and sentiment across AI platforms like ChatGPT and Gemini. Identify citation gaps: places where your brand is missing from trusted sources or is mentioned without a link to your authoritative identity. These gaps create the entity tension that harms AI visibility. Traditional metrics like rankings are becoming obsolete; instead, establish baselines for a visibility score, accuracy score, citation share, and competitive gaps. The audit should also detect identity fragmentation and provide prioritized recommendations.
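The article does not define how these baseline scores are computed, so the following is only one plausible sketch. It assumes you have already collected AI answers for a fixed set of prompts and labeled each one by hand or with tooling. The `AuditResult` record, the metric definitions, and the sample data are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AuditResult:
    prompt: str
    platform: str                  # e.g. "ChatGPT" or "Gemini"
    brand_mentioned: bool
    facts_correct: bool            # only meaningful when brand_mentioned is True
    citations: List[str] = field(default_factory=list)  # domains cited in the answer


def baseline(results: List[AuditResult], brand_domain: str) -> dict:
    mentioned = [r for r in results if r.brand_mentioned]
    cited = [c for r in results for c in r.citations]
    return {
        # Share of audited prompts whose answer mentions the brand at all.
        "visibility": len(mentioned) / len(results) if results else 0.0,
        # Share of those mentions that state the brand's facts correctly.
        "accuracy": sum(r.facts_correct for r in mentioned) / len(mentioned) if mentioned else 0.0,
        # Share of all citations that point at the brand's own domain.
        "citation_share": cited.count(brand_domain) / len(cited) if cited else 0.0,
        # Mentioned but not linked to the authoritative domain: a citation gap.
        "citation_gaps": [r.prompt for r in mentioned if brand_domain not in r.citations],
    }


results = [
    AuditResult("best 2-person tent", "ChatGPT", True, True, ["example.com", "wikipedia.org"]),
    AuditResult("example corp returns", "Gemini", True, False, ["reviewsite.com"]),
    AuditResult("lightweight tents", "Gemini", False, False, ["competitor.com"]),
    AuditResult("trail tent 2p specs", "ChatGPT", True, True, ["example.com"]),
]
print(baseline(results, "example.com"))
```

Rerunning the same prompt set on a schedule turns these numbers into the baselines the audit step calls for.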

2. Enable Efficient Crawling and Discovery

AI cannot automate what it cannot see. Maximizing impact requires ensuring your content is easily discovered and indexed.

- Maintain infrastructure health by not blocking AI crawlers, keeping key content accessible without JavaScript, and optimizing page speed.

- Enable dynamic updates so your schema layer can reflect information changes, like a flash sale, in real time across all properties.

- Implement progressive indexing with protocols like IndexNow, which notify participating search engines as soon as your registry updates a URL.

- Establish continuous monitoring to detect missing schema or broken entity references before they impact visibility.
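Two of these checks lend themselves to a short sketch using only the Python standard library: testing whether robots.txt blocks known AI crawler user agents, and building an IndexNow submission for changed URLs. The crawler list is a partial sample, and the host, key, and URLs are hypothetical placeholders. The request is built but not sent; `urllib.request.urlopen(req)` would submit it.

```python
import json
import urllib.request
from urllib.robotparser import RobotFileParser

# A partial sample of AI crawler user-agent tokens; extend for your needs.
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot"]


def blocked_ai_crawlers(robots_txt: str, url: str) -> list:
    """Return the AI crawlers that this robots.txt would stop from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [ua for ua in AI_CRAWLERS if not parser.can_fetch(ua, url)]


def indexnow_request(host: str, key: str, urls: list) -> urllib.request.Request:
    """Build (but do not send) an IndexNow POST announcing URLs that just changed."""
    body = {
        "host": host,
        "key": key,
        # The key file must be served at this location to prove site ownership.
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": urls,
    }
    return urllib.request.Request(
        "https://api.indexnow.org/indexnow",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


robots = "User-agent: GPTBot\nDisallow: /\n\nUser-agent: *\nAllow: /\n"
print(blocked_ai_crawlers(robots, "https://www.example.com/products/"))

req = indexnow_request(
    "www.example.com",
    "a1b2c3d4e5f6a7b8",  # hypothetical IndexNow key
    ["https://www.example.com/products/trail-tent-2p/"],
)
print(req.get_method(), req.full_url)
```

Wiring `indexnow_request` to the registry's update hook is what turns a registry change into an immediate notification rather than waiting for the next crawl.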

3. Choose the Right Schema Deployment Model

There is a key distinction between pure schema, which marks up only visible page content, and entity schema, which can be aspirational and tag related entities not explicitly mentioned on the page. The right approach is truth-centered: tagging a hotel’s state clarifies ambiguity, but over-aspirational tagging can bloat pages and attract irrelevant traffic. Deployment strategies vary:

- Client-side rendering injects schema via JavaScript, which suits quick, large-scale deployment, though crawlers that do not execute JavaScript will not see it.

- Server-side rendering embeds JSON-LD directly in the HTML response, which suits enterprise environments that need centralized management.

- Strategic deployment should also link internal entities to external authorities like Wikidata (typically via the sameAs property) for global disambiguation.
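For the server-side model, a minimal sketch is a helper that serializes a node into a `<script type="application/ld+json">` tag at render time, including a sameAs link to an external authority. The Wikidata item URL below is a placeholder, not a real identifier, and `jsonld_script_tag` is a hypothetical helper name. Escaping `<` guards against a field value that contains `</script>` closing the tag early.

```python
import json


def jsonld_script_tag(node: dict) -> str:
    """Serialize a JSON-LD node into a <script> tag for server-side embedding."""
    payload = json.dumps(node, ensure_ascii=False, separators=(",", ":"))
    # Escape "<" as \u003c so a value containing "</script>" cannot end the tag;
    # JSON parsers decode it back to "<".
    payload = payload.replace("<", "\\u003c")
    return f'<script type="application/ld+json">{payload}</script>'


org = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "@id": "https://www.example.com/#organization",
    "name": "Example Corp",
    # Placeholder Wikidata item; use your organization's real entry.
    "sameAs": ["https://www.wikidata.org/wiki/Q00000000"],
}
print(jsonld_script_tag(org))
```

A client-side deployment would inject the same tag with JavaScript after page load; the payload is identical, only the delivery differs.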

4. Enable Agentic Action from Discovery to Transaction

True operability means facilitating direct transactions. Enrich your entities with action vocabularies that define what an agent can do.

- Implement potentialAction markup, using schema.org Action types such as OrderAction, ReserveAction, or ScheduleAction, to define machine-callable triggers for ordering, reserving, or scheduling.

- Include attribute personalization, such as verified accessibility features, so AI can filter for them.

- Provide protocol definitions within your schema for pricing, availability, and fulfillment logic.

This transforms your brand into a machine-callable service, providing the API for autonomous agents to transact without human intermediation.
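The sketch below combines all three practices on one hypothetical hotel entity: accessibility attributes as amenityFeature entries, an Offer with price and availability, and a ReserveAction whose EntryPoint tells an agent where and how to submit a booking. The hotel, its reservation endpoint, and the template parameters are invented for illustration. The `expand` helper is a minimal stand-in for RFC 6570 level-1 URI template expansion, approximating what an agent might do with urlTemplate.

```python
import re
from urllib.parse import quote

HOTEL_ID = "https://www.example.com/hotels/riverside/#hotel"  # hypothetical @id

hotel = {
    "@context": "https://schema.org",
    "@type": "Hotel",
    "@id": HOTEL_ID,
    "name": "Riverside Hotel",
    # Verified attributes an agent can filter on.
    "amenityFeature": [
        {"@type": "LocationFeatureSpecification", "name": "Wheelchair-accessible entrance", "value": True},
        {"@type": "LocationFeatureSpecification", "name": "Roll-in shower", "value": True},
    ],
    # Pricing and availability an agent can quote without visiting the site.
    "makesOffer": {
        "@type": "Offer",
        "name": "Standard double room, per night",
        "price": "149.00",
        "priceCurrency": "USD",
        "availability": "https://schema.org/InStock",
    },
    # The machine-callable trigger: where and how to submit a reservation.
    "potentialAction": {
        "@type": "ReserveAction",
        "target": {
            "@type": "EntryPoint",
            # Hypothetical endpoint.
            "urlTemplate": "https://www.example.com/api/reserve?checkin={checkin}&nights={nights}",
            "httpMethod": "POST",
            "contentType": "application/json",
        },
        "result": {"@type": "LodgingReservation", "name": "Riverside Hotel reservation"},
    },
}


def expand(url_template: str, **params) -> str:
    """Minimal level-1 URI template expansion ({var} only), percent-encoding values."""
    return re.sub(r"\{(\w+)\}", lambda m: quote(str(params[m.group(1)]), safe=""), url_template)


target = hotel["potentialAction"]["target"]
print(target["httpMethod"], expand(target["urlTemplate"], checkin="2025-07-01", nights=2))
```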

Sustaining Visibility Through Monitoring and Reporting

Deployment is only the beginning. Ongoing monitoring ensures your entity graph remains accurate. Automation should facilitate regular review of:

- Schema implemented versus schema pending approval, with reasons for delays.

- Schema drift and entity opportunities, identifying new pages that need markup and existing pages that need richer entity coverage.

- Prioritized recommendations based on business impact.

- Overall schema health, tracking which types generate rich results and where additional markup could help.
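A piece of this review can be automated with a simple health check over the JSON-LD extracted from each page. The sketch below counts schema types, lists pages with no markup, and flags broken entity references (an {"@id": ...} reference that no page defines). It assumes extraction has already happened; the input shape and sample pages are hypothetical, and only top-level nodes are counted as definitions.

```python
from collections import Counter
from typing import Dict, List


def find_references(value, found: List[str]) -> List[str]:
    """Collect every bare {"@id": ...} reference nested anywhere inside a node."""
    if isinstance(value, dict):
        if set(value) == {"@id"}:
            found.append(value["@id"])
        for v in value.values():
            find_references(v, found)
    elif isinstance(value, list):
        for v in value:
            find_references(v, found)
    return found


def node_types(node: dict) -> List[str]:
    t = node.get("@type", "unknown")
    return t if isinstance(t, list) else [t]  # @type may be a string or a list


def schema_health(pages: Dict[str, List[dict]]) -> dict:
    """Summarize schema coverage for pages keyed by URL."""
    nodes = [n for page_nodes in pages.values() for n in page_nodes]
    defined = {n["@id"] for n in nodes if "@id" in n}
    return {
        "types": dict(Counter(t for n in nodes for t in node_types(n))),
        "broken_refs": sorted({r for n in nodes for r in find_references(n, []) if r not in defined}),
        "pages_without_schema": [url for url, page_nodes in pages.items() if not page_nodes],
    }


ORG = "https://www.example.com/#organization"
BRAND = "https://www.example.com/brands/acme-outdoor/#brand"
pages = {
    "https://www.example.com/": [{"@type": "Organization", "@id": ORG}],
    "https://www.example.com/products/trail-tent-2p/": [
        {"@type": "Product", "@id": "https://www.example.com/products/trail-tent-2p/#product",
         "brand": {"@id": BRAND}, "manufacturer": {"@id": ORG}},
    ],
    "https://www.example.com/about/": [],
}
print(schema_health(pages))
```

Here the product page references a brand @id that no page defines, which is exactly the kind of break worth catching before it reaches an AI system.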

The Self-Reinforcing AI Visibility Flywheel

Entity optimization is not a one-time project. It must be embedded across the entire customer journey through a seamless automation layer. This layer guides content toward key entities, personalizes schema for agentic experiences, enables transactions, and sustains visibility. The result is a self-reinforcing authority engine: a flywheel in which strong entity recognition begets more accurate AI citations, which in turn strengthen authority.

The web page is the human-readable surface, but the automated entity graph is the machine-readable backbone. Building and maintaining this strong entity layer is now a foundational requirement for AI visibility. Organizations that fail to automate entity management on a unified platform risk data decay and, ultimately, invisibility in the systems that will power the next generation of commerce.

(Source: MarTech)