Gemini App Control Launches: Order Lunch on Your Galaxy S26

Summary

– Google’s Gemini AI now features agentic task automation, allowing it to perform actions within apps and websites on a user’s behalf.

– This capability enables Gemini to autonomously handle complex, multi-step tasks like planning a trip, which involves searching, comparing, and booking.

– The AI can manage the entire workflow, including navigating between different services and making decisions based on user preferences.

– This represents a shift from AI as a conversational tool to an active assistant that executes tasks directly in the digital environment.

– The feature is designed to save users significant time by automating tedious processes across various applications.

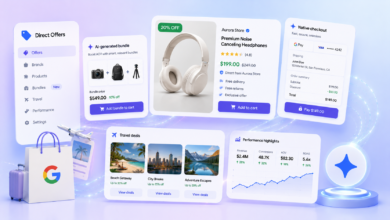

Google’s Gemini assistant is gaining app control capabilities. The feature, often described as agentic task automation, lets the AI interact with and operate other applications on your device. Tell your phone to order your regular lunch or book a ride home, and Gemini handles the entire process within the relevant apps. The assistant shifts from one that provides information to one that executes complex, multi-step tasks on your behalf.

The core of this innovation is Gemini’s ability to understand natural language requests and then navigate through app interfaces just as a human would. It can tap buttons, enter text, and scroll through menus. For a user, this means issuing a command like “Order my usual lunch from DoorDash” could trigger Gemini to open the DoorDash app, locate your saved order, confirm the restaurant, and proceed to checkout, all without you touching the screen. This functionality aims to streamline everyday digital chores, saving time and reducing friction.
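As a rough illustration of the flow described above, the lunch order can be modeled as an ordered list of interface actions that an executor replays against a screen. This is a minimal sketch, not Gemini's actual implementation or API: the `UIAction` type, the `FakeScreen` class, the button labels, and both function names are hypothetical, and the "screen" here only records what it was asked to do.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class UIAction:
    """One step an agent might take in an app (hypothetical model)."""
    kind: str    # e.g. "open_app", "tap", "enter_text", "scroll"
    target: str  # app name or on-screen label; labels here are invented


@dataclass
class FakeScreen:
    """Stand-in for a real device: it only records the actions it receives."""
    log: List[str] = field(default_factory=list)

    def perform(self, action: UIAction) -> None:
        self.log.append(f"{action.kind}:{action.target}")


def plan_usual_lunch_order(app: str) -> List[UIAction]:
    """Turn the request into the steps the article lists:
    open the app, find the saved order, confirm the restaurant, check out."""
    return [
        UIAction("open_app", app),
        UIAction("tap", "Saved orders"),
        UIAction("tap", "Usual lunch"),
        UIAction("tap", "Confirm restaurant"),
        UIAction("tap", "Checkout"),
    ]


def run_plan(plan: List[UIAction], screen: FakeScreen) -> List[str]:
    """Replay each planned action in order and return the recorded log."""
    for action in plan:
        screen.perform(action)
    return screen.log


if __name__ == "__main__":
    for entry in run_plan(plan_usual_lunch_order("DoorDash"), FakeScreen()):
        print(entry)
```

Separating the plan from the executor is only a design choice for this sketch: it makes the sequence of steps easy to inspect before anything is tapped, which is relevant to the reliability concerns raised later in the article.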

This development is particularly relevant for Samsung Galaxy users, as the integration is launching alongside new Galaxy devices such as the Galaxy S26. The feature promises to make high-end smartphones more capable by turning them into proactive personal assistants. Potential use cases extend well beyond food delivery: you could ask Gemini to post a drafted social media update, send a specific file to a colleague via a messaging app, or control smart home devices through their respective applications.

The technology relies on advanced language understanding combined with on-device processing for certain actions to maintain speed and privacy. Google is positioning this not just as a convenience feature but as a step toward more intuitive and helpful ambient computing. The assistant learns from context and user preferences to better predict needs and execute tasks accurately. While the initial rollout focuses on popular apps and services, the ecosystem is expected to expand as developers optimize their applications for this new form of AI interaction.

For the average consumer, the promise is a smartphone that requires less manual navigation and more natural conversation. Instead of digging through menus and remembering passwords, you delegate the work to your AI. Of course, widespread adoption will depend on the reliability of the automation and user comfort with granting such permissions. If successful, this could redefine how we interact with our most personal devices, making them truly intelligent partners in managing daily life.

(Source: 9to5Google)