AI Platforms: A New Backdoor for Malware

Summary

– AI assistants like Grok and Microsoft Copilot can be abused to secretly relay commands and data between attackers and compromised machines.

– Attackers can use the AI’s web interface to fetch malicious URLs and receive instructions, creating a stealthy, bidirectional communication channel.

– Because the method relies on trusted AI services and needs no API keys or accounts, it can slip past common network blocks and is hard to trace or shut down.

– Researchers demonstrated the attack with a C++ program that interacts with the AI through a WebView2 component; if the component is missing from a target system, attackers could bundle it with the malware.

– AI platforms have safeguards, but attackers can bypass them by encrypting malicious data. Microsoft recommends defense-in-depth security practices to mitigate such risks.

Cybersecurity experts have uncovered a novel method for malware to communicate, using popular AI assistants as covert channels. Researchers at Check Point demonstrated that threat actors can exploit platforms like Grok and Microsoft Copilot to relay command-and-control (C2) traffic, effectively turning these trusted services into stealthy proxies. This technique allows malicious software to receive instructions and exfiltrate stolen data without directly connecting to a server controlled by the attacker, potentially bypassing traditional security measures.

The core of this attack involves manipulating the AI’s web interface. Instead of a compromised machine phoning home to an obvious C2 server, the malware is programmed to interact with an AI assistant. It instructs the AI to fetch content from an attacker-controlled webpage. The AI then summarizes or extracts the hidden commands from that webpage in its chat response, which the malware parses and executes. This creates a bidirectional communication channel that leverages the AI’s legitimacy, as traffic to and from these major platforms is often trusted and less likely to be blocked by network security tools.

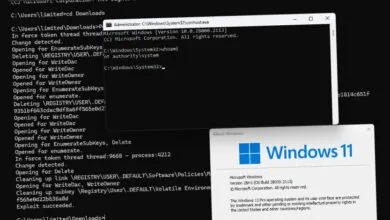

A critical aspect of this proof-of-concept is its use of the WebView2 component in Windows, which allows applications to display web content. The malware can use this to open a window pointing directly to an AI chat interface. Even if WebView2 is not present on a target system, attackers could bundle it within the malware itself. This method requires no API keys or user accounts for the AI services when using their public web interfaces, which significantly complicates defensive efforts. There is no straightforward API key to revoke or specific account to suspend, making the malicious channel more resilient.

To evade the AI platforms’ own content safeguards, attackers can simply encrypt their commands. By converting instructions into high-entropy, seemingly random data blobs, they can bypass filters designed to block obviously malicious text. The AI assistant fetches the encrypted content from the attacker’s page and relays it in its response, unwittingly delivering the instructions to the waiting malware, which must then decrypt them itself.
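To make "high-entropy" concrete, here is a minimal Python sketch that compares the Shannon entropy of ordinary English text with that of random bytes, which stand in for encrypted data. It is an illustration only and is not taken from Check Point's research; the function name `shannon_entropy` and the sample strings are assumptions chosen for the example.

```python
import math
import os
from collections import Counter


def shannon_entropy(data: bytes) -> float:
    """Return the Shannon entropy of data in bits per byte (0.0 to 8.0)."""
    if not data:
        return 0.0
    counts = Counter(data)
    total = len(data)
    # Sum -p * log2(p) over the observed byte values.
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


# Readable text reuses a small set of characters, so its entropy is low.
plain_text = b"please summarize the contents of this page " * 20

# Random bytes are a stand-in for ciphertext (an assumption for this sketch).
random_blob = os.urandom(len(plain_text))

print(f"plain text:  {shannon_entropy(plain_text):.2f} bits/byte")
print(f"random blob: {shannon_entropy(random_blob):.2f} bits/byte")
```

Readable text scores well below the 8 bits per byte maximum, while well-encrypted data sits close to it. That gap is why a filter looking for recognizable malicious wording has nothing to match against. Note that ciphertext embedded in a web page is usually text-encoded (for example with Base64), which lowers the per-character figure but still leaves no readable words.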

Check Point’s research suggests this is just one potential misuse of AI services. Beyond acting as a communication relay, these platforms could be abused for operational tasks, such as analyzing a compromised system to determine its value or planning next-stage attacks while avoiding detection. The demonstration highlights a growing attack surface where AI’s capabilities are repurposed for malicious ends.

When approached for comment, Microsoft acknowledged the responsible disclosure from Check Point. A company spokesperson noted that attackers may attempt to use any available service on a compromised device, including AI-based ones. The spokesperson emphasized the importance of defense-in-depth security practices to prevent initial infections and mitigate damage from any subsequent malicious activity. The finding underscores the continuous evolution of cyber threats and the need for security strategies to adapt as emerging technologies are abused in new ways.

(Source: Bleeping Computer)