Google’s Gemini App Can Now Detect Videos Made With Google AI

Summary

– Google has expanded its AI verification feature to videos, letting users ask Gemini whether a video was created or edited with Google’s own AI models.

– The tool scans for Google’s proprietary SynthID watermark and reports the specific points in the video or audio where it appears, rather than giving only a yes/no answer.

– This video verification follows a similar capability launched for images in November, and both are currently limited to content made or edited with Google AI.

– Google says its SynthID watermark is “imperceptible,” but how well it resists removal, and whether other platforms will recognize it, remain untested.

– The verification feature supports videos up to 100 MB and 90 seconds and is available wherever the Gemini app is offered.

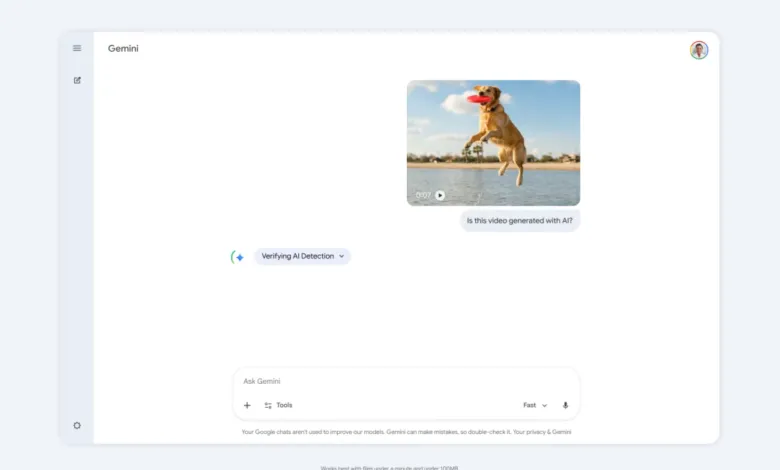

Google has expanded its Gemini app with a feature that lets users check whether a video was created or altered using Google’s own artificial intelligence models. It is another step in the broader effort to make AI-generated media easier to identify. A user uploads a video and asks, “Was this generated using Google AI?”, and Gemini then analyzes the content for an embedded watermark.

The system scans both the visual frames and the audio track for a proprietary digital watermark called SynthID. Google emphasizes that the response goes beyond a basic confirmation: it pinpoints the moments in the video where the watermark is detected. The feature builds on a similar capability launched for images last November, which is likewise limited to content produced with Google’s AI tools.

Reliably marking AI content is a well-known challenge. Other systems have proven vulnerable; for example, watermarks have been stripped from videos created with OpenAI’s Sora model. Google describes SynthID as “imperceptible” to human senses, but how well it withstands removal attempts, and whether other platforms will recognize it, remain open questions. Google’s Nano Banana image generator in Gemini does attach C2PA metadata, but without a unified, industry-wide standard for labeling AI-generated material, deceptive content can still spread unchecked across social networks.

For now, Gemini’s verification tool accepts video files up to 100 MB in size and 90 seconds in length. The feature is available in all regions and languages where the Gemini app itself is officially offered.

(Source: The Verge)