Google’s TurboQuant AI Cuts LLM Memory Use by 6x

Summary

– Google Research has developed TurboQuant, a compression algorithm that reduces the memory requirements of large language models while improving speed and preserving accuracy.

– The algorithm specifically targets the key-value cache, a memory-intensive component that stores previously computed attention data so the model does not have to recompute it for every new token.

– TurboQuant addresses the performance bottleneck caused by high-dimensional vectors, which are essential for representing complex data but consume significant memory.

– Unlike standard quantization techniques that often degrade output quality, TurboQuant’s early results show major performance gains and memory reduction without this loss.

– The process uses a subsystem called PolarQuant, which converts vectors into polar coordinates (radius and direction) to enable high-quality compression.

The immense computational demands of large language models are a primary driver behind today’s high memory prices. A new compression technique from Google Research, called TurboQuant, tackles this challenge by shrinking an LLM’s memory footprint while also speeding up the model and preserving output quality. The technique focuses on the model’s key-value cache, a critical component that works like a reference sheet: it holds the results already computed for earlier tokens so the model does not have to recalculate them at every step.
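To give a sense of scale, the sketch below estimates how large a key-value cache can get. It assumes the common layout in which the cache holds one key vector and one value vector per token, per attention head, per layer. The function name `kv_cache_bytes` and the model shape are hypothetical, chosen only for illustration; they do not describe any specific Google model. The final line simply applies the sixfold reduction quoted in the headline.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, bits_per_value):
    """Estimate KV-cache size in bytes.

    Assumes one key vector and one value vector of length head_dim
    is stored per token, per head, per layer.
    """
    values_stored = 2 * num_layers * num_kv_heads * head_dim * seq_len  # 2 = keys + values
    return values_stored * bits_per_value // 8


# Hypothetical model shape, for illustration only.
baseline = kv_cache_bytes(
    num_layers=32,
    num_kv_heads=8,
    head_dim=128,
    seq_len=32_768,
    bits_per_value=16,
)
print(f"16-bit cache:     {baseline / 2**30:.2f} GiB")

# Applying the sixfold reduction reported for TurboQuant to this toy figure.
print(f"Sixfold smaller:  {baseline / 6 / 2**30:.2f} GiB")
```

Because the cache grows linearly with `seq_len`, long conversations and long documents are where the savings matter most.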

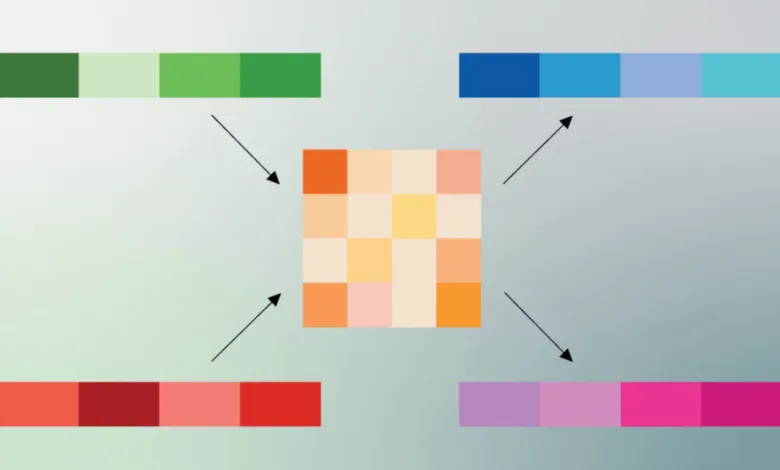

These models rely on mathematical representations known as vectors to process language. Vectors map the semantic meaning of words and phrases; when two vectors are similar, the words or phrases they represent are conceptually related. However, these high-dimensional vectors, each with hundreds or thousands of numeric components, consume substantial memory, especially within the key-value cache, creating a major performance bottleneck. A common solution is quantization, which stores each number at lower precision to save space and speed up processing. The trade-off has traditionally been a noticeable drop in the accuracy and quality of the model’s responses.
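The trade-off described above can be seen in a minimal sketch of plain uniform quantization, the textbook baseline rather than TurboQuant itself. Each floating-point component is mapped to one of `2**bits` integer levels and then mapped back, and the round trip introduces a small error. The helper names, the sample vector, and the use of cosine similarity as the measure of "similar" are all assumptions made for illustration.

```python
import math


def quantize(vec, bits):
    """Uniform quantization: map each float to one of 2**bits integer codes."""
    lo, hi = min(vec), max(vec)
    levels = 2**bits - 1
    scale = (hi - lo) / levels if hi > lo else 1.0
    codes = [round((x - lo) / scale) for x in vec]
    return codes, lo, scale


def dequantize(codes, lo, scale):
    """Reconstruct approximate floats from the integer codes."""
    return [lo + c * scale for c in codes]


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Made-up 8-dimensional vector; real models use hundreds or thousands of dimensions.
vec = [0.12, -0.87, 0.45, 0.99, -0.33, 0.05, -0.61, 0.78]

codes, lo, scale = quantize(vec, bits=4)
approx = dequantize(codes, lo, scale)
max_error = max(abs(x - y) for x, y in zip(vec, approx))

print("4-bit codes:      ", codes)
print("max abs error:    ", max_error)
print("cosine similarity:", cosine_similarity(vec, approx))
```

Lowering `bits` shrinks the storage further but widens the gap between `vec` and `approx`, which is the quality loss that standard quantization is known for.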

Google’s early testing indicates TurboQuant avoids that compromise. In certain benchmarks, the method delivered an eightfold increase in speed alongside a sixfold reduction in memory use without degrading output. The secret lies in a novel, two-stage compression process. The first phase employs a subsystem named PolarQuant. Instead of encoding vectors with standard Cartesian (XYZ) coordinates, this technique converts them into a polar coordinate system. On this circular grid, each vector is distilled down to just two core pieces of information: a radius, representing the strength of the core data, and a direction, which captures the data’s essential meaning.
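The radius-and-direction idea can be illustrated with the ordinary two-dimensional polar conversion. This is only a sketch of the coordinate change described above, not PolarQuant's actual algorithm, whose details are not given here. The function names and the step of snapping the angle to a fixed number of evenly spaced directions are assumptions added to show how a direction could be stored in a few bits.

```python
import math


def to_polar(x, y):
    """Convert Cartesian (x, y) to (radius, angle in radians)."""
    radius = math.hypot(x, y)
    angle = math.atan2(y, x)
    return radius, angle


def from_polar(radius, angle):
    """Convert (radius, angle) back to Cartesian (x, y)."""
    return radius * math.cos(angle), radius * math.sin(angle)


def quantize_angle(angle, bits):
    """Snap an angle to the nearest of 2**bits evenly spaced directions.

    Returns the integer code and the snapped angle in [0, 2*pi).
    """
    steps = 2**bits
    step = 2 * math.pi / steps
    code = round(angle / step) % steps
    return code, code * step


radius, angle = to_polar(3.0, 4.0)
code, snapped = quantize_angle(angle, bits=4)
x_approx, y_approx = from_polar(radius, snapped)

print("radius:", radius)
print("angle code (4 bits):", code)
print("reconstructed point:", (x_approx, y_approx))
```

In this toy version the radius is kept exactly and only the direction is coarsened, so the reconstructed point keeps its original length while its angle shifts slightly.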

(Source: Ars Technica)