AI’s Code Rewriting: Does It Change the License, Too?

Summary

– AI coding tools like Claude Code are creating new legal and ethical questions around the traditional “clean room” reverse engineering process for software.

– A developer used Claude Code to completely rewrite the popular ‘chardet’ Python library, releasing it under a more permissive MIT license.

– The original ‘chardet’ library, created by Mark Pilgrim in 2006, was licensed under the LGPL, a copyleft license that requires modified versions of the library to be distributed under the same terms.

– The developer stated the AI-assisted rewrite was done to fix licensing, speed, and accuracy issues, achieving a 48x performance boost in roughly five days.

– A GitHub user identifying as the original creator, Mark Pilgrim, argues the new version is an illegitimate relicensing and must retain the original LGPL license as a modification of his work.

The intersection of artificial intelligence and software licensing is creating complex new questions for developers and legal experts. A recent update to a widely used Python library has brought these issues into sharp focus, highlighting the potential conflicts when AI assists in rewriting code originally governed by specific open-source terms. This situation forces a re-examination of how traditional concepts like clean-room design and derivative works apply in an age of automated code generation.

The controversy centers on chardet, a tool for detecting the character encoding of text. Originally authored by Mark Pilgrim in 2006, the library was released under the LGPL (GNU Lesser General Public License). This license is a weak form of copyleft: modified versions of the library, and other works derived from its code, must be distributed under the same LGPL terms, although programs that merely link to the library need not be. The requirement is intended to preserve software freedom.
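For readers unfamiliar with the problem the library addresses, the sketch below shows a deliberately naive form of encoding detection using only the Python standard library. It is an illustration under stated assumptions, not chardet's algorithm or API: the function name `guess_encoding` and the candidate list are hypothetical, and the approach (try each encoding in order and keep the first that decodes cleanly) is far cruder than what a real detector does.

```python
def guess_encoding(data: bytes, candidates=("ascii", "utf-8", "latin-1")):
    """Return the first candidate encoding that decodes `data` without error.

    Hypothetical helper for illustration only; it is not part of chardet.
    Order matters: latin-1 accepts every byte sequence, so it only makes
    sense as a last-resort fallback.
    """
    for name in candidates:
        try:
            data.decode(name)
        except UnicodeDecodeError:
            continue  # these bytes are not valid in this encoding; try the next
        return name
    return None  # no candidate could decode the bytes


print(guess_encoding(b"hello"))                 # ascii
print(guess_encoding("café".encode("utf-8")))   # utf-8
print(guess_encoding(b"caf\xe9"))               # latin-1 (fallback)
```

A first-match strategy like this cannot tell apart encodings that both decode the same bytes without error, which is one reason dedicated detection libraries exist.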

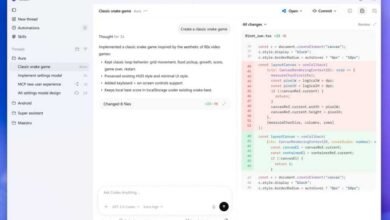

Maintainer Dan Blanchard recently unveiled version 7.0, describing it as a complete, ground-up rewrite created with the assistance of Claude Code, an AI programming tool. The new library, which Blanchard says is roughly 48 times faster and more accurate, was released under the much more permissive MIT License. This license imposes far fewer conditions, allowing the code to be incorporated into proprietary, closed-source software as long as the copyright and license notice are retained. Blanchard stated that this overhaul, achieved in roughly five days with AI help, addressed long-standing barriers to the library’s adoption, including performance and licensing concerns that previously prevented its inclusion in the Python standard library.

However, the relicensing sparked immediate debate. A GitHub user identifying as the original author, Mark Pilgrim, contested the move. The argument hinges on whether the AI-assisted rewrite constitutes a truly independent, new work or if it remains a derivative work of the original LGPL-licensed code. Pilgrim’s position is that the new version, being a functional modification and improvement of his original creation, does not escape the licensing obligations of its source. If it is deemed a derivative, the MIT license would be invalid for this distribution, and the LGPL’s terms would still apply.

This case underscores a significant gray area. Historically, “clean room” reverse engineering, where one team documents functionality and a separate, isolated team writes new code, has been a legally accepted method to create compatible software without infringing copyright. The question now is whether using an AI model trained on existing code, potentially including the original chardet, to produce a faster or more efficient version qualifies as a similar, independent process, or whether the AI’s output is inextricably linked to its training data. The outcome could set an important precedent for how open-source licenses are interpreted and enforced in the era of AI-assisted development.

(Source: Ars Technica)