AI Physics Models Cut Engineering Design Time

Summary

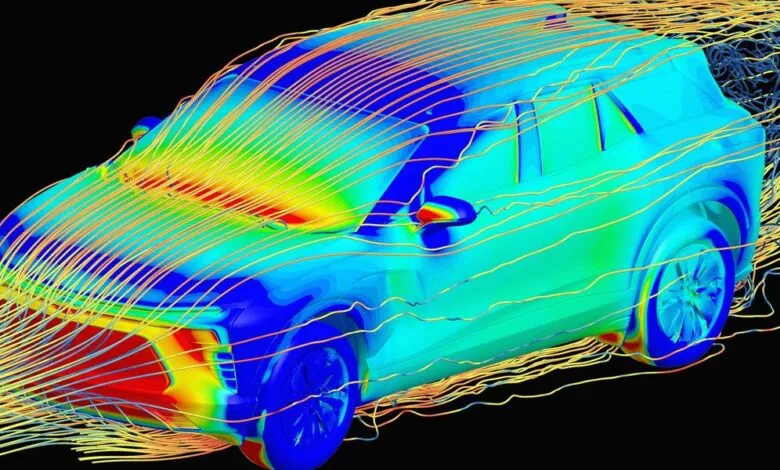

– Large physics models are AI tools that are beginning to augment or replace traditional physics simulations in industries like automotive and aerospace for design tasks.

– Companies like General Motors use these models to drastically speed up workflows, such as predicting a car’s aerodynamic drag coefficient in minutes instead of weeks.

– The accuracy of these AI models at the design stage is sufficient for exploration, with final validation still relying on physical tests like wind tunnels before production.

– Training these models varies by use case, but scaling laws suggest they improve and become more generalizable as they grow, similar to large language models.

– Experts debate whether AI will fully replace simulations, but agree it will augment engineers by automating manual tasks and enabling exploration of more design options.

The transformation of engineering design is accelerating. Just as large language models have reshaped software development, a new class of AI known as large physics models is now revolutionizing how physical products are conceived and refined. These advanced tools are beginning to augment, and in some cases replace, traditional physics simulation workflows, delivering dramatic reductions in development time across sectors like automotive, aerospace, and semiconductor engineering.

For decades, computer simulations drastically cut the need for costly physical prototypes. According to Thomas von Tschammer of Neural Concept, this process is now being accelerated again. “AI is drastically reducing the need for simulation, the same way simulation reduced the need for physical prototypes,” he explains. This shift was a prominent theme at this year’s Nvidia GTC conference, where companies like Jaguar Land Rover and PhysicsX showcased their adoption of physics-based AI to streamline complex design processes.

Consider the traditional workflow at an automaker like General Motors. A designer would create a 3D model, which then spent roughly two weeks with aerodynamics specialists running simulations to calculate a critical coefficient of drag. Now, GM uses an in-house large physics model trained on historical simulation data. This AI can analyze a 3D model and return a drag coefficient in minutes instead of weeks. “We have experts… who can sit together and iterate instantly,” says Rene Strauss, GM’s director of virtual integration engineering. The speed gain is monumental; running AI inference can be 10,000 to a million times faster than a full simulation, according to PhysicsX CEO Jacomo Corbo.
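To put Corbo's range in perspective, the short Python sketch below converts a speedup factor into an implied inference time. The 10-hour simulation runtime is a hypothetical baseline chosen purely for illustration; the article gives no per-run compute time, and GM's two-week figure describes the turnaround of the whole workflow rather than a single solver run.

```python
def inference_time_seconds(simulation_hours: float, speedup: float) -> float:
    """Return the inference time in seconds implied by a given speedup factor."""
    return simulation_hours * 3600.0 / speedup


# Hypothetical baseline: one simulation run taking 10 hours of compute.
# This number is an assumption for illustration, not a figure from GM or PhysicsX.
SIMULATION_HOURS = 10.0

# The two ends of the range quoted in the article: 10,000x and 1,000,000x.
low_end = inference_time_seconds(SIMULATION_HOURS, 10_000)
high_end = inference_time_seconds(SIMULATION_HOURS, 1_000_000)

print(f"At 10,000x faster:    {low_end:.3f} s per evaluation")
print(f"At 1,000,000x faster: {high_end:.3f} s per evaluation")
```

Under that assumed baseline, each design evaluation drops from hours to a few seconds or less, which is what makes the side-by-side iteration Strauss describes plausible.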

A natural question arises regarding AI model accuracy. For early-stage conceptual design, absolute precision is less critical. GM’s Strauss notes that final certification still relies on physical wind tunnel testing. However, Corbo argues that with proper training data incorporating real-world measurements, AI models can potentially surpass the accuracy of the simulations they learn from. The key advantage is ease of correction; tuning an AI model with experimental data is often more straightforward than debugging a complex physics simulation.

This immense time saving unlocks a fundamental benefit: exploratory design. Engineers can rapidly evaluate a vastly broader range of concepts and configurations before committing to a final direction.

The approach to training these models varies. Architectures may include transformers, geometric deep learning networks, or neural operators. Most current implementations are specialized, trained on a company’s proprietary simulation data for specific use cases, like different vehicle types. Yet the field is progressing toward more versatile foundational physics models. Corbo’s team is observing scaling laws similar to those in LLMs, where increasing model size and data leads to non-linear performance gains and greater generalizability.
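Scaling laws for LLMs are usually expressed as power laws, in which error falls as a fixed power of model or dataset size. The article does not say what form PhysicsX observes, so the Python sketch below simply assumes a power law and shows how its exponent could be estimated with a straight-line fit in log-log space. The data points are synthetic, generated from a made-up curve, and the function name `fit_power_law` is hypothetical.

```python
import math


def fit_power_law(sizes, errors):
    """Least-squares fit of log(error) = log(a) + b * log(size); returns (a, b)."""
    xs = [math.log(s) for s in sizes]
    ys = [math.log(e) for e in errors]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    b = sxy / sxx                        # slope in log-log space = exponent
    a = math.exp(mean_y - b * mean_x)    # intercept in log-log space = prefactor
    return a, b


# Synthetic data only: errors generated from error = 2.0 * size ** -0.3.
# These are not measurements from any real physics model.
sizes = [1e6, 1e7, 1e8, 1e9]
errors = [2.0 * s ** -0.3 for s in sizes]

prefactor, exponent = fit_power_law(sizes, errors)
print(f"fitted prefactor = {prefactor:.3f}, fitted exponent = {exponent:.3f}")
```

With real measurements the points would scatter around the fitted line, and the exponent would indicate how much each tenfold increase in size reduces error.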

Industry collaboration aims to foster this growth. Initiatives like the partnership between PhysicsX and Nvidia focus on developing open standards for data formats and model architectures, making it easier for researchers and engineers to build upon existing work. “The thing that we’re collaborating on is being able to compose architectures from building blocks,” Corbo states.

Integrating these powerful tools into established workflows remains an iterative challenge. “With any innovation, it’s not a straight line,” GM’s Strauss acknowledges. The long-term role of traditional simulation is also debated. Some, like Neural Concept’s von Tschammer, see AI as a complement for the early, exploratory phases, leading to “smarter usage of simulation” later. Others, like PhysicsX’s Corbo, envision a more fundamental shift: “The whole idea is to take numerical simulation… out of the workflow, and to move that to inference.”

Despite differing visions for simulation’s future, the experts agree on the enduring role of the human engineer. These AI tools are viewed not as replacements, but as powerful enablers that automate manual, low-value tasks. “What we’re seeing is that actually, these tools are empowering the engineers to be much more efficient,” von Tschammer observes. By handling repetitive analysis, AI allows engineers to focus their expertise on higher-level design decisions and creative problem-solving, making their judgment more valuable than ever.

(Source: IEEE.org)