Instagram to notify parents of kids’ self-harm searches

Summary

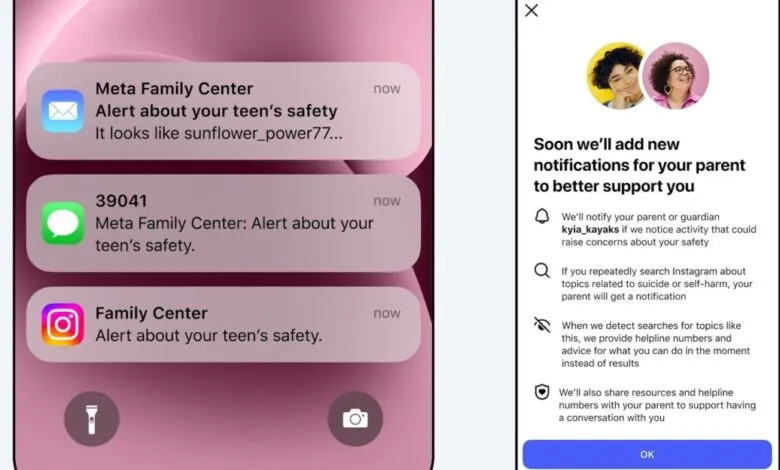

– Instagram will begin notifying parents when their teen repeatedly searches for terms related to suicide or self-harm within a short timeframe.

– The feature works only for families that have opted into Instagram’s parental supervision tools, and it begins rolling out next week in the US, UK, Australia, and Canada.

– The platform’s policy is to block such searches and direct teens to support resources and helplines instead.

– Parental alerts will be sent via email, text, or WhatsApp, alongside in-app notifications that include guidance on discussing these topics.

– Meta also announced that a similar alert system for its AI chatbots is planned for later this year.

Parents will soon receive notifications from Instagram if their teenager repeatedly searches for content related to self-harm or suicide. This new safety feature, launching next week in the United States, United Kingdom, Australia, and Canada, is designed to empower parents to step in when a young person’s online behavior may signal a need for support. The system requires both the parent and teen to have voluntarily enrolled in Instagram’s parental supervision tools, ensuring the alerts are sent only within families who have opted into the monitoring.

The platform emphasizes that most young users do not search for such harmful material. When they do, Instagram’s existing policy blocks the search results and instead directs the individual to professional resources and crisis helplines. The new alert system acts as an additional layer, informing a parent if their child makes multiple concerning searches within a short timeframe. The goal is to give a caring adult a discreet opportunity to start a conversation and connect their teen with help. Alerting only after repeated searches is meant to avoid sending so many notifications that they become background noise.

These parental alerts will be delivered by email, text message, or WhatsApp, depending on the contact information the parent has provided. Accompanying in-app notifications will offer parents optional guidance on how to approach sensitive discussions about mental health with their child. Meta has also announced plans to introduce a similar alert system for its AI chatbots later this year, extending these protective measures across more of its services. The company expects to expand the Instagram feature to additional regions before the end of the year.

(Source: The Verge)