Google’s AI Can Now Mimic Phone Photos Perfectly

Summary

– Google’s Nano Banana Pro AI image generator creates highly realistic images that convincingly mimic photos taken with a smartphone camera.

– The realism is achieved through details like bright, flat exposure, aggressive sharpening, sensor noise, and a generous depth of field, which are hallmarks of phone photography.

– The model can autonomously add contextually appropriate details, like period-specific clothing or real estate watermarks, without being explicitly prompted.

– A key improvement is its ability to connect to Google Search for text information, enhancing the accuracy and relevance of its outputs, though it does not use Google Photos for training.

– The author concludes that AI-generated images are now so convincing that it is unwise to trust unfamiliar photos online, as the telltale signs of AI are becoming extremely difficult to detect.

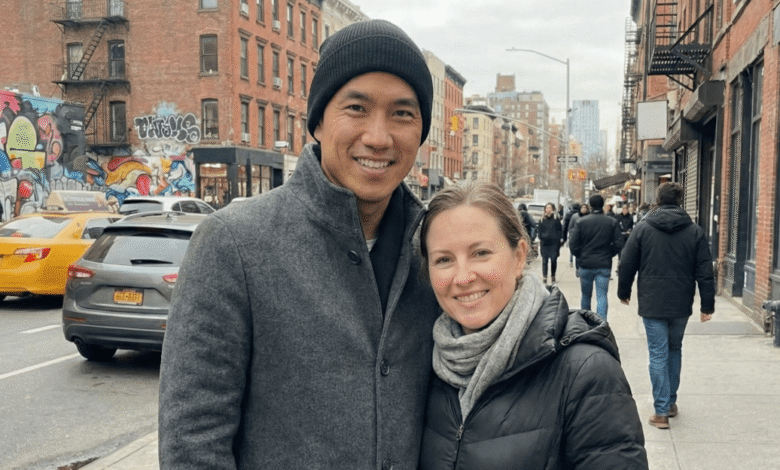

The sheer realism of the latest AI-generated images can feel downright uncanny, pushing the boundaries of what we perceive as authentic. Google’s Nano Banana Pro model marks a significant step forward by producing visuals that convincingly mimic the specific look and feel of photos taken with a smartphone. This isn’t about creating flawless, sterile images; it’s about replicating the subtle imperfections we instinctively associate with pictures from our phones: bright, flat exposure, a generous depth of field, and slightly over-sharpened details. The result is imagery that blends easily into the endless scroll of social media feeds, challenging our ability to distinguish the real from the artificially constructed.

Scrutinizing these images reveals minor quirks, like a slightly off-kilter streetlight or blocky building facades in the distance. Yet at a casual glance, they pass muster. Experts point to specific technical hallmarks that sell the illusion. The “aggressive image sharpening” common in smartphone processing makes elements pop, while visual noise, often absent from overly clean AI renders, mirrors the grain produced by a tiny mobile sensor. This attention to the gritty reality of everyday photography is what makes the output so deceptive.

A natural question arises: where does the AI learn this specific visual language? While one might suspect a massive dataset like Google Photos, the company states that it is not a training source for Nano Banana. Instead, a key advancement is the model’s ability to connect to Google Search for text-based information, allowing it to pull in real-world context. This capability seems to sharpen its understanding of prompts, enabling it to add plausible, unrequested details. For instance, when asked to create a fake real estate listing, it spontaneously included a period-appropriate copyright date and a watermark from a regional multiple listing service, complete with a slightly outdated version of its logo.

A product manager described this insertion of a specific, real-world watermark as a potential “hallucination,” a known phenomenon in which AI models generate plausible but incorrect details. In this context, however, adding such an authenticating mark feels less like an error and more like the model working with alarming precision. It demonstrates an evolving capacity to understand and replicate the peripheral cues that signal legitimacy, from period-appropriate clothing in historical scenes to branded microphones at press events.

The most disconcerting takeaway is that the traditional “tells” of AI imagery are rapidly vanishing. Gone are the obvious misspellings, alien lettering, and grotesque hands. In their place are coherent, context-aware details that sell the scene. This evolution signals a pivotal shift: the future in which we must critically question every digital image from an unknown source is no longer on the horizon; it has arrived. Because the technology can now imitate the very signatures of reality, our visual literacy must adapt accordingly, a necessary but undoubtedly dizzying prospect.

(Source: The Verge)