Trump’s AI Plan Puts Child Safety Responsibility on Parents

Summary

– The Trump administration proposed a federal legislative framework to establish a uniform national AI policy, which would preempt stricter state-level AI regulations.

– The framework prioritizes innovation and a “light-touch,” pro-growth regulatory approach, seeking to prevent a patchwork of state laws it views as burdensome to industry.

– It places significant responsibility for issues like child safety on parents rather than platforms, with calls for congressional action but few clear, enforceable requirements for companies.

– The proposal strongly focuses on preventing government censorship of AI content, framing it as protecting free speech and lawful political expression from partisan agendas.

– Critics argue the framework centralizes power in Washington, shields AI developers from liability, and undermines states’ ability to act as early regulators of emerging AI risks.

The Trump administration's proposed federal framework for artificial intelligence would establish a centralized national policy, aiming to override a growing patchwork of state regulations. The approach prioritizes innovation and a light-touch regulatory environment, arguing that conflicting state laws would stifle American competitiveness in the global AI race. The proposal shifts much of the responsibility for issues like child safety toward parents, while setting relatively soft expectations for technology platforms themselves.

Released on Friday, the legislative outline presents seven key objectives that champion scaling AI development under a uniform federal standard. It explicitly seeks to preempt stricter state-level rules, a move that follows an executive order from three months ago directing agencies to challenge “onerous” state AI laws. The framework describes AI development as an “inherently interstate” matter tied to national security, sharply limiting states’ roles to areas like general fraud statutes or zoning, rather than regulating the technology’s core development.

A central feature of the plan is a proposed liability shield for AI developers. It seeks to prevent states from penalizing companies for unlawful third-party conduct involving their models. Critics argue this structure, which lacks detailed enforcement mechanisms or independent oversight for novel harms, effectively centralizes all AI policymaking in Washington while closing off avenues for state-level experimentation and early risk mitigation.

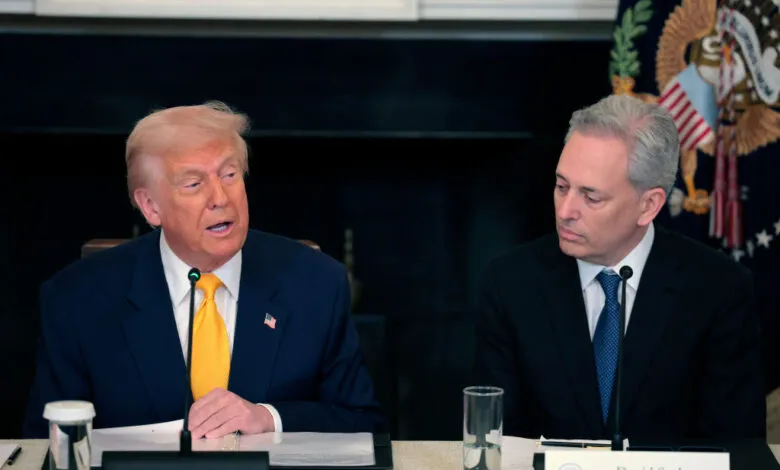

“White House AI czar David Sacks continues to do the bidding of Big Tech at the expense of regular, hardworking Americans,” said Brendan Steinhauser of The Alliance for Secure AI. “This framework seeks to prevent states from legislating on AI and provides no path to accountability for developers for the harms caused by their products.”

Conversely, many in the tech industry welcome the clarity of a single national standard. Teresa Carlson of General Catalyst Institute noted it is “exactly what startups have been asking for” to build and scale without navigating conflicting local laws.

On the contentious issue of child safety—a major flashpoint in current AI debates—the framework places emphasis on parental tools over corporate accountability. It states that “parents are best equipped to manage their children’s digital environment” and calls on Congress to provide them with controls for privacy and device use. While it says platforms should implement features to reduce risks like sexual exploitation, it uses qualifiers like “commercially reasonable” and does not establish clear, enforceable mandates.

Regarding copyright, the document seeks a middle path, acknowledging the need to protect creators while also allowing for the “fair use” of existing works to train AI systems. This language aligns with arguments made by AI companies facing numerous lawsuits over their training data.

The framework’s primary guardrails focus on free speech and preventing government censorship. It urges Congress to block federal agencies from coercing AI providers to “ban, compel, or alter content based on partisan or ideological agendas.” This emphasis on protecting “lawful political expression” builds upon a prior executive order targeting so-called “woke AI.” However, the vague distinction between censorship and necessary content moderation could complicate efforts to address misinformation or election interference.

Samir Jain of the Center for Democracy and Technology observed a contradiction, noting that while the framework opposes government coercion based on ideology, the administration’s own “woke AI” order appears to do precisely that. The proposal emerges alongside a lawsuit from AI company Anthropic, which alleges the Defense Department retaliated against it for refusing certain military applications, a dispute in which the President has publicly criticized the company.

Ultimately, the framework champions a pro-growth, accelerationist model for AI, seeking to remove barriers it deems outdated. By consolidating regulatory authority and emphasizing innovation, it sets the stage for a significant political and legal debate over who should govern the risks and rewards of this transformative technology.

(Source: TechCrunch)