GPT-5.4 Matches or Beats Human Professionals 83% of the Time in Professional Tests

Summary

– OpenAI has released GPT-5.4, a new reasoning model that matches or outperforms human professionals 83% of the time on real-world tasks, according to OpenAI’s internal testing.

– This performance was measured using the GDPval benchmark, which evaluates AI across 44 occupations in nine major industries that contribute significantly to the US economy.

– The model shows rapid improvement, with its 83% score representing a substantial jump from the 71% achieved by the GPT-5.2 model released just three months prior.

– GPT-5.4 introduces enhanced capabilities, including improved tool use, computer vision, native computer control, and advanced coding that integrates strengths from prior models.

– The release has significant implications for the future of work: it could augment human productivity, but it also risks displacing workers in high-skill, knowledge-based professions.

The latest iteration of OpenAI’s flagship model, GPT-5.4, demonstrates a staggering leap in capability, reportedly matching or exceeding the performance of human professionals 83% of the time in a comprehensive evaluation. That result comes from GDPval, a benchmark designed to measure AI effectiveness on economically significant real-world tasks. The assessment spans nine major industries and 44 distinct occupations, from finance and software development to healthcare and manufacturing, giving a broad view of the model’s practical utility.

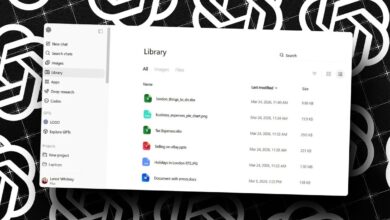

OpenAI describes GPT-5.4 as its most advanced and efficient model for complex professional work. It is available through the API and is rolling out to paid ChatGPT tiers and the Codex programming tool. Compared with its predecessor, GPT-5.2, the company says GPT-5.4’s responses are 18% less likely to contain errors overall, and its individual claims are 33% less likely to be false. This gain in reliability is a critical step for professional applications where accuracy is paramount.

The naming convention, however, has caused some confusion. GPT-5.4 arrives soon after the GPT-5.3 models, which included a Codex variant and an “Instant” chat model. OpenAI explains that GPT-5.4 is a mainline “Thinking” model that incorporates the frontier coding capabilities of GPT-5.3-Codex, and says the version jump simplifies the choice of model within Codex. The company also indicates that the “Instant” and “Thinking” model lines may evolve at different paces going forward.

The construction of GDPval is as noteworthy as the results. OpenAI collaborated with seasoned professionals to develop task sets that mirror the day-to-day responsibilities of each occupation, and these tasks underwent multiple rounds of expert review. For instance, one manufacturing engineering task involved designing a jig or fixture to manage cable spools in mining operations. Grading was conducted blindly by human professionals in each field, who did not know whether they were evaluating AI output or the work of a peer. OpenAI later built an automated grading system based on these human evaluations to streamline future testing.

The progression of scores highlights a rapid pace of improvement. GPT-5.1 scored 38.8% on GDPval in November. In December, GPT-5.2 nearly doubled that to 70.9%. Now GPT-5.4 has reached 83%. Ethan Mollick, a Wharton professor, has called GDPval “probably the most economically relevant measure of AI ability.” The implication is significant: on tasks that take human professionals four to eight hours, the AI now matches or beats them in most head-to-head comparisons.

Industry feedback underscores the impact. Daniel Swiecki of Walleye Capital noted that on the firm’s most challenging internal finance evaluations, GPT-5.4 improved accuracy by 30 percentage points over prior models, significantly expanding the potential for automation in investing. This performance is a double-edged sword. It can powerfully augment human professionals, enabling them to accomplish more, faster. At the same time, it raises legitimate concerns about the displacement of high-skill, high-value jobs as AI capabilities mature.

Beyond overall test scores, GPT-5.4 introduces enhanced core functionality. Its tool use is more refined, allowing AI agents to execute multi-step workflows with greater accuracy and efficiency. Its computer vision is stronger at interpreting complex images and documents. Perhaps most significantly, within the API and Codex, it introduces native computer-use abilities, which let agents operate software directly through screenshots, keyboard commands, and mouse controls to automate workflows across applications. For coding, it combines the strengths of GPT-5.3-Codex with improved reasoning and tool use.

The arrival of such a capable model forces a moment of reflection for knowledge workers everywhere. The future likely holds a mix of augmentation and replacement, varying by role and industry. For many, the strategic response involves deepening their understanding of these tools and integrating them into workflows to enhance personal productivity and maintain a competitive edge. As GPT-5.4 becomes widely available, its real-world adoption will ultimately determine whether it serves primarily as an unparalleled assistant or as a formidable competitor.

(Source: ZDNET)