Pentagon Tested OpenAI Models Via Microsoft Despite Military Ban

Summary

– OpenAI’s recent military deal has drawn internal criticism and demands that CEO Sam Altman be more transparent, following the collapse of a similar Anthropic contract.

– OpenAI’s 2023 usage policy banned military use, but the Pentagon was already accessing AI models via Microsoft’s Azure OpenAI Service, which operates under separate terms.

– OpenAI removed its blanket ban on military use in early 2024 and later announced a partnership with Anduril for national security work, specifically on unclassified projects.

– OpenAI declined a partnership with Palantir for classified work, deeming it too high-risk, but continues to work with Palantir in other capacities.

– Dozens of OpenAI employees have expressed concerns in internal forums, questioning the reliability of their models for sensitive military applications.

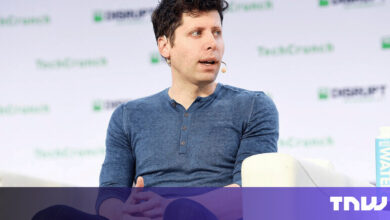

Recent scrutiny of OpenAI’s evolving relationship with the U.S. military highlights a complex history of shifting policies and corporate partnerships. CEO Sam Altman faced internal criticism after the company secured a defense contract, a move that followed the collapse of a similar deal involving rival Anthropic. Altman publicly acknowledged the situation appeared “sloppy,” but this episode is part of a broader pattern of ambiguous guidelines surrounding military applications of the firm’s artificial intelligence.

Back in 2023, the company’s official usage policy contained a clear prohibition against military use. Despite this, some OpenAI staff learned that the Pentagon had already begun testing models through Azure OpenAI Service, a Microsoft product offering access to the startup’s technology. Microsoft, a longtime defense contractor and OpenAI’s largest investor, holds broad licensing rights. The discovery raised an immediate question: did OpenAI’s ban apply to a partner’s commercial offerings? Employees reported seeing Pentagon officials in OpenAI’s San Francisco offices, deepening their unease and confusion.

Spokespeople for both companies clarified that Azure OpenAI operates under Microsoft’s own terms of service, not OpenAI’s internal policies. A Microsoft representative stated the service became available to the U.S. government in 2023, though it was not approved for top-secret workloads until much later. An OpenAI spokesperson emphasized the importance of engaging with national security to ensure safe and responsible deployment, noting efforts to keep employees informed.

This ambiguity persisted until early 2024, when OpenAI formally revised its policies, removing the outright military ban. Many employees first learned of this significant change from external news reports, not internal communications. Leadership later discussed the cautious new approach in a company-wide meeting.

By the end of that year, OpenAI announced a partnership with defense technology firm Anduril for national security missions. Internally, the company assured staff this collaboration was narrowly scoped, focusing only on unclassified projects. This contrasted sharply with an agreement between Anthropic and Palantir for classified military work. OpenAI itself had declined an invitation to join Palantir’s “FedStart” program, deeming it too high-risk, though the companies now cooperate in other areas.

The Anduril announcement prompted dozens of concerned employees to organize a dedicated Slack channel. A core worry was that the company’s models, which some viewed as unreliable for basic commercial tasks, were fundamentally unsuited for high-stakes battlefield applications. The Department of Defense did not respond to requests for comment on these developments.

(Source: Wired)